From 2005, Intel was led by Paul Otellini, who had a hand in every crucial decision the company made. Otellini stepped down in 2013 (he passed away in 2017) and handed the reins to Brian Krzanich. Krzanich's five-year tenure was relatively lackluster: throughout this period Intel kept releasing products on its 14 nm, 14+ nm, and 14++ nm processes, and the Xeon segment was not immune to the effects. Krzanich resigned in 2018, and CFO Bob Swan took over, first as interim CEO and then as CEO from 2019. It wasn't until 2021, when Pat Gelsinger, an Intel veteran who had been mentored by former CEO Andrew Grove, returned, that things gradually started getting back on track.

Before delving into Emerald Rapids (EMR), a brief note on naming. Intel currently offers five Xeon segments: Scalable Processor (SP), Max, W, D, and E. SP is the top-tier line with the full feature set, so the appropriate commercial name for Emerald Rapids would be Xeon Scalable 5th generation. For some reason, though, Intel's product presentations refer only to Intel 3rd generation (Ice Lake), 4th generation (Sapphire), and 5th generation (Emerald), which invites confusion with older Xeon products and with the Max, W, D, and E lines. For the purposes of this discussion, let's overlook that detail and treat "Xeon Scalable 5th generation" and "Xeon 5th generation" as the same thing.

Calling EMR a bug fix or a refresh of Sapphire Rapids (SPR) seems apt, since the two chip lines share fundamentally the same microarchitecture (Golden Cove in SPR and Raptor Cove in EMR; the two cores differ mainly in their client-market variants, while the server versions are essentially the same). The primary difference is packaging: EMR comes in three configurations, XCC (high core count), MCC (medium), and LCC (low), whereas SPR offers only XCC and MCC. SPR's XCC, however, is built from 4 smaller chiplet dies (which could be regarded as LCC dies, had Intel also sold them as a standalone package), while EMR's XCC is built from 2 MCC-class dies. Each of those dies carries up to 32 cores, so EMR's XCC tops out at 64 cores, whereas SPR's XCC stops at 60 (each Sapphire LCC die has at most 15 cores).

Intel's Xeon chip roadmap saw the introduction of Ice Lake (3rd generation) chips in 2021

The LCC configuration of Emerald, for its part, has up to 20 cores. If Intel packaged 4 LCC dies into one XCC configuration, as it did with Sapphire, the finished chip could reach up to 80 cores. However, the Emerald LCC dies do not appear to be designed for die-to-die interconnection the way the Sapphire LCC dies are, so this option may not exist. As a result, the highest-end EMR configuration edges out SPR by only 4 cores (64 versus 60).
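
The core-count arithmetic above is simple enough to check in a few lines. The following is a minimal Python sketch that only multiplies the die counts and per-die core limits quoted in the text; the dictionary and function names are illustrative and do not come from Intel, and the "4x LCC" entry is the hypothetical package discussed above, not a real product.

```python
# (number of dies, maximum cores per die), as quoted in the article.
PACKAGES = {
    "SPR XCC": (4, 15),
    "EMR XCC": (2, 32),
    "EMR 4x LCC (hypothetical)": (4, 20),  # not an actual Intel package
}


def max_cores(dies: int, cores_per_die: int) -> int:
    """Upper bound on cores for a package built from identical dies."""
    return dies * cores_per_die


totals = {name: max_cores(*config) for name, config in PACKAGES.items()}

for name, total in totals.items():
    print(f"{name}: up to {total} cores")

# Gap between the two real top-end packages.
xcc_gap = totals["EMR XCC"] - totals["SPR XCC"]
print(f"EMR XCC leads SPR XCC by {xcc_gap} cores")
```

The sketch treats every die as fully enabled, which is why it gives maximums rather than the core counts of specific models.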

Sapphire Rapids XCC is composed of 4 die chiplets. Emerald Rapids XCC utilizes only 2 die chiplets

Intel did not only change the packaging and die interconnect with Emerald; other aspects also make Xeon Scalable 5th generation more 'worthwhile' than the 4th generation. In particular, Emerald's memory controller supports up to DDR5-5600, up from DDR5-4800 on Sapphire. You could try overclocking the memory on Sapphire to reach the same figure, but that is not advisable with server chips: a system worth tens of thousands of USD makes for a high-stakes toy if something goes wrong, and nobody plays the overclocking game in a datacenter that has to run 24/7.
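
To put the DDR5-5600 versus DDR5-4800 gap in perspective, here is a rough Python sketch of theoretical peak memory bandwidth. It assumes a 64-bit (8-byte) data path per DDR5 channel and 8 memory channels per socket; the channel count is not stated in this article and is an assumption, and sustained real-world bandwidth will be lower than these peak figures.

```python
BYTES_PER_TRANSFER = 8   # 64-bit data path per DDR5 channel
ASSUMED_CHANNELS = 8     # assumption: channel count is not given in the article


def peak_bandwidth_gbs(mega_transfers_per_s: int,
                       channels: int = ASSUMED_CHANNELS) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    megabytes_per_s = mega_transfers_per_s * BYTES_PER_TRANSFER * channels
    return megabytes_per_s / 1000


spr_peak = peak_bandwidth_gbs(4800)   # Sapphire Rapids, DDR5-4800
emr_peak = peak_bandwidth_gbs(5600)   # Emerald Rapids, DDR5-5600
uplift = emr_peak / spr_peak - 1

print(f"SPR peak: {spr_peak:.1f} GB/s per socket")
print(f"EMR peak: {emr_peak:.1f} GB/s per socket")
print(f"Uplift:   {uplift:.1%}")
```

Whatever the channel count, the relative uplift depends only on the ratio of the two transfer rates.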

Another significant change in EMR is the L3 cache, which is tripled compared to Sapphire, to as much as 320 MB. This comparison is against the standard Sapphire chip, not the variant with 64 GB of HBM2e memory. With a threefold increase in L3 capacity, EMR's real-world performance should be noticeably higher even though its core count rises only slightly (much as AMD's 3D V-Cache parts compare with their standard versions).

Emerald also raises UPI (Ultra Path Interconnect, the link between processor sockets) bandwidth over Sapphire: 20 GT/s versus 16 GT/s. This matters for customers building 2P (2-CPU) servers. One downside is that EMR does not support 4P or 8P configurations as Sapphire does. This is not particularly crucial, since the market for 4P and 8P systems is relatively narrow; unless EMR were a long-term strategic product, supporting those configurations would only add design complexity without significant practical benefit.

The main differences between Emerald and Sapphire Rapids

Compute Express Link (CXL) is also upgraded on Emerald, which supports Type 1, Type 2, and Type 3 devices (Sapphire supports only Type 1 and Type 2). Type 1 lets peripheral processors (not CPUs) that have no memory of their own use the CPU's DRAM for data, over the PCI Express bus. Type 2 applies to accelerators (GPU, FPGA, ASIC) with their own memory (GDDR or HBM), which they share with the CPU and vice versa. Type 3 covers memory expansion devices, which can combine various memory types and make the added capacity and bandwidth available to devices that support the CXL protocol.

Though not strictly a 'difference', a very useful aspect of EMR is that it keeps the LGA 4677 socket used by SPR. Upgrading from Sapphire to Emerald should therefore cost considerably less than buying an entirely new platform, depending of course on each customer's long-term needs.

In fact, Intel's AI push began with Sapphire, whose AMX instruction set extension is optimized for 8-bit and 16-bit operations (float, bfloat, int). This stands out when comparing Sapphire Rapids with AMD's EPYC Genoa parts, which are not as optimized for AI. AI performance on Sapphire is two to three times higher with AMX enabled than with it disabled, giving Intel a significant edge in this arena.

CXL on Emerald Rapids adds Type 3 support

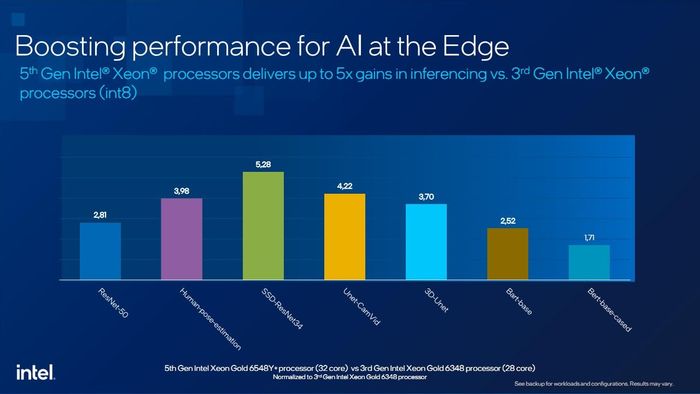

AI capabilities of Emerald improve over Sapphire Rapids

Unsurprisingly, AI remains a focus on Emerald. Specifically, EMR adds one more turbo frequency level than SPR, which lets the chip sustain turbo longer while running AMX instructions and so speeds up AMX processing. The tripled L3 cache and the higher DDR5 memory bandwidth also contribute significantly. According to Intel's announcement, Emerald's AI performance is 1.1 to 1.4 times that of Sapphire. Against Ice Lake, which lacks AMX, the gap is beyond dispute.

Comparison of AI performance between Emerald (5th) and Sapphire (4th) as well as Ice Lake (3rd)

AI is not the sole focus of Intel's attention; High Performance Computing (HPC) performance has also been further 'fine-tuned'. These improvements are of limited practical use, however, because they center on AVX-512 instructions, which relatively few developers target. Most developers still prefer AVX2 for its stability and lower hardware requirements (many of Intel's own chips do not support AVX-512).
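
Because AVX-512 is not available everywhere, software that wants to use it typically needs a fallback path. The sketch below shows one way such a check might look in Python: it parses a Linux-style `/proc/cpuinfo` flags line and picks the widest supported code path. The sample text is a made-up excerpt for illustration, and the function names are hypothetical; only the flag names `avx2` and `avx512f` are the ones Linux reports.

```python
# Hypothetical, shortened /proc/cpuinfo excerpt used only for illustration.
SAMPLE_CPUINFO = (
    "processor\t: 0\n"
    "model name\t: example server CPU\n"
    "flags\t\t: fpu sse2 sse4_2 avx avx2 avx512f\n"
)


def parse_cpu_flags(cpuinfo_text: str) -> set:
    """Return the feature flags from the first 'flags' line, if any."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, values = line.partition(":")
            return set(values.split())
    return set()


def pick_simd_path(flags: set) -> str:
    """Choose the widest SIMD code path the CPU advertises."""
    if "avx512f" in flags:
        return "avx512"
    if "avx2" in flags:
        return "avx2"
    return "baseline"


sample_flags = parse_cpu_flags(SAMPLE_CPUINFO)
print(pick_simd_path(sample_flags))
```

On a real Linux machine the same functions could be fed the contents of `/proc/cpuinfo`; other operating systems expose CPU features differently.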

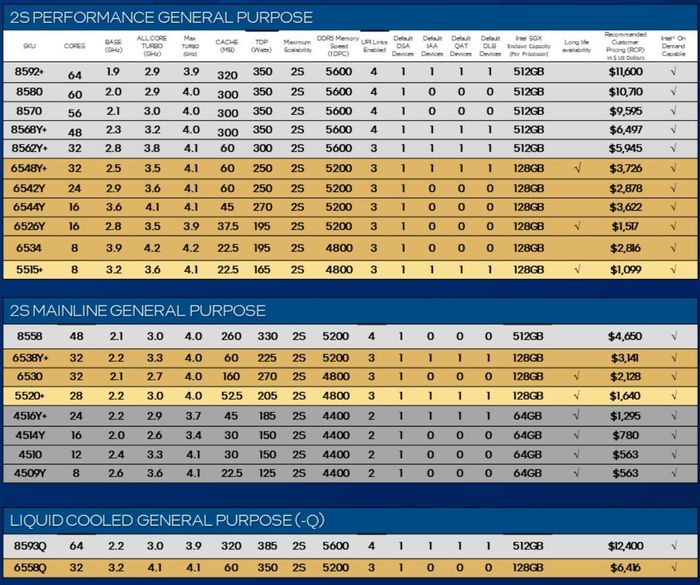

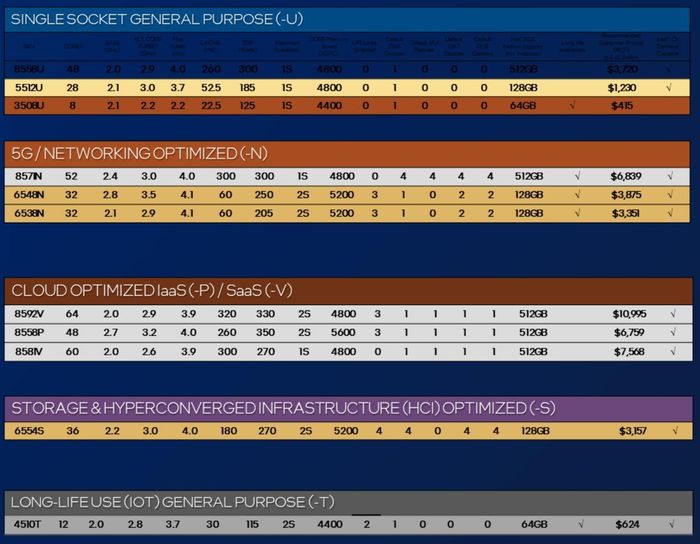

Although it is a refresh of Sapphire Rapids, the launch of Emerald Rapids, or Xeon Scalable 5th generation, still leaves customers rather puzzled by the number of models and their names. Fortunately, grouping the products by usage makes it easier to find the most suitable model. EMR has as many as 8 product groups, but in practice only 2 are of main interest: the High-Performance group and the Mainline group.

As for naming, a Xeon model number can be split into 4 parts: the first digit (1), the second digit (2), the last 2 digits (3), and the special characters (4). Part (1) indicates the product tier (high, mid, low); (2) indicates the chip generation (3 for Ice Lake, 4 for Sapphire, 5 for Emerald); (3) reflects the chip's configuration; and (4) denotes special features, e.g., Q for liquid cooling, U for 1P usage, N for 5G network optimization.
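
The four-part scheme above can be sketched as a small parser. This is an illustrative Python example, not an official Intel tool: the class and function names are hypothetical, and the lookup tables contain only the generations and suffixes mentioned in this article, so any other suffix character is reported as unknown rather than guessed.

```python
import re
from dataclasses import dataclass

# Only the values named in the article; anything else is left unknown.
GENERATIONS = {3: "Ice Lake", 4: "Sapphire Rapids", 5: "Emerald Rapids"}
SUFFIXES = {
    "Q": "liquid cooling",
    "U": "1P usage",
    "N": "optimized for 5G networks",
}

# (1) tier digit, (2) generation digit, (3) two config digits, (4) suffix.
MODEL_RE = re.compile(r"^(\d)(\d)(\d{2})([A-Z+]*)$")


@dataclass
class XeonModel:
    tier: int
    generation: str
    config: str
    features: list


def parse_model(model: str) -> XeonModel:
    """Split a model number such as '3508U' into its four parts."""
    match = MODEL_RE.match(model.strip())
    if match is None:
        raise ValueError(f"unrecognized model number: {model!r}")
    tier, gen, config, suffix = match.groups()
    features = [SUFFIXES.get(ch, f"unknown suffix {ch!r}") for ch in suffix]
    return XeonModel(
        tier=int(tier),
        generation=GENERATIONS.get(int(gen), "unknown"),
        config=config,
        features=features,
    )


print(parse_model("3508U"))
```

For example, `parse_model("3508U")` yields tier 3, generation "Emerald Rapids", configuration "08", and the single feature "1P usage".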

Product list and pricing of Emerald Rapids chips

As the image above shows, the highest-configuration EMR models are also the most expensive. The flagship Xeon 8592+ is priced at a hefty $11,600 and offers 64 cores with base/turbo/maximum turbo frequencies of 1.9/2.9/3.9 GHz and support for up to DDR5-5600. A mid-range model such as the Xeon 6548Y+, with 32 cores and corresponding frequencies of 2.5/3.5/4.1 GHz, supports only up to DDR5-5200 and costs $3,726. The most budget-friendly option, at just $415, is the Xeon 3508U with 8 cores, frequencies of 2.1/2.2/2.2 GHz, and modest DDR5 support at 4400 MT/s.
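
One way to compare these three models is price per core. The short Python sketch below uses only the prices and core counts quoted above; the variable names are illustrative, and price per core ignores clock speed, cache, and memory support, so it is a rough yardstick rather than a buying recommendation.

```python
# (model, cores, list price in USD), as quoted in the article.
MODELS = [
    ("Xeon 8592+", 64, 11600),
    ("Xeon 6548Y+", 32, 3726),
    ("Xeon 3508U", 8, 415),
]


def price_per_core(price_usd: float, cores: int) -> float:
    """List price divided by core count."""
    return price_usd / cores


per_core = {name: price_per_core(price, cores) for name, cores, price in MODELS}

for name, value in per_core.items():
    print(f"{name}: ${value:.2f} per core")
```

By this measure the flagship costs noticeably more per core than the mid-range and entry models, which is consistent with the premium the text describes for the highest configurations.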
