Microsoft and Google: Pioneering the AI Revolution

The Art of Deception

Unveiling the Intricacies of ChatGPT

The Foundation of Success: Large Language Models

The Imperfections of AI: Unveiling the Truth Behind Chatbots

Chatbots like ChatGPT have even advised users struggling with mental health issues to commit suicide, and amplified racial and gender discrimination...

Some argue that AI can learn from and correct its mistakes, while others point out that the Internet is already full of such nonsense and humans still thrive.

However, The Verge argues that there is no guarantee the lying problem of chatbots can be fully solved, because fabricating information is built into their technical foundation. Companies also cannot spend all day hunting for and fixing errors, even though user safety will depend on these AIs once they are widely deployed.

Correctness Defined

In information retrieval, presenting a single correct answer (the "One True Answer") is often perilous. This is what drew criticism of Google 10 years ago, when it introduced the short answer feature at the top of the results page for some searches.

Experts Chirag Shah and Emily M. Bender report that AI makes correctness too clear-cut and simplistic, taking away users' ability to explore on their own, synthesize information, and evaluate content.

This is extremely dangerous because AI could shape what users know, setting the standard for information instead of offering multiple answers for users to judge for themselves.

In fact, Google has tried to promote a "No One Right Answer" (NORA) mindset, but under competitive pressure from rivals like Microsoft, many are likely to disregard this standard and join the race for profit.

Internet Annihilation

AI responses are often synthesized from content on many websites across the Internet. However, the traffic that chatbots like ChatGPT receive does not flow back to those websites.

As a result, the websites that serve as sources will see less traffic and gradually die off for lack of revenue.

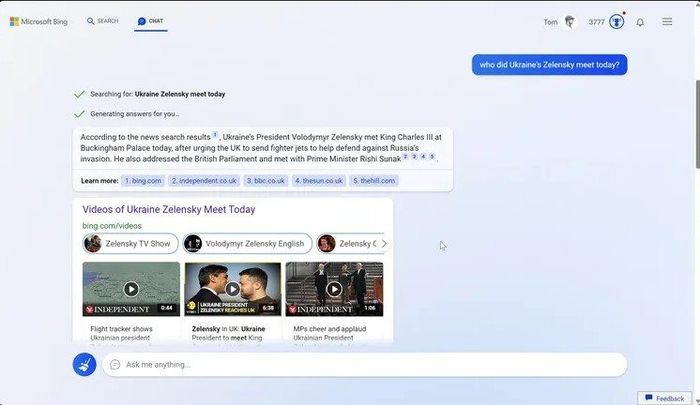

Microsoft argues that Bing includes source information so that users can visit the original pages. However, AI is expected to give accurate answers directly and to summarize lengthy content. Given that advantage, how many users will still want to return to the source page for verification?

Spread of Toxicity

Another factor that makes AI extremely dangerous is its uncontrollable self-learning ability. Because AI adjusts its responses to each user's question, malicious actors can feed it harmful information and lead chatbots to treat that information as correct.

This process is called "jailbreaking AI" and can be carried out without any programming knowledge. All the bad actors need is persuasive language.

This method can not only feed harmful information to chatbots but also instruct them to play "bad actors" in a fictional role-playing game, thereby affecting the AI's judgment and self-learning ability.

AI Cultural Clash

Chatbots do not truly understand what humans are saying, so their answers are not always accurate. If AI is widely deployed, this could spark conflicts over culture, religion, or even politics.

Users will frequently be infuriated by answers that are offensive in terms of culture, gender, or religion when interacting with chatbots.

For now, people are still lenient towards ChatGPT because it is new and is expected to learn and improve gradually. But what if ChatGPT settles on answers that benefit the majority of users and harm the minority?

Recognizing this, Microsoft's Bing has tried to show the sources behind its answers, but who will verify that those sources are accurate?

In fact, the "inhumanity" of AI and algorithms is one of the reasons why Facebook, the world's largest social network, has been criticized. When algorithms indiscriminately post and spread controversial information, they can drag the company into scandal while destabilizing society.

This happened during the Brexit vote in the UK and the US presidential elections in 2016 and 2020.

Money Burn

Although there are no precise figures, experts in the field agree that AI chatbots consume more resources than the algorithms behind regular search engines.

First, companies have to pour hundreds of millions of USD into each iteration of AI computation. This is why Microsoft poured billions of USD into OpenAI, the company behind ChatGPT, yet the startup is still losing money heavily despite transitioning from a non-profit organization to a business entity.

Fortune reported that in 2022, OpenAI had revenue of 30 million USD but spent 416.45 million USD on server and data processing costs, more than 89 million USD on employee salaries, and 39 million USD on other operating expenses. The total loss was 544.5 million USD.
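As a rough sanity check, the figures quoted above can simply be added up. The sketch below uses only the numbers in this article (rounded as quoted, in millions of USD); the variable names are illustrative and not taken from Fortune's report.

```python
# Figures as quoted in this article, in millions of USD.
revenue = 30.0
expenses = {
    "servers_and_data": 416.45,
    "salaries": 89.0,          # quoted as "more than 89 million"
    "other_operating": 39.0,
}

# Sum of the three expense items listed in the article.
total_expenses = sum(expenses.values())

# What would remain as a loss if the quoted revenue were subtracted.
expenses_minus_revenue = total_expenses - revenue

print(f"Total expenses: {total_expenses:.2f}M USD")
print(f"Expenses minus revenue: {expenses_minus_revenue:.2f}M USD")
```

On these rounded inputs, the three expense items alone come to about 544.45 million USD, which is close to the quoted loss figure, while subtracting the 30 million USD of revenue would leave roughly 514.45 million USD. The quoted loss may therefore follow a different accounting basis than a simple expenses-minus-revenue calculation.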

Although OpenAI has released a paid version of ChatGPT, it cannot offset the resource cost of each answer, especially when users ask long and complicated questions.

Furthermore, this cash burn turns Microsoft and Google into industry giants protected by very high barriers to entry. High costs combined with uncertain revenue keep new players with limited budgets out of the market.

Copyright

AI technology is advancing rapidly, but lawmakers are gradually paying attention to it. Once governments issue regulations, AI may not be as appealing as people think.

For example, if the European Union (EU) mandates that AI-developing companies must pay copyright fees for the content they use, will Microsoft and Google still be profitable? If they pass these costs on to users or advertising businesses, will they still be more attractive than traditional search engines?

Then there are privacy rights and countless other regulations that have not yet been considered for AI. These are all major challenges, and the excitement of companies in this field may be premature.

*Source: BI, The Verge