Apple Vision Pro is a 'spatial interaction' device, and the way you interact with it differs from other devices. It is operated entirely through eye tracking and hand gestures, which are picked up by cameras placed beneath the glass. In a recent developer session, Apple described the specific gestures that work with Vision Pro and how some of them will function.

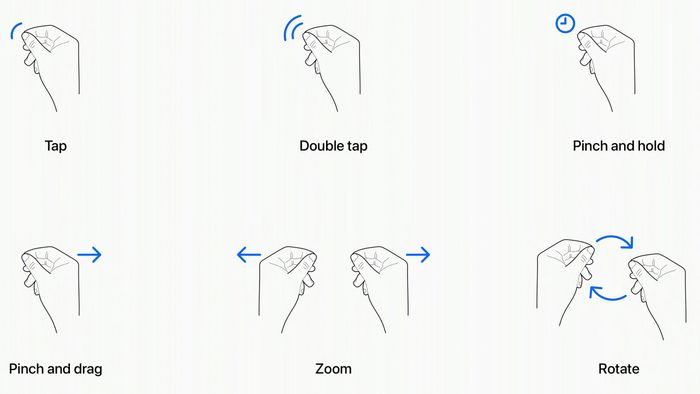

- Tap: Bring the thumb and index finger together to select apps or perform actions the user is looking at. It's equivalent to touchscreen interaction on the iPhone.

- Double Tap: Bring the thumb and index finger together twice in quick succession. It's akin to right-clicking on a Mac.

- Tap and Hold: This action is similar to long-pressing on an iPhone. It allows users to arrange apps or drag images and videos between applications.

- Tap and Drag: This gesture can be used for scrolling and moving windows around. Users can scroll horizontally or vertically depending on the use case.

- Zoom: This gesture requires both hands. Users pinch their fingers together and pull their hands apart to zoom in; moving the hands back toward each other presumably zooms out. App windows can also be resized by dragging from the corners, much like the current feature on iPads.

- Rotate: This is the other two-handed gesture. It involves pinching the fingers together and rotating the hands to manipulate virtual objects, such as virtual characters like Mickey Mouse, virtual pets, or virtual household items.

All of these gestures work in conjunction with eye movements, which are followed by the headset's eye-tracking sensors.

Eye position is crucial in determining the target of a hand gesture. For instance, when you look at an app icon or another on-screen element, that element is highlighted, and you can then operate it with the gestures described above. While these are the six primary system gestures introduced by Apple, developers can also create custom gestures for their own apps to make interactions easier. Developers need to ensure that the gestures they design are common hand movements that everyone can perform, and that they can be repeated frequently without causing hand fatigue.
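The "look first, then gesture" idea can be pictured with a small conceptual model. The sketch below is not Apple's API and does not reflect how visionOS is implemented; `Element`, `element_under_gaze`, and `dispatch` are hypothetical names, and the 2D rectangles are a simplifying assumption. It only illustrates that the gaze point selects the target and the hand gesture is then delivered to that target.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Hypothetical on-screen element with a rectangular hit area."""
    name: str
    x: float
    y: float
    width: float
    height: float
    received: list = field(default_factory=list)  # gestures delivered so far

    def contains(self, gx: float, gy: float) -> bool:
        return (self.x <= gx <= self.x + self.width
                and self.y <= gy <= self.y + self.height)


def element_under_gaze(elements, gaze):
    """Return the topmost element at the gaze point, or None."""
    gx, gy = gaze
    # Assumption: later elements in the list are drawn on top.
    for element in reversed(elements):
        if element.contains(gx, gy):
            return element
    return None


def dispatch(elements, gaze, gesture):
    """Deliver a gesture (e.g. "tap") to whatever the user is looking at."""
    target = element_under_gaze(elements, gaze)
    if target is not None:
        target.received.append(gesture)
    return target


if __name__ == "__main__":
    icons = [Element("Photos", 0, 0, 100, 100),
             Element("Safari", 120, 0, 100, 100)]
    hit = dispatch(icons, gaze=(150, 50), gesture="tap")
    print(hit.name if hit else "nothing targeted")
```

In this model the hand can be anywhere when the pinch happens; only the gaze point decides which element receives the gesture.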

In addition to hand and eye input, Apple also mentioned that it will support other input accessories, including keyboards, trackpads, mice, and game controllers. I find these gestures quite natural and not too difficult to understand. The challenge for app developers on visionOS is making sure the custom gestures they design are genuinely easy to perform, because everyone has different hand movement habits.

That's the information so far about hand gestures on Vision Pro. Do you think these gestures are easy to use?

Source: MacRumors