If you've ever been to college or submitted a list to Mytour, you’re probably familiar with the advice: "Don’t reference Wikipedia." Despite this reputation for being unreliable, Wikipedia is the fifth-largest website in the world, and it serves as a key resource for millions, if not billions, of users. However, with its vast scale, any flaws in Wikipedia can potentially affect a huge number of people. This makes the following concerns particularly significant.

10. Clear Bias

We begin with the most glaring issue. Because Wikipedia is edited and maintained by the public, personal biases often find their way into the content. A 2012 study analyzed over 28,000 Wikipedia articles on US politics, searching for keywords commonly associated with Democrats and Republicans. The results showed that, over time, articles tend to shift from left-leaning to more neutral, which seems positive. However, this neutrality often results from contributors engaging in edit wars rather than from a natural progression towards fairness. In fact, a follow-up study in 2016 revealed that editors are more inclined to modify pages that present viewpoints opposed to their own, suggesting that personal agendas are at play.

In 2018, the same team of researchers compared 4,000 Wikipedia articles on US politics to those in Encyclopaedia Britannica. Their findings were alarming: 73% of Wikipedia articles were biased, while only 34% of Britannica's articles showed similar issues.

Unfortunately, bias isn't just a matter of individual editors; it also operates on a much larger scale. For instance, 84% of Wikipedia's editors are male, more articles are written by Europeans than by the rest of the world combined, and only 16% of articles on Sub-Saharan African topics are written by people from that region. Articles on the same subject can also vary greatly depending on the language they're written in.

9. Giving Equal Attention to Unequal Opinions

One of Wikipedia's greatest strengths lies in its ability to allow anyone to contribute, attracting some of the brightest minds without offering any financial compensation. This open model is what underpins Wikipedia's success, enabling it to cover highly specialized topics in detail. However, while this approach helped the platform expand, it did so at the cost of overall quality.

William Connolley, a software engineer specializing in climate modeling, runs several climatology websites and served as the Senior Scientific Officer in the Physical Sciences Division of the Antarctic Climate and Earth System project until 2007. Despite this extensive expertise, his edits are treated the same as anyone else's.

The Wikipedia entries on all things climate, including ClimateGate, have been written by the very same person, William Connolley, who was heavily involved with the main players (Mann, Jones, etc.).

It’s like having a trial where only the defense gets to present its case. pic.twitter.com/U5eTX7FvB6

— Kenneth Richard (@Kenneth72712993) December 21, 2019

As you might have expected, Connolley eventually found himself embroiled in an edit war over the Climate Change page. He accused his opponent of downplaying scientific facts, while his rival claimed that Connolley was suppressing opposing views. Wikipedia ultimately sided with the rival, restricting Connolley to just one edit per day. His experience is often cited in the scientific community as a prime example of the issues with Wikipedia’s policies.

8. Inconsistent Fact-Checking

Whenever there are concerns about the accuracy of information on Wikipedia, defenders usually argue that these issues are corrected over time, often quite rapidly. You might think this is especially true for larger pages, where a larger audience would increase the chances of spotting errors. However, that’s not always the case.

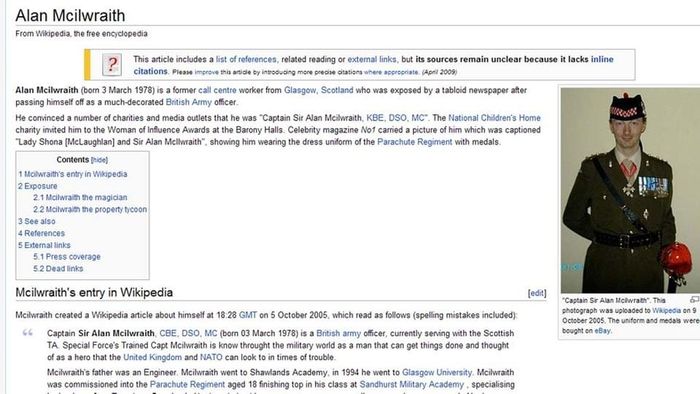

Some absurd misinformation is corrected quickly. One example is the page of Alan Mcilwraith, who fabricated an entire story about a distinguished military career. Despite never serving in the army, Mcilwraith used this false background to infiltrate charity boards and commit identity theft by ‘recruiting’ others. Wikipedia, however, wasn’t fooled by his stolen valor.

By contrast, take Hillary Clinton, one of the most well-known and polarizing public figures in the world. You’d think that her page, which gets millions of visits daily, would undergo constant scrutiny for both positive and negative biases, and that errors would be quickly corrected. But that’s not what happened. For 20 months, her Wikipedia page incorrectly stated that she had been the valedictorian at her graduation, even though she wasn’t. This wasn’t a malicious lie, nor a scandalous mistake. It was just wrong. While this may seem like a minor issue, it underscores how even basic, easily verifiable facts can remain uncorrected on the site for long periods.

7. The Falsehood Spiral

But does it really matter if Wikipedia contains inaccuracies? If you're conducting serious research, you should be verifying information through multiple sources. The real problem is that many of those sources now draw on Wikipedia themselves. Take the case of John Seigenthaler, an American journalist whose Wikipedia page was altered to falsely claim he was a suspected assassin living in the Soviet Union. It's important to note that no government would ever place a spy among journalists (with Operation Mockingbird being the only exception), clergy, or charity workers, since these groups are considered too vulnerable and often provide crucial aid. This makes an accusation of espionage against a journalist far more damaging than it would be in most other professions.

Other websites like Answers.com and Reference.com began pulling these false claims and publishing them as well. The misinformation was only uncovered when a curious colleague Googled Seigenthaler’s name and immediately contacted him. After lengthy legal battles, Seigenthaler succeeded in getting the falsehoods removed, but was never told who made the edits. A determined internet sleuth eventually uncovered the editor's identity. The person responsible was fired and issued an apology, stating they thought Wikipedia was a joke. In turn, Seigenthaler successfully campaigned to have the dismissal overturned.

In another incident in 2008, a group of teenagers added 'Azid' as a synonym for 'Korma', a prank that a Reddit user later claimed was a way to bully an Arab classmate. The entry remained on Wikipedia until 2014, by which time 'azid' had spread across thousands of websites as a legitimate term. In fact, the hoax was so successful that some even claim that 'azid' has always been a synonym for 'korma', which highlights just how influential a single revision can be.

6. Background Performers

Given that biased individuals can freely post content without significant consequences, it raises the question of who these individuals truly are. While the site does offer some diversity of thought, it’s clear that this diversity is more limited than we might hope.

A study examining 250 million edits over a decade revealed that 77% of content is created by a mere 1% of editors. Out of approximately 132,000 active contributors, around 1,300 people are responsible for shaping the vast majority of the world's most frequented source of information.
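To make those proportions concrete, here is a minimal Python sketch of the arithmetic. It assumes the 1% is taken from the roughly 132,000 active contributors quoted above, and that the remaining 23% of content is spread across everyone else; the variable names are illustrative and do not come from the study.

```python
# Figures quoted in the article; how they combine is an assumption.
active_contributors = 132_000
top_editor_percent = 1       # share of editors in the top group
top_content_percent = 77     # share of content that group produces

# Size of the top group: 1% of 132,000.
top_editors = active_contributors * top_editor_percent // 100
other_editors = active_contributors - top_editors

# Average share of all content produced per editor in each group.
share_per_top_editor = top_content_percent / top_editors
share_per_other_editor = (100 - top_content_percent) / other_editors

# How many times more content an average top editor produces.
output_ratio = share_per_top_editor / share_per_other_editor

print(f"Top editors: {top_editors}")
print(f"Average top editor produces about {output_ratio:.0f}x "
      f"the content of any other editor")
```

Under these assumptions, the top group works out to 1,320 people (the "around 1,300" above), each producing on average roughly 330 times as much content as a typical contributor outside it.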

This trend is even more pronounced in non-English language versions of the site. A famous case involves Sverker Johansson and his bot, Lsjbot, which together are responsible for creating between 80% and 99% of the content on the Swedish, Cebuano, and Waray language sites. While their work primarily focuses on geography, it also demonstrates the significant role bots can play in content creation. However, this reliance on bots presents its own issues, as the creators' biases influence what topics are covered and how. With so few individuals in control, and without proper accountability, the content inevitably reflects these limitations.

5. Spy vs Spy

Unsurprisingly, nearly all major corporations and institutions hire people to make sure Wikipedia portrays them in a positive light. There’s been no shortage of unfavorable content being removed or altered, and it's often traced back to someone with a clear agenda. Political parties like the DNC and GOP have edited not just their own pages but also those of their rivals. In the UK, Chuka Umunna’s staff were accused of labeling him as the 'British Barack Obama,' while a mysterious editor spent 75% of their time flattering Grant Shapps and 25% attacking his adversaries.

At the same time, the CIA and FBI have been caught editing articles on topics like Guantanamo Bay and the Iraq War. Intelligence agencies from Britain, Australia, Israel, and even Sweden have been caught engaging in what is referred to as 'conflict of interest' editing on Wikipedia, employing people to make flattering edits on a full-time basis.

ICYMI from 2007: The CIA and FBI edit Wikipedia to their taste https://t.co/neFsJfZq4y

— Peter Roman (@TsarKastik) November 6, 2019

These same tactics have been observed in the private sector, with companies like Microsoft, BP, the Koch brothers, and the Church of Scientology all hiring paid employees to edit Wikipedia. While you may feel this is something we should have already known, with so many individuals being paid to edit, one has to wonder how many of the 1,300 top editors are truly authentic.

4. Fundraising

Once a year, without fail, Wikipedia users are greeted by a banner urging them to donate so the site can stay free. This is perfectly reasonable: no one is forced to give, and the site is known for not displaying advertisements. The foundation behind it holds reserves of approximately $100 million, which shouldn’t raise concerns by itself, as it needs to be prepared for unforeseen situations.

At first glance, the Wikimedia Fundraising Report seems to suggest everything is fine. Major donations account for only 12% of the total, indicating that large sums aren’t a pressing concern. However, the classification of a 'major' donation as anything above $1,000 is puzzling, especially for a charity that frequently receives donations of $1 million. This leads to a skewed perception of large donations, with the average figure significantly reduced. Only two large donors, Google and the Brin Wojcicki Foundation, are recognized for contributing over $1 million. Excluding these two reduces the average by 14%. Moreover, the inclusion of smaller donations distorts the average further, despite them being classified as major gifts.

Looking deeper into the data, the picture becomes even more opaque. Eight percent of donations are categorized as chapter donations, which means that even large donations fall under this classification. Another 8% comes from recurring donations, which are often used by companies to remain anonymous while keeping their contributions just under the reporting threshold. An additional 7% comes from the vague category labeled 'Other'.

3. Deceptive Claims

While much of the misinformation found on Wikipedia stems from honest mistakes, differing opinions, or attempts to present oneself favorably, some is intentionally false. A prime example is the case of Moose Boulder. You may have heard it referred to as 'the largest island in the largest lake on the largest island in the largest lake on the largest island in the largest lake in the world.' It took two individuals not only to travel to the supposed location of the island but also to track down the source of its only known photo before realizing it was entirely fabricated. The reasons behind its creation remain unknown.

These fabrications can be far more dangerous than simply creating fake islands. A prime example is when Wikipedia listed KL Warschau as a major Nazi extermination camp. In truth, it was a small concentration camp used mainly for forced labor. The article also falsely stated that 200,000 non-Jewish Poles were gassed there, bringing the total number of Poles killed in Poland to 400,000—the same number and method used for the Jews. This lie allowed the argument to grow that the Holocaust was exaggerated, with the Jews being blamed for distorting history. The page was repeatedly edited to remove references to Jewish victims.

To Wikipedia’s credit, they did take down the false information as soon as it was pointed out. Sadly, it took them 15 years to act.

2. Subtle Redirection

People argue that you can’t trust a tweet, and similarly, many claim you can’t trust Wikipedia. The problem is that people do trust it. It’s not as simple as writing it off as ‘their problem.’ Falsehoods on Wikipedia can spread to other websites, cookbooks, news outlets, and more, with a significant impact on scientific papers and the information they use.

Many scientific papers are written with student involvement. When students are assigned a topic, one of the first things they do is turn to Wikipedia for an overview, using it as a springboard to better sources. Therefore, the content found on Wikipedia—whether accurate or not—has a direct influence on scientific research from the very beginning.

This isn’t just a theory or guesswork. A study conducted by MIT students found that when a topic appears on Wikipedia, it can influence the creation of up to 250 scientific papers on that very subject. There’s a clear link between whether something is included in Wikipedia and whether it’s likely to show up in a scientific paper. Even scientists aware of this phenomenon find themselves caught in it, as looking for other sources may lead them to one that has already been influenced.

1. Revision with Context

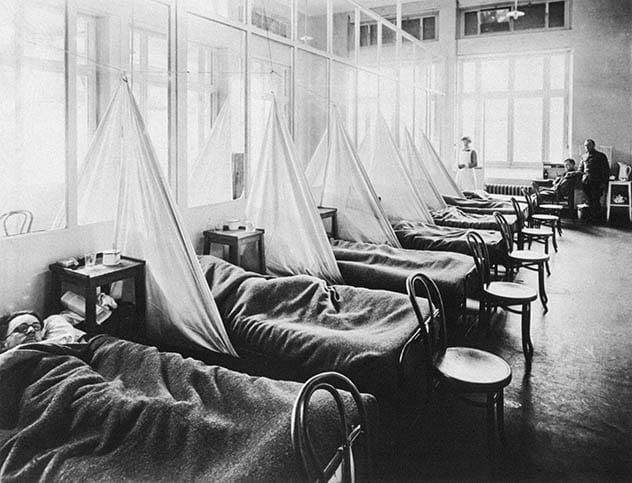

Last week, the Wuhan virus (Covid-19) was only one-fifth as deadly as the Spanish flu. This week, however, that figure has risen beyond 100%. The jump isn’t due to a sudden spike in coronavirus deaths, but to the fact that the fatality rate reported for the Spanish flu a century ago has dropped dramatically in recent reports.

The Wikipedia page for Spanish flu initially stated, 'an estimated 10% to 20% of those infected died.' After the rise of coronavirus, this figure was updated to say, '2-3% of those infected died.' This number, while originating from a WHO report, wasn’t specifically related to the Spanish flu and lacks a clear citation. It's likely an oversight that’s been taken advantage of, given it’s the lowest estimate, conveniently aligning with coronavirus death rates. Consequently, news outlets are now frequently reporting that Covid-19 has claimed as many lives as the Spanish flu, but in a shorter time frame. This plays into the hands of those benefitting from the hysteria surrounding the Chinese virus.

The article still maintained other unchanged claims, such as the death toll of 50-100 million people and the assertion that one-third of the world’s population was infected at some point. If you attempt to reconcile these conflicting numbers and trace them back, you’ll realize that there were supposedly as many as 4 billion cases of Spanish flu—more than twice the global population at the time.
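The reconciliation can be sketched in a few lines of Python: divide a death toll by a fatality rate to get the number of cases it implies. The function name and the 1918 world population of roughly 1.8 billion are assumptions added for illustration; the death tolls and fatality rates are the ones quoted above.

```python
# Assumption for illustration only: world population around 1918.
ASSUMED_WORLD_POPULATION_1918 = 1_800_000_000


def implied_cases(deaths: float, fatality_percent: float) -> float:
    """Number of infections implied by a death toll and a fatality rate (in percent)."""
    if fatality_percent <= 0:
        raise ValueError("fatality_percent must be positive")
    return deaths * 100 / fatality_percent


# Death tolls and fatality rates as quoted in the article.
death_tolls = (50_000_000, 100_000_000)
fatality_rates = (20, 10, 3, 2.5, 2)

for deaths in death_tolls:
    for rate in fatality_rates:
        cases = implied_cases(deaths, rate)
        share = cases / ASSUMED_WORLD_POPULATION_1918
        print(f"{deaths / 1e6:.0f}M deaths at {rate}% fatality "
              f"-> {cases / 1e9:.2f}B cases "
              f"({share:.0%} of assumed population)")
```

With the older 10% to 20% fatality rate, the implied case counts stay between 250 million and 1 billion, below the assumed population and compatible with a third of the world being infected. With the revised 2% to 3% rate, the upper death toll implies 4 billion cases or more, which is where the contradiction comes from.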