When you think about modern healthcare, various elements likely come to mind—a bustling operating room, or perhaps an ambulance rushing through traffic. Certain aspects of medicine have become so commonplace, it’s difficult to picture a time before them. But every innovation had its first occurrence. Here’s a list of ten pivotal advancements that have become staples in the evolution of modern medicine.

10. First Motorized Ambulance

Ambulances play a critical role in modern healthcare. In urgent situations, every second counts, so rapid transport of both patients and medical personnel is crucial. The first ambulances were drawn by horses and were used primarily on battlefields to carry wounded soldiers to safety and treatment. By the 1860s, horse-drawn ambulances were serving civilians as well, and could set out for an emergency within thirty seconds of receiving a call.

Chicago was the first city to introduce the motorized ambulance in 1899, thanks to a donation from five hundred local business leaders. New York followed suit a year later, receiving its own fleet of motorized ambulances. These new vehicles offered a smoother ride, improved comfort, and greater safety for patients, and they were significantly faster than their horse-drawn predecessors. A New York Times article from September 11, 1900, described these ambulances, noting they had a range of twenty-five miles and could reach speeds of up to sixteen miles per hour.

In 1905, the three-wheeled Palliser ambulance made its debut as the first gasoline-powered ambulance. Four years later, James Cunningham, Son & Co., a company known for making hearses, introduced the first mass-produced ambulance, powered by a four-cylinder internal combustion engine. Instead of a modern siren, this early version featured a gong. How fascinating is that?

9. First Use of Defibrillation

Defibrillators have become iconic in hospital dramas, where doctors dramatically shout 'clear!' before shocking a patient back to life. This device is a staple of modern medicine, known for its ability to restore a person’s heart rhythm during a life-threatening emergency. It works by delivering a shock that reestablishes the heart’s proper pace, though it doesn’t actually restart a stopped heart, as many people mistakenly believe. Today, automated defibrillators are often available in public places and can save lives, even when used by bystanders with no medical training.

The first successful defibrillation of a human took place in 1947. Throughout the early twentieth century, experiments on dogs had demonstrated that shocking a heart in ventricular fibrillation (VF) could be beneficial. Surgeon Claude Beck championed this technique. During surgery on a fourteen-year-old boy, the patient's heart went into VF after being irritated by anesthesia. After forty-five minutes of manual heart massage, shocks from defibrillator paddles were applied, restoring the heart’s normal rhythm. The boy made a full recovery, and the defibrillator went on to become standard equipment in medical practice.

8. First Indirect Blood Transfusion

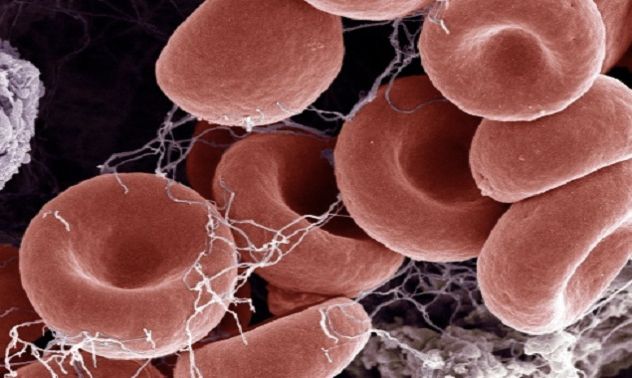

Blood transfusions are crucial for saving lives. While we often see them in action in emergency rooms, they’re also essential for life-saving surgeries and numerous medical treatments. Blood donations make a difference in a wide range of healthcare scenarios.

The practice of blood transfusion dates back to the seventeenth century, beginning with experiments between animals and later extending to human recipients. The early challenge was that blood outside the body would coagulate and solidify quickly, making human-to-human transfusion difficult. To get around this, the donor had to give blood directly to the recipient, so both had to be present for the procedure.

The first recorded blood transfusion that didn’t involve direct transfer from donor to recipient occurred in 1914, thanks to Belgian doctor Albert Hustin. He preserved the blood by mixing it with glucose and sodium citrate, the latter acting as an anticoagulant.

A few months after Hustin’s breakthrough, Luis Agote in Argentina repeated the procedure. This method, known as 'citration,' made it possible to store donated blood for later use. Today, blood donation is a global effort, with ninety-two million people donating blood annually, helping to save millions of lives.

If you're eligible to donate blood, please consider doing so. Readers in the US can visit http://www.americasblood.org; everyone else can look up local blood donation centers. Your donation can save lives, and you can even read Mytour on your phone while doing it.

7. First Medical Journal

Medical journals are the cornerstone of how medical knowledge is disseminated and rigorously peer-reviewed. The longest-running medical journal still in publication is the New England Journal of Medicine, which began in 1812. Today, hundreds of thousands of scientific journals are published annually, many of which focus solely on medical topics.

The first-ever journal solely dedicated to medicine was published in French in 1679, while the inaugural English-language medical journal, titled 'Medicina Curiosa,' followed five years later.

The full title of Medicina Curiosa was 'Medicina Curiosa: or, a Variety of New Communications in Physick, Chirurgery, and Anatomy, from the Ingenious of Many Parts of Europe, and Some Other Parts of the World.' Its first edition was released on June 17, 1684, containing advice on treating ear pain and a grim story of death caused by the bite of a rabid cat. Unfortunately, the journal only lasted for two issues.

6. First Intravenous (IV) Needle

In the nineteenth century, cholera was one of Europe’s most dreaded diseases, sparking intense medical research and numerous advancements, some of which are explored later in this list. At the time, there was no known cure for cholera. Because it is a bacterial infection, an effective treatment had to wait more than a century for the discovery of antibiotics. In the mid-1800s, care focused primarily on alleviating the symptoms. Cholera frequently killed its victims through extreme dehydration, as those affected could lose up to five gallons of fluid to diarrhea per day. Simply drinking water wasn't sufficient to rehydrate a person suffering from cholera.

Thomas Latta, like many physicians of his era, became deeply involved in the discussions surrounding cholera. A doctor named W. B. O’Shaughnessy had theorized about the use of intravenous injections but had never put it into practice. Inspired by this, Latta decided to give it a try. Reflecting on his decision, he wrote, “I at length resolved to throw the fluid immediately into the circulation. In this, having no precedent to direct me, I proceeded with much caution.”

Latta injected six pints of fluid into an elderly woman suffering from cholera and witnessed an astonishingly swift improvement. Unfortunately, she passed away several hours later after being handed over to a hospital surgeon who did not repeat the procedure. Even so, many doctors took note of the potential of intravenous fluid therapy, although it wasn’t until the late nineteenth century that its full value was recognized. Today, the IV drip is a staple of modern medicine, saving lives even in the most critical cases.

5. First Powder-Based Pills

Nearly everyone has taken a powdered pill at some point in their life. Pills are manufactured on an enormous scale, and a single factory can turn out billions of them every year.

Although pills in some form have existed for thousands of years, they were often little more than ground-up plant material. In the early 1800s, efforts to create pills from specific chemicals ran into several challenges. Coatings would frequently fail to dissolve, and the moisture required for pill creation often rendered the active ingredients ineffective.

In 1843, English artist William Brockedon was facing similar challenges with graphite pencils. He invented a machine capable of pressing graphite powder into solid blocks, creating high-quality drawing tools. A pharmaceutical manufacturer saw potential in this technology, and Brockedon’s invention was soon adapted to create the very first powder-based pills. This process was then scaled for mass production by the century's end, leading to the pill-dominated world we know today.

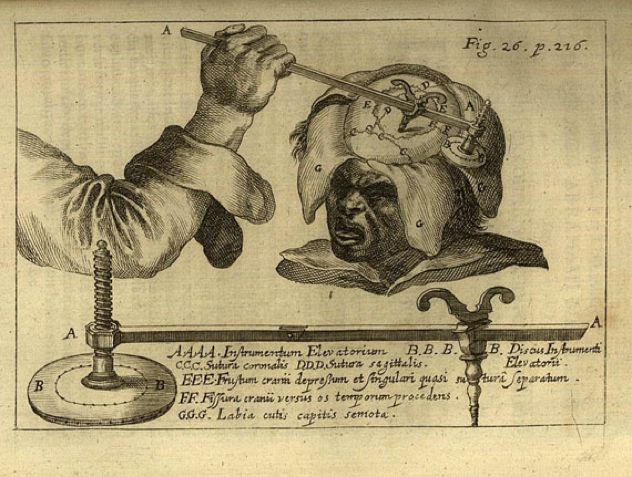

4. First Surgery Under General Anesthesia

Modern surgery is capable of remarkable accomplishments, but these feats are only made possible through anesthesia. The ability to render a patient immobile and free from pain is crucial in almost all surgical procedures. The first well-documented instance of surgery performed under general anesthesia occurred in 1804, thanks to Japanese physician Seishu Hanaoka.

Hanaoka administered a plant-based mixture to a sixty-year-old woman with breast cancer. Known as tsusensan, this concoction contained several active ingredients that rendered the patient pain-free after two to four hours, ultimately causing her to lose consciousness for up to a full day.

While this early anesthetic was not as safe as today's options, it proved effective, allowing Hanaoka to perform a partial mastectomy. He continued to use this mixture in many surgeries. By the time of his death over thirty years later, he had performed more than one hundred and fifty breast cancer surgeries, at a time when the idea of general anesthesia was just beginning to gain recognition in the Western world.

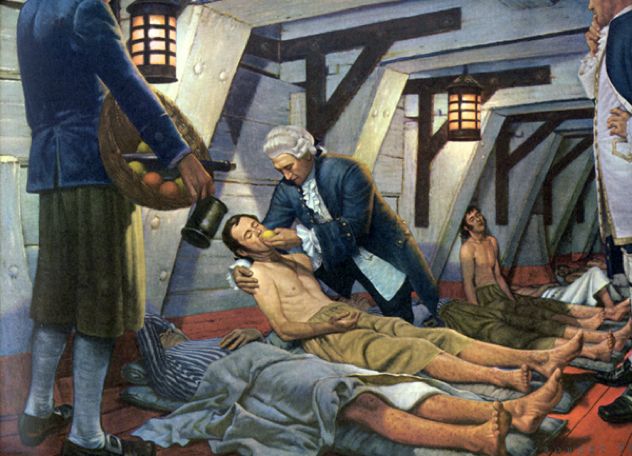

3. First Clinical Trial

Clinical trials are essential for determining which treatments are effective and which are not. In comparison, other forms of evidence, like personal stories or expert opinions, are less reliable. You can't trust a story that claims a drug worked for someone, as the improvement may simply be coincidental, and the person could have recovered without the drug's help.

The only reliable way to prove that a medical intervention works—beyond a mere placebo effect—is to test it on groups of people and observe if there's a consistent improvement among those receiving the treatment. This principle forms the backbone of nearly all modern medical research.

The very first clinical trial took place on a ship in 1747. Twelve sailors suffering from scurvy were split into six pairs, each receiving a different remedy. One pair tried vinegar, another tried seawater, but the real breakthrough came when one pair was given oranges and lemons. We now know that scurvy is caused by a deficiency in vitamin C, which is why the citrus pair saw such dramatic improvements. Within just six days, the two sailors who received the fruit had nearly recovered; one even returned to work, while the other was assigned to care for the remaining patients due to his much-improved health.

James Lind, the doctor who conducted the experiment, famously concluded: 'I shall here only observe that the result of all my experiments was that oranges and lemons were the most effectual remedies for this distemper at sea . . . perhaps one history more may suffice to put this out of doubt.' His conclusion proved true for scurvy, and in reaching it he unknowingly laid the foundation for modern medical experimentation, a core method still used in research today, nearly three centuries later.

2. First Use of Epidemiology

Epidemiology is the scientific study of disease patterns within populations. By employing both observation and statistical methods, epidemiologists identify trends, causes, and solutions for the prevention or treatment of various illnesses. This field serves as the foundation for public health policies. The term is derived from 'epidemic' and initially referred specifically to outbreaks of disease, but it has since broadened in scope.

Dr. John Snow, often hailed as the 'father of epidemiology,' is most renowned for his groundbreaking application of epidemiological principles. In the 1800s, the prevailing belief was that diseases such as cholera were caused by miasmas—noxious gases in the air. However, Snow, a proponent of germ theory (which was not widely accepted at the time), argued that cholera might be spread through contaminated water, which he described as potentially harboring a 'poison.'

During the cholera epidemic in London in the 1850s, Snow carefully studied the water sources of the victims. His investigation revealed that many of the deceased had consumed water from a source contaminated with sewage.

Despite initial skepticism from critics, Snow persisted. When another cholera outbreak struck, he mapped the locations of the victims and discovered a significant cluster near a specific water pump. Interestingly, many individuals who didn’t live near the pump recalled drinking from it. In contrast, nearby neighborhoods with separate water sources remained unaffected. Snow persuaded officials to remove the pump handle, and the outbreak soon subsided. Unfortunately, the miasma theory continued to dominate scientific thought for some time thereafter.

Snow managed to convince only a small handful of people that his theory was correct. He passed away from a stroke in 1858, mostly unrecognized for his groundbreaking contributions. It wasn't until decades later that his work was rediscovered, leading to the establishment of an entire scientific field and securing his rightful place in medical history.

1. First Vaccination Program

We’ve previously highlighted the significance of the invention of vaccination and the incredible accomplishments it has enabled. One crucial element of vaccination is the concept of herd immunity: ensuring a sufficient number of people are immunized to halt the spread of diseases in a community. This not only protects the vaccinated but also shields those who cannot be immunized, such as infants or cancer patients. Achieving herd immunity typically requires a high vaccination rate, as much as ninety-five percent of the population for highly contagious diseases such as measles. Convincing so many people to take action requires a substantial effort, which is why many vaccination programs are enforced by governments.

In 1840, the United Kingdom enacted the 'Vaccination Act,' providing free vaccinations to the poor. In 1853, vaccination against smallpox became mandatory for all babies in their first three months, and in 1867 the requirement was extended to all children under fourteen. Those who failed to comply with the law could face fines or even imprisonment.

Although the use of mandatory vaccination played a significant role in eradicating smallpox, it also sparked the rise of the anti-vaccination movement. Many people viewed the requirement as an infringement on personal freedom, leading to riots across various parts of the UK. In 1898, the laws were adjusted to allow individuals to opt out on conscientious grounds. Nevertheless, the positive impact of vaccination programs on public health remains strongly backed by evidence.