Machines are rapidly advancing. They've reached a stage where they can teach themselves and make decisions autonomously. The results can be rather unsettling. Some machines dream, interpret thoughts from people’s brains, and even evolve into masters of art.

The more ominous capabilities are enough to prompt the creation of anti-AI devices, which are currently under development. Some AI systems exhibit traits of mental instability and bias, while others are deemed too hazardous to be released publicly.

10. Deepfakes

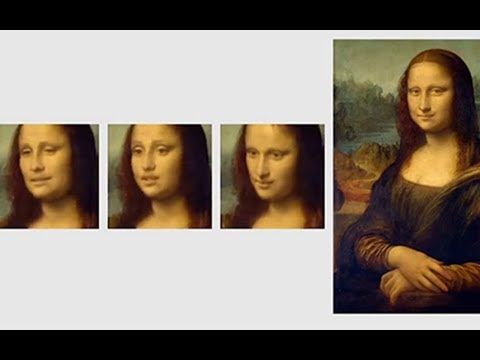

In 2019, a video surfaced on YouTube. It featured various segments of the iconic Mona Lisa, but this time, the painting was disturbingly animated. The woman’s head moved, her gaze shifted, and her lips silently engaged in conversation.

The Mona Lisa appeared more like a movie star being interviewed than a mere painting. This is a prime example of a deepfake. These animated portraits are generated using convolutional neural networks.

This type of AI interprets visual data in a manner similar to the human brain. It required immense effort to train the AI on the intricate details of human facial features, enabling it to transform a static image into a lifelike video.

The system had to first comprehend how facial features moved, a complex task in itself. As one researcher put it, a 3-D model of a face contains “tens of millions of parameters.”

The AI also gathered data from three real-life models to generate the videos. This explains why the woman resembles the Mona Lisa, but each video still carries subtle traces of the models’ distinct personalities.

9. AI Exhibits Bias

Combating prejudice is a difficult task. Anyone who has faced discrimination due to their appearance or beliefs can attest to this. Recently, a new dimension of this issue has emerged in the realm of AI.

Not long ago, researchers created a word-association test for GloVe (Global Vectors for Word Representation). This AI tool is widely used to represent words as numerical vectors. By the end of the experiment, even the scientists were taken aback.

They found every kind of bias they tested for in GloVe. For example, it associated more negative terms with the names of African-Americans and older people, and it linked women more strongly with family-related terms than with careers.

The reality is that society often subjects certain groups to more hardship—and AI systems reflect that. The key difference is that humans can recognize fairness and opt not to discriminate against others; computers lack this capacity.
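
A word-association test of the kind described above can be sketched in a few lines. This is only an illustration, not the researchers' actual method: the two-dimensional vectors and the placeholder names `name_x` and `name_y` are invented for the example, whereas real GloVe vectors typically have 50 to 300 dimensions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def association(word_vec, attrs_a, attrs_b):
    """Mean similarity to attribute set A minus mean similarity to set B."""
    mean_a = sum(cosine(word_vec, a) for a in attrs_a) / len(attrs_a)
    mean_b = sum(cosine(word_vec, b) for b in attrs_b) / len(attrs_b)
    return mean_a - mean_b

# Toy vectors invented for illustration; they do not come from GloVe.
toy_vectors = {
    "career": [1.0, 0.1],
    "salary": [0.9, 0.2],
    "family": [0.1, 1.0],
    "home": [0.2, 0.9],
    "name_x": [0.8, 0.3],  # hypothetical name that sits near the career words
    "name_y": [0.3, 0.8],  # hypothetical name that sits near the family words
}

career = [toy_vectors["career"], toy_vectors["salary"]]
family = [toy_vectors["family"], toy_vectors["home"]]

# Positive score: closer to career terms. Negative score: closer to family terms.
score_x = association(toy_vectors["name_x"], career, family)
score_y = association(toy_vectors["name_y"], career, family)
print(score_x, score_y)
```

A score far from zero means the word sits much closer to one attribute set than the other, which is how a bias inherited from the training text would show up.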

8. It Sleeps Like a Human

Sleep enhances cognition and rejuvenates the body. Studies also suggest that sleep helps neurons eliminate unnecessary memories formed during the day. This process is vital for brain health and might explain why sleep improves cognitive abilities.

In 2019, researchers embedded the concept of sleep into an artificial neural network (ANN) called a Hopfield network. Modeled after the sleeping mammalian brain, the ANN stayed 'awake' when online and went 'offline' to sleep.

Going offline didn’t mean the AI was powered down. By mimicking human sleep patterns mathematically, it experienced something akin to REM (rapid eye movement) and slow-wave sleep. REM is thought to consolidate significant memories, while slow-wave sleep eliminates irrelevant ones.

Remarkably, the ANN seemed to experience something like dreaming. While offline, it replayed everything it had learned throughout the day, waking up with enhanced memory capacity. When deprived of its 'nap,' the ANN's learning ability was drastically reduced, resembling the cognitive decline of a sleep-deprived human. This breakthrough could one day make sleep a compulsory feature for all AI systems.
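
For readers curious about what a Hopfield network does, here is a minimal sketch of the standard version, which stores patterns and restores them from a corrupted copy. It does not implement the sleep mechanism from the 2019 study; the patterns, function names, and network size are assumptions chosen for illustration.

```python
def train(patterns):
    """Hebbian rule: strengthen weights between units that agree in a pattern."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += p[i] * p[j] / n
    return weights

def recall(weights, state, sweeps=5):
    """Update units one at a time so the state settles on a stored memory."""
    state = list(state)
    for _ in range(sweeps):
        for i in range(len(state)):
            field = sum(w * s for w, s in zip(weights[i], state))
            state[i] = 1 if field >= 0 else -1
    return state

# Two illustrative memories made of +1/-1 units.
stored = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
weights = train(stored)

noisy = list(stored[0])
noisy[0] = -noisy[0]  # corrupt one unit of the first memory
restored = recall(weights, noisy)
print(restored == stored[0])
```

A network like this can hold only a limited number of patterns before recall degrades, which is the memory capacity that the offline phase described above is reported to improve.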

7. Anti-AI AI

Technology is advancing at an unprecedented rate, and one of the most alarming predictions suggests that our existence could be at risk from rogue AI. Not just any AI, but highly advanced, self-evolving systems.

In 2017, researchers from Australia managed to develop an anti-AI device in just five days. This device was designed to address a growing issue—AI systems masquerading as real humans. The wearable prototype alerts its wearer if they come across an artificial impostor.

The prototype was trained on synthetic voices and uses Google's TensorFlow, a machine learning library. When activated, the device captures all nearby voices and sends the data to TensorFlow for analysis.

When the system detects a human voice, it remains silent. But if it hears a synthetic voice, it sends a chill down the listener’s spine. The creators chose a subtle 'clone alert'—instead of a sound, a thermoelectric element cools the back of the neck.

6. Ai-Da

Ai-Da, a robot that’s taken the art world by storm, may be a nightmare for struggling artists. This machine produces intricate paintings, sculptures, and sketches. What makes it so extraordinary is that Ai-Da is teaching itself ever more complex and creative methods of expression.

Ai-Da, based in Oxford, is especially renowned for its works in the niche of shattered light abstraction, which are comparable to paintings created by some of the best abstract artists of today.

The machine has a variety of talents. It can talk, walk, and even hold a paintbrush or pencil. Notably, it became the first AI to hold its own art exhibition, with pieces that have been described as 'hauntingly beautiful.' The artworks explore themes such as politics and offer tributes to famous figures.

Ai-Da was 'born' in 2017, after being commissioned by art curator Aidan Meller. To bring the robot to life, Meller collaborated with a robotics firm in Cornwall and engineers in Leeds, who created the machine's brilliantly designed hand. What's fascinating is that even those who built it can't predict what Ai-Da will produce next or its true creative potential.

5. Facial Prediction From Voice Clips

In the future, making an anonymous phone call could become a thing of the past. There's an AI system that listens to a brief voice recording and then predicts what the person looks like.

Trained at the Massachusetts Institute of Technology (MIT), the AI learned to generate facial images from voices alone by studying online videos. Although the results were rough, they still bore a striking resemblance to the individuals they represented. In fact, the likenesses were so uncanny that they made the MIT team uneasy.

As with many new technologies, there's a risk that the voice-to-face AI could be misused. For example, someone participating in a phone interview might face discrimination based on their predicted appearance. On the other hand, this AI could also aid law enforcement by generating images of people making threatening calls, such as stalkers or kidnappers demanding ransom.

MIT is committed to maintaining an ethical approach in the training and future use of the AI system.

4. Norman

At MIT’s Media Lab, there exists a neural network like no other. Named Norman, this AI is truly unsettling. Unlike typical systems designed to make advancements in public sectors, Norman’s ‘mind’ has been consumed by dark thoughts, which are violent and disturbing in nature.

The researchers discovered this abnormality when they subjected the AI to inkblot tests. These tests, used by psychologists to gain insight into a person's mental state, revealed something shocking. While other AI systems saw harmless images, such as a bird and an airplane, Norman’s interpretation was much darker—he saw a man who had been shot and discarded from a car. Even more gruesome was his response to another inkblot, which he described as a man being pulled into a dough machine.

This abnormal behavior has been compared to the signs of mental illness, with some scientists even labeling it as ‘psychotic.’ However, the same elements that led Norman astray could potentially guide him back to a healthier state. Neural networks learn and refine their decisions with more data, so MIT decided to open an inkblot survey to the public, hoping Norman would correct himself through human feedback.

3. AI Is Turning Self-Aware

While artificial neural networks exhibit impressive skills, self-awareness remains a distinctly human characteristic that AI has long struggled to achieve. However, in 2015, that line began to blur. A robotics lab in New York put three off-the-shelf Nao robots to the test.

These small humanoid robots were told that a pill had silenced two of them. In reality, no one received any medication; instead, two of the robots were muted by a human pressing a button. The task was for the robots to figure out which one was still able to speak. At first none of them could work it out, and the one robot that still had a voice answered, 'I don’t know.'

It wasn’t until one robot realized it could still talk that the breakthrough came. It excitedly announced, ‘Sorry, I know now! I was able to prove that I wasn’t given a dumbing pill.’

The robot succeeded in the test, which, despite seeming simple, was far from easy. The Nao had to understand the human question, 'Which pill did you receive?' Upon hearing its own response, 'I don’t know,' it had to recognize the voice as its own and conclude that it had not been given the silencing pill.

This marked the first occasion that AI successfully solved the so-called wise-men puzzle, a test designed to measure self-awareness.

2. AI Too Dangerous For The Public

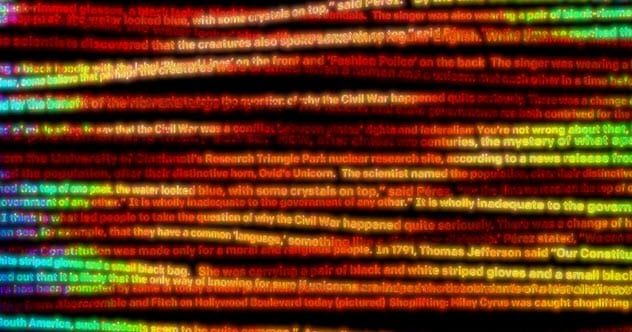

Its name might be uninspiring, but GPT-2 is considered so dangerous that its creators chose not to release the full version to the public. GPT-2 is a language prediction system. While it may sound mundane, in the wrong hands, this AI can be incredibly destructive.

GPT-2 was designed to generate original text based on a written example. Though not flawless, the AI’s output was often so convincing that it could produce realistic news articles, write essays on the Civil War, and even craft its own tales with characters from Tolkien’s The Lord of the Rings. All it required was a prompt from a human, and the creative process would begin.
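
The idea of language prediction can be illustrated with a toy model that counts which word tends to follow which and then extends a prompt one word at a time. This is not how GPT-2 works internally (GPT-2 is a large neural network); the tiny corpus and the function names are assumptions made up for this sketch.

```python
from collections import Counter, defaultdict

def build_model(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def generate(model, prompt, length=5):
    """Extend the prompt by repeatedly picking the most frequent next word."""
    out = prompt.lower().split()
    for _ in range(length):
        options = model.get(out[-1])
        if not options:  # the last word was never seen with a follower
            break
        out.append(options.most_common(1)[0][0])
    return " ".join(out)

# Tiny invented corpus; a real system learns from vast amounts of text.
corpus = "the ring was lost . the ring was found . the ring was lost again ."
model = build_model(corpus)
sentence = generate(model, "the", length=3)
print(sentence)  # the ring was lost
```

Given only the prompt "the", the model continues with the words it has seen most often in that position, which is the same prompt-then-continue pattern described above at a vastly smaller scale.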

In 2019, OpenAI, the nonprofit organization behind this cutting-edge AI, announced that only a limited version would be released to the public. The organization expressed concerns that the full system could exacerbate the fake news crisis, manipulate social media users, and impersonate real people.

1. AI Reads Words Inside The Brain

In 2018, three separate studies taught AI to translate brain waves into words. This required electrodes to be directly connected to the brain, a procedure not allowed for healthy individuals due to ethical concerns.

The scientists gained consent from volunteers who had undergone brain surgery for unrelated reasons. As some participants listened to audio files and others read aloud, a computer recorded their brain activity.

In the first study, the AI was trained using 'deep learning,' a method where a computer tackles problems with minimal human guidance. After the brain signals were converted into speech using a voice synthesizer, the words were accurately identified approximately 75 percent of the time.

The second study employed a combination of patterns produced by neuron activity and speech sounds to train the AI. The generated audio had a clarity comparable to a microphone recording.

For the third study, a different technique was used—focusing on the brain region responsible for turning speech into muscle movements. Listeners were able to comprehend the AI's speech 83 percent of the time.