While we deeply respect medical professionals for their expertise and their role in helping us combat various ailments, it's easy to overlook that doctors are human and just as prone to errors as anyone else. This was particularly evident in earlier times, when the diseases plaguing humanity led to some truly unusual and outlandish explanations from medical practitioners.

10. Illnesses Were Believed to Spread Through Night Air

The miasma theory, widely accepted during the Middle Ages, held that diseases like cholera, chlamydia, and the Black Death were caused by 'bad air' originating from decomposing organic material. This air was believed to be particularly harmful near swamps and at night, prompting people to stay indoors and keep their windows firmly closed.

In 1776, John Adams and Benjamin Franklin, two notable American figures, shared a room at a busy inn during their travels. Adams, who was unwell and fearful of the night air, closed the window. Franklin, however, persuaded him to reopen it. That Adams, an educated man and future president, believed night air was harmful shows how widely accepted the miasma theory was, even among the educated elite. Doctors and scholars supported this theory for centuries.

While the reasoning behind it was incorrect, keeping windows closed did offer some health benefits. It helped prevent malaria by keeping mosquitoes out, reduced exposure to autumnal fever-causing toxins, and minimized moisture that could chill the body.

By the latter half of the 19th century, the miasma theory was superseded by the germ theory.

9. Epilepsy Attributed to Divine Intervention

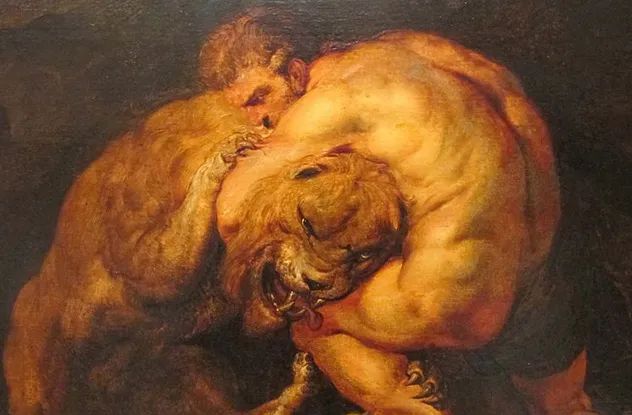

The ancient Greeks believed that epilepsy, a name derived from the Greek word epilambanein meaning 'to seize, possess, or afflict,' was caused by divine intervention. Often referred to as the 'sacred disease,' epilepsy had multiple names in Ancient Greece, including 'seliniasmos,' 'Herculean disease' (linked to the demigod Hercules), and 'demonism.'

Epilepsy was viewed as a form of miasma—a harmful or polluted 'bad air'—that affected the soul. It was seen as divine retribution for sinners and associated with Selene, the Moon goddess, as it was believed those who angered her would suffer from the condition.

The Greeks linked epilepsy to various gods based on the symptoms exhibited during seizures. For instance, if the person ground their teeth, it was attributed to Cybele, the nature goddess. If the sufferer screamed like a horse, Poseidon, the god of the sea and horses, was blamed. Treatments involved purification rituals and the chanting of healing verses.

8. Leprosy Attributed to Divine Punishment

During the Middle Ages, leprosy was widely believed to be a form of divine retribution. Those afflicted were thought to be suffering due to their sinful behavior and moral failings. This belief was reinforced by biblical stories where leprosy was depicted as a punishment from God. The disease was seen as both a physical ailment and a spiritual affliction, making lepers feared not only for their contagious condition but also for their perceived moral corruption, which the virtuous feared might spread.

Consequently, lepers endured harsh treatment in medieval times. They were ostracized, often required to wear bells to alert others of their presence, and sometimes even participated in their own funeral services, symbolizing their social death and exclusion from the community.

7. Colds Attributed to Accumulated Waste

Hippocrates, the ancient Greek physician often hailed as the father of medicine, was the first to challenge the notion that diseases were punishments from angry gods. He argued that illnesses stemmed from natural, earthly causes. His teachings were so impactful that doctors historically took the Hippocratic Oath, pledging to adhere to strict ethical principles.

Despite his groundbreaking ideas, Hippocrates also proposed some unusual theories, including the belief that colds resulted from excess waste material in the brain. He claimed that when this waste overflowed, it caused a runny nose. This theory gave rise to the Greek term catarrh, meaning 'flow,' which is still used in modern English to describe nasal discharge.

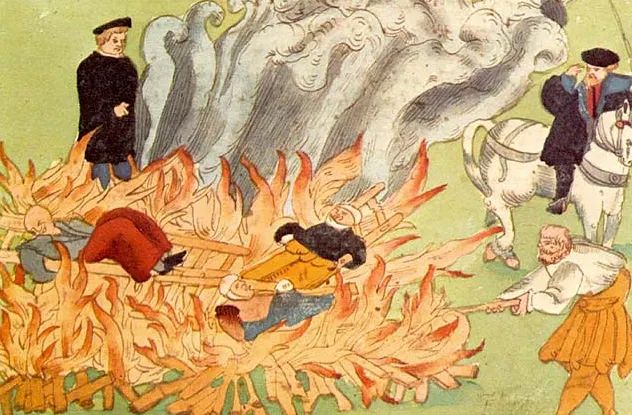

6. Mental Illness Linked to Witchcraft

During the Middle Ages, individuals with mental disorders were often believed to be cursed by witches or wizards, or possessed by demons. Exorcism was the most common treatment for such conditions. By the Renaissance, the preferred 'treatment' involved burning the body to supposedly save the soul trapped within.

In both the Middle Ages and the Renaissance, witches and demonic possession were blamed for many of humanity's misfortunes. Women were disproportionately accused of witchcraft, as they were thought to be more susceptible to demonic influence due to their perceived weaker nature. The uterus was considered a source of evil, and women were believed to be filled with venom during menstruation, which could allegedly spread contamination to others.

It was also thought that imagination could induce physical changes in the body, making it another form of witchcraft. The uterus was believed to absorb pathological images that could not be controlled, while the spleen was considered the primary source of such imaginative processes. Since women had both the uterus and the spleen as potential sources of pathological influence, they were seen as more powerful than men, who could only channel such effects through their spleen.

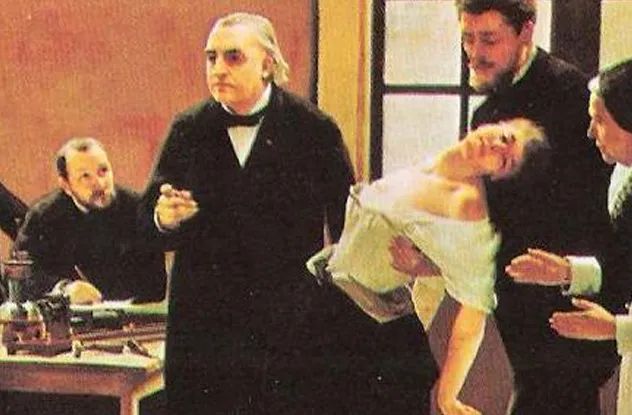

5. Hysteria Attributed to a Migrating Womb

In Ancient Greece, women experiencing mental illness were often diagnosed with hysteria, which Hippocrates attributed to a wandering womb. The Greek physician Aretaeus described the womb as capable of moving in all directions—up, down, left, and right. For instance, an upward shift was said to cause lethargy, weakness, and dizziness, while a downward shift could lead to choking sensations, loss of speech, and even sudden death.

To treat a wandering womb, doctors used pleasant fragrances like honey near the vagina to attract the womb back to its proper place. Conversely, foul odors were applied to repel the womb from the upper body. Other remedies included chewing garlic cloves, alternating hot and cold baths, regular sexual activity, and frequent pregnancies to keep the womb occupied and prevent it from wandering.

4. Porphyria Interpreted as Vampirism

Numerous myths about vampires originated in the Middle Ages. Today, it is thought that a rare genetic disorder called porphyria might have inspired these strange stories about 'creatures of the night,' rather than just the overactive imaginations of medieval peasants.

During the Middle Ages, limited scientific and medical understanding led to the misinterpretation of porphyria's symptoms as supernatural. Patients with porphyria are highly sensitive to sunlight, often avoiding outdoor exposure. When exposed, the sun can cause severe disfigurements to their hands, feet, or face, sometimes resulting in mutilated or distorted features. Noses, ears, or lips may recede or detach, and excessive hair growth can give them a wolf-like appearance, potentially inspiring werewolf legends.

Porphyria can also lead to erythrodontia, a red discoloration of teeth, and receding gums that may make the teeth resemble fangs. Garlic, which folklore holds to repel vampires, is said to exacerbate porphyria symptoms, causing pain and illness in patients.

Modern treatments for porphyria include injections of a blood product called 'heme.' In the Middle Ages, lacking such treatments, sufferers might have instinctively sought heme by biting others and drinking their blood. Siblings sharing the defective gene could unknowingly trigger the disease in each other through such actions, potentially fueling the myth that a vampire's bite turns victims into vampires.

3. Birth Defects Linked to Maternal Impressions

The theory of maternal impressions suggested that a pregnant woman's fears, desires, or intense emotions could profoundly influence her child's physical traits. This idea was widely accepted in the 18th century and often used to account for birth defects. For instance, a child born deaf might be attributed to the mother being startled by a loud noise during pregnancy. To ensure a cultured and healthy child, pregnant women were encouraged to engage in positive activities like visiting galleries and attending concerts.

The concept of maternal impressions is far older than the 18th century. The ancient Greek physician Galen believed that if a pregnant woman gazed at an image of a person, her child could come to resemble that person. As a result, mothers were encouraged to look at statues they admired to ensure their children were attractive.

It was also thought that a pregnant woman's mental state could cause vascular birthmarks and even determine their shape and location. For example, if a woman craved or consumed many strawberries during pregnancy, her child might have a strawberry-shaped birthmark.

The maternal impressions theory persisted through the Middle Ages, the Renaissance, and the 18th century. Although physician William Hunter challenged it in the mid-18th century, many continued to believe in its validity. The theory remained popular until the late 19th century, when it was finally discredited and abandoned.

2. Autism Attributed to Absence of Parental Affection

Autism was first identified by child psychiatrist Leo Kanner in a 1943 paper. Beyond describing the schizophrenia-like traits in children, Kanner heavily emphasized the role of parents in contributing to the condition.

Kanner studied a small group of children from educated families and concluded that their parents, though highly intelligent, were emotionally distant and formal. He argued that autistic children were raised in emotionally cold environments, lacking warmth from either parent. He even suggested that these parents were 'just happening to defrost enough to produce a child.' Kanner wasn't alone in blaming parents; psychoanalysts like Bruno Bettelheim also emphasized parental responsibility, leading to the 'refrigerator mother' theory. During the 1950s and 1960s, parents of autistic children not only faced the challenges of raising them but also carried the guilt of supposedly causing their condition.

In the early 1960s, the refrigerator mother theory faced criticism as parents of autistic children began to challenge it. Although Kanner eventually retracted his initial stance, figures like Bruno Bettelheim continued to support it. By the 1970s, the theory was largely abandoned, though a few adherents remain in Europe and places such as South Korea.

1. Ulcers Linked to Stress

William Brinton was among the first to describe stomach ulcers in 1857, but limited diagnostic tools made detection challenging. With no identifiable germ or causative agent, doctors turned to psychological and environmental factors. Poor diet, smoking, and stress were blamed for increasing acid levels, leading to ulcers. Doctors Arvey Rogers and Donna Hoel noted that 'a peptic ulcer was once a mark of success. Ambitious professionals were expected to develop one, and if they didn’t, perhaps they weren’t working or worrying hard enough.' Treatment typically involved antacids and lifestyle changes.

Patients with severe ulcers often became critically ill, requiring stomach removal or succumbing to fatal bleeding. Alarmed by these outcomes, physician Barry Marshall and pathologist Robin Warren collaborated in 1981 to uncover the true cause of ulcers. Two years prior, Warren had identified an overgrowth of Helicobacter pylori bacteria in the stomach. By examining ulcer patients and culturing samples, Marshall linked ulcers (and stomach cancer) to this bacterial infection, proposing antibiotics as the cure.

Skepticism persisted until Marshall, unable to test on mice or humans, ingested Helicobacter pylori himself. Within days, he developed gastritis, an early stage of ulcers, experiencing nausea, fatigue, and vomiting. A biopsy of his own stomach and a culture of the bacteria then confirmed the infection, supporting his case that ulcers were caused by Helicobacter pylori, not stress.