Could an excessive dependence on robots result in unforeseen dangers?

Paul Gilham/Getty Images

What will the world look like in 10 years? In 40 years? Will Moore's Law's progression eventually pave the way for a world governed by autonomous robots? Will we have triumphed over climate change and come together in celebration as we move closer to the much-anticipated singularity? Some futurists, those who specialize in this type of forecasting, have ventured these predictions, but others argue that these projections are flawed. In this article, we will explore some widely held beliefs about the future of technology that are likely to be myths.

Predicting future trends, particularly in a field that moves as fast as technology, is inherently uncertain, but informed speculation is possible. Reasonable people can disagree about how realistic some of these technologies are, but there is enough evidence available, especially from experts, to label them as myths.

Let's begin with one of the most iconic myths of the post-industrial era: the flying car.

5: The Day When Everyone Will Be Driving Flying Cars Is Coming Soon

The Skycar M400, designed for vertical takeoff and landing much like the Harrier Jet, will have an initial price tag of around $1 million.

Photo courtesy Moller International

The flying car has been a vision for decades. It's seen as one of the pinnacles of a futuristic, utopian world where people can soar through the skies and land with ease, quietly and safely, wherever they choose to go.

You’ve likely seen videos of flying-car prototypes taking off, hovering, and occasionally crashing. The idea is far from new: the first "autoplane" was introduced back in 1917, and many similar attempts have followed. In 1940, Henry Ford predicted that the flying car was coming, and there have been numerous false alarms since.

As we enter the second decade of the 21st century, it seems we’re no closer to the flying car reality, despite what gadget blogs may suggest. With funding drying up, NASA scrapped its contest for inventors to create a "Personal Air Vehicle," and no government agency, except possibly the secretive DARPA, seems willing to take on the challenge.

There are simply too many hurdles standing between us and a widespread flying car. Cost, air traffic paths and regulations, safety concerns, potential for misuse in terrorism, fuel efficiency, the need for pilot/driver training, landing, noise pollution, and opposition from the automobile and transportation sectors all make a legitimate flying car unfeasible. Additionally, these vehicles would likely need to function as cars on conventional roads, adding yet another obstacle.

In reality, many of the so-called flying cars currently being marketed as the real deal are merely roadable aircraft: hybrid vehicles that combine elements of both planes and cars but aren’t practical for everyday uses like driving kids to school. These vehicles are also prohibitively expensive. For example, the Terrafugia Transition, slated for release in 2011 or beyond, is expected to cost $200,000.

4: The Technological Singularity Is Coming

Ray Kurzweil is a leading figure in the singularitarian movement, believing that the singularity could usher in a utopian era.

Gabriel Bouys/AFP/Getty Images

In recent years, futurists like Ray Kurzweil have suggested that the singularity might be closer than we think, possibly arriving by 2030. There are varying ideas of what the singularity will entail. Some believe it’s the emergence of a true artificial intelligence that can rival human thought and creativity, where machines will not only surpass human intelligence but also become the dominant species on Earth, capable of creating even more advanced machines. Others argue it will involve such a dramatic leap in computing power that humans and machines will merge into something entirely new, perhaps through uploading our minds into a shared neural network.

Critics of the singularity, such as writer and academic Douglas Hofstadter, dismiss these ideas as "science-fiction scenarios" that remain speculative. Hofstadter argues that these concepts are too vague to be useful in discussions about what defines humanity and our evolving relationship with technology [source: Ross]. Moreover, there is little evidence to support the imminent arrival of the "tidal wave" of technological innovation that Kurzweil and other futurists predict [source: Ross].

Mitch Kapor, former CEO of Lotus, described the singularity as "intelligent design for the IQ 140 crowd" [source: O'Keefe]. One magazine even called it "the Rapture of the geeks"—not exactly a flattering comparison [source: Hassler]. Computer scientist Jeff Hawkins argues that while we may create machines of immense intelligence—far superior to anything we have today—true intelligence is rooted in "experience and training," not just sophisticated programming and processing power [source: IEEE].

Skeptics often point to the many sci-fi predictions from the past that still remain unfulfilled, such as the absence of moon bases or artificial gravity, to argue that the singularity is just another far-fetched dream. They also claim that understanding consciousness is beyond our grasp, let alone replicating it in machines. Additionally, the arrival of the singularity is heavily reliant on Moore's Law, which, as we explore later, could be at risk. (It’s worth mentioning that even Gordon Moore himself does not believe in the singularity [source: IEEE].)

3: Moore's Law Will Always Hold True

The real cause of the end of Moore's Law may be economic rather than scientific.

Lucidio Studio/Getty Images

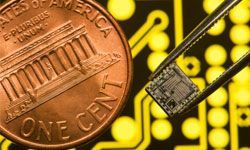

Moore's Law is typically understood to mean that the number of transistors on a chip, and thus its processing power, doubles every two years. However, Gordon Moore, who first proposed the law in 1965 and later co-founded Intel, was actually discussing the economic challenges of chip production, not the scientific breakthroughs driving advances in chip design.

Moore believed that the cost of chip production would decrease by half every year for the next decade, but warned that this trend might not be sustainable beyond that [source: Hickins]. The end of Moore's Law could thus be determined by economic factors rather than scientific limitations.
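
To get a feel for how quickly these two trends compound, here is a minimal Python sketch. It assumes the popular reading of Moore's Law (a doubling every two years) and the annual cost halving described above. The function names and the starting values of 1.0 are illustrative assumptions, not figures from Moore or from any chipmaker.

```python
def transistors_after(years, start_count=1.0, doubling_period_years=2.0):
    """Relative transistor count after `years` of steady doubling.

    Assumes the popular reading of Moore's Law: one doubling
    every `doubling_period_years` (two years by default).
    """
    return start_count * 2 ** (years / doubling_period_years)


def cost_after(years, start_cost=1.0, halving_period_years=1.0):
    """Relative production cost after `years` of steady halving.

    Assumes cost halves once every `halving_period_years`
    (one year by default, as in the paragraph above).
    """
    return start_cost * 0.5 ** (years / halving_period_years)


if __name__ == "__main__":
    # Print both trends side by side for a few time horizons.
    for years in (2, 10, 20):
        print(
            f"after {years:>2} years: "
            f"{transistors_after(years):,.0f}x transistors, "
            f"cost at {cost_after(years):.6f} of the original"
        )
```

Under these assumptions, a decade of doubling every two years yields 32 times as many transistors, two decades yields 1,024 times as many, and ten straight annual halvings would leave costs at 1/1,024 of where they started. Growth that steep is part of why Moore himself doubted the trend could last.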

Many leading computer scientists have argued that Moore's Law will not last more than two decades [source: IEEE]. Why is Moore's Law likely to fail? Because as transistors shrink in size, the cost of producing them has risen significantly.

One analyst has forecast that by 2014, transistors will shrink to 20 nanometers, but that any further reductions in chip size will become too costly for large-scale production [source: Nuttall].

For context, as of the summer of 2009, only Samsung and Intel had made significant investments in producing 22-nanometer chips.

Building the factories that produce these chips requires billions of dollars. For example, Globalfoundries' Fab 2 factory, which will begin production in New York in 2012, will cost an astounding $4.2 billion to construct. Only a handful of companies have the financial muscle for such ventures, and Intel has stated that a company must generate $9 billion in annual revenue to remain competitive in the high-end chip market [source: Nuttall].

The same analyst mentioned earlier believes that companies will continue to maximize the potential of existing technologies before making the costly switch to smaller, more advanced chip designs [source: iSuppli]. Therefore, while the end of Moore's Law may slow the pace at which we add transistors to chips, it doesn't necessarily imply that progress will halt in creating faster and more advanced computers through other innovations.

2: Robots Will Be Our Friends

While we're not likely heading toward a Skynet-like disaster, many scientists are growing increasingly concerned about whether proper safeguards are being established to protect us from the robots and digital systems we create.

One of the biggest concerns revolves around automation. Will military drones eventually be granted the authority to independently decide whether or not to strike a target? If a human operator is involved, will they still be able to overrule the drone’s decision? Will machines be allowed to self-replicate without human supervision? And will self-driving cars become a reality? (In fact, some vehicles already offer features like self-parking or lane departure warnings.)

Another issue is robots taking on roles they might not be suited for. There are already prototype medical robots designed to question patients about their symptoms and offer advice, even mimicking human-like emotions — a role traditionally filled by doctors. Microsoft has also implemented a video-based AI receptionist in one of its buildings. Furthermore, a new class of 'service robots' can plug into electrical outlets and handle menial tasks, alongside long-standing creations like the Roomba, an automated vacuuming robot.

There is a growing concern that we may be entrusting too many crucial tasks and responsibilities to non-human entities and becoming overly reliant on machines as a result. At a 2009 conference attended by computer scientists, roboticists, and other researchers, experts voiced concerns about how criminals might exploit advanced technologies like artificial intelligence to hack information or impersonate people [source: Markoff]. The consensus from this conference, and similar discussions, seems to be that it’s essential to address these issues now and establish industry standards, even if the specific technological advances of the future remain unclear.

Marshall Brain, the founder of HowStuffWorks, has expressed concern that the widespread adoption of robots in roles traditionally filled by humans could lead to massive job losses [source: Brain].

1: We Can Stop Climate Change

The retreating glaciers, such as those found in Canada’s Jasper National Park, are stark evidence of the ongoing effects of climate change.

George Rose/Getty Images

Is global warming an unavoidable reality? Many scientists agree that it is, to some degree, and that our focus should now be on preventing major disasters and mitigating the effects. Some of the world’s top climatologists argue that humanity has already crossed the point of no return [source: Borenstein]. In 2007, the UN Intergovernmental Panel on Climate Change, a body of over 2,000 scientists, issued a grim warning, noting that global temperatures had already begun rising as early as 2001.

The effects of climate change are already evident, from melting glaciers and rising sea levels to more intense cyclones in South Asia. These changes are expected to have particularly devastating consequences for hundreds of millions of people in the developing world [source: Kanter]. The island nation of Tuvalu now faces high tides that threaten to engulf the entire country.

Even if no more greenhouse gases were released from today onward, global temperatures would still rise by 1 degree Fahrenheit by mid-century due to the lingering carbon dioxide already in the atmosphere, which can remain there for decades [source: Borenstein]. Countries like Norway are attempting to address this by injecting CO2 into decommissioned underground oil wells. Moreover, a dangerous temperature rise of 3 to 6 degrees Fahrenheit by the end of the century remains a distinct possibility [source: Borenstein].

The critical question for many is whether it’s possible to limit the warming to avoid the most catastrophic outcomes. While encouraging grassroots environmental efforts is essential, international cooperation is crucial—and that has been slow to materialize, especially with key players like the United States, China, and India. Experts stress that we must also begin to plan for the inevitable warming-related disasters, such as assisting coastal regions, creating rapid-response teams for wildfires, and preparing for extreme heat waves.