Check out this video on Mytour to see Intel's shrinking microprocessor size. Science experts Adam Savage and Jamie Hyneman demonstrate how diminishing transistor sizes are enabling us to pack supercomputer-like performance into smaller, sleeker devices. PodTech Networks

As technology advances and we move away from personal computing, the idea of 'supercomputers' often conjures up images of massive Cray and IBM systems from the Baby Boomer era, complete with blinking lights, cranks, and levers. However, across the globe, large-scale parallel systems, some resembling these older supercomputers more than you might expect, are still being developed.

We're all familiar with Moore's Law, which holds that the number of transistors on a chip, and with it roughly the chip's computing power, doubles every 18 to 24 months. This principle extends beyond laptops and desktops; it shapes all of our electronic devices, from processing speed, sensor sensitivity, and memory to the pixels in our cameras or phones. Despite this rapid advancement, chips can only shrink so far before quantum mechanical effects interfere, leading some experts to suggest that Moore's Law may slow in the coming decade as we approach the limits of current materials [source: Peckham].
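To get a feel for what a doubling every 18 to 24 months adds up to, here is a minimal Python sketch. The function name `growth_factor` and the ten-year horizon are illustrative choices, not something from the article, and the calculation assumes the doubling pace holds steady for the whole period.

```python
def growth_factor(years, doubling_months):
    """Return how many times larger a quantity becomes after `years`
    if it doubles once every `doubling_months` months."""
    doublings = years * 12 / doubling_months
    return 2 ** doublings


# Compare the slow and fast ends of the 18-to-24-month range over a decade.
for months in (24, 18):
    factor = growth_factor(10, months)
    print(f"Doubling every {months} months: about {factor:.0f}x in 10 years")
```

At the slower pace a decade yields five doublings, or a 32-fold increase; at the faster pace the same decade yields roughly a 100-fold increase, which is why the exact doubling period matters so much over long horizons.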

Our phones and tablets showcase the incredible shrinking of computing power into portable devices, but this is only the tip of the iceberg. The real action is behind the scenes, where the "cloud" requires faster data processing and computation to keep up with the ever-growing volume of data we consume. From streaming high-def movies to accessing real-time weather, traffic, and satellite data, supercomputers remain at the heart of this data-driven future.

5: Exaflops and Beyond!

The miniaturization of chip components is just part of the equation. On the flip side, we have the mighty supercomputers: custom-made for raw power. In 2008, IBM's Roadrunner smashed the petaflop milestone, achieving one quadrillion operations per second [source: IBM]. (FLOPS stands for "floating-point operations per second," a key metric used to evaluate supercomputers, particularly for scientific computations like those discussed here.)

In scientific terms, a petaflop represents 10^15 operations per second. An exaflop system — projected to arrive by 2019 — will perform at 10^18, a thousand times faster than the current petaflop systems [source: HTNT]. As of June 2011, the top 500 supercomputers worldwide collectively fell short of 60 petaflops in total power. Looking ahead, zettaflops will take us to 10^21 operations per second by 2030, and yottaflops will push the limit further at 10^24 [source: TOP500].
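The jumps between these prefixes are easier to appreciate with a little arithmetic. The sketch below is only an illustration: the table `FLOPS_PER_UNIT` and the helper names are made up for this example, and the 16.32-petaflop figure is the Sequoia score quoted elsewhere in this article.

```python
# Operations per second for each prefix mentioned in the text.
FLOPS_PER_UNIT = {
    "petaflop": 10**15,
    "exaflop": 10**18,
    "zettaflop": 10**21,
    "yottaflop": 10**24,
}


def speedup(faster, slower):
    """How many times faster one unit is than another."""
    return FLOPS_PER_UNIT[faster] // FLOPS_PER_UNIT[slower]


def seconds_needed(operations, machine_flops):
    """Time for a machine of the given speed to finish a fixed workload."""
    return operations / machine_flops


# One second of work for an exaflop machine, run on a one-petaflop machine.
workload = FLOPS_PER_UNIT["exaflop"] * 1
print(speedup("exaflop", "petaflop"))                         # 1000
print(seconds_needed(workload, FLOPS_PER_UNIT["petaflop"]))   # 1000.0 seconds

# A 16.32-petaflop machine expressed as a share of one exaflop.
share = 16.32 * FLOPS_PER_UNIT["petaflop"] / FLOPS_PER_UNIT["exaflop"]
print(f"{share:.2%}")
```

In other words, a job that an exaflop system finishes in one second would keep a one-petaflop system busy for nearly 17 minutes.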

So, what do these colossal numbers mean in practical terms? For one, experts predict that by 2025, we'll be able to simulate the human brain in its entirety. By around 2030, zettaflop computers should be capable of accurately forecasting the global weather system up to two weeks in advance.

Since 1993, the TOP500 project has been the go-to resource for tracking supercomputing trends. Every six months, the project releases an updated list of the world's most powerful supercomputers, ranked by their performance on the Linpack benchmark, which measures how quickly a system solves a dense system of linear equations. Each system's specifications and intended uses are also included.
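The idea behind a Linpack-style measurement can be sketched in a few lines of Python. This is a toy illustration, not the benchmark code that TOP500 systems actually run: it solves a small random system with plain Gaussian elimination, times the solve, and divides a conventional operation count of roughly (2/3)n³ + 2n² by the elapsed time. The function names and the matrix size are assumptions made for this example.

```python
import random
import time


def solve_dense(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    a = [row[:] for row in a]  # work on copies so the inputs stay intact
    b = b[:]
    for col in range(n):
        # Swap in the row with the largest entry in this column for stability.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(a[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (b[row] - tail) / a[row][row]
    return x


def linpack_style_score(n, seed=0):
    """Return (estimated FLOPS, worst residual) for one n-by-n solve."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1, 1) for _ in range(n)]
    start = time.perf_counter()
    x = solve_dense(a, b)
    elapsed = max(time.perf_counter() - start, 1e-9)  # avoid dividing by zero
    operations = (2 / 3) * n**3 + 2 * n**2
    residual = max(
        abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )
    return operations / elapsed, residual


flops, residual = linpack_style_score(60)
print(f"about {flops / 1e6:.1f} megaflops, residual {residual:.1e}")
```

Interpreted Python on a single core will land many orders of magnitude below a petaflop, which gives a sense of how much parallel hardware sits behind the scores on the list.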

In 2012, at the International Supercomputing Conference in Hamburg, Germany, a U.S. supercomputer reclaimed the top spot for the first time since 2009: IBM's Sequoia, housed at the Department of Energy's Lawrence Livermore National Laboratory, posted a Linpack score of 16.32 petaflops [source: Perry]. Sequoia dethroned Japan's K Computer, which had posted a score exceeding 10 petaflops after overtaking China's Tianhe-1A a year earlier [source: TOP500].

4: Green Supercomputers

Cooling fans for CPUs are a common feature, as computers often spend more than half their energy just managing their internal temperature. Engineers working on supercomputers are continuously searching for more efficient ways to maintain a cooler system.

©iStockphoto/Thinkstock

All that power comes at a price. If you've ever experienced a failed heat sink causing your desktop to crash, or had a laptop that became uncomfortably hot on your lap, you understand the trade-off: computers consume a lot of energy that generates heat. One of the major hurdles for supercomputer developers is finding ways to operate these powerful systems without risking hardware failures or contributing to environmental degradation. After all, weather simulations are used to track carbon emissions and temperature variations, so it would be counterproductive to exacerbate the very issues climatologists are trying to solve!

A computer system is only as effective as its performance under stress, so keeping those hot circuits cool is essential. In fact, more than half of the energy consumed by supercomputers is directed toward cooling. As the future of supercomputing intertwines with other leading-edge sciences and progressive agendas, ecological concerns are becoming a critical issue for engineers in high-performance computing [source: Jana]. Green solutions and energy efficiency are integral parts of every supercomputer project, and Sequoia's impressive energy efficiency was a key factor in the excitement surrounding IBM's debut of the supercomputer.
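A rough calculation shows why the cooling share matters so much. The Python sketch below is a simplified model, not a description of any real installation: it assumes every watt not spent on cooling goes to computation, and the 5-megawatt compute load is a purely hypothetical figure chosen for illustration. The 16.32-petaflop score is the Sequoia result quoted earlier in the article.

```python
def total_power_watts(compute_watts, cooling_fraction):
    """Total draw when `cooling_fraction` of all energy goes to cooling.

    Simplifying assumption: everything that is not cooling is computation.
    """
    if not 0 <= cooling_fraction < 1:
        raise ValueError("cooling_fraction must be in [0, 1)")
    return compute_watts / (1 - cooling_fraction)


def flops_per_watt(flops, compute_watts, cooling_fraction):
    """Performance per watt measured against the total power draw."""
    return flops / total_power_watts(compute_watts, cooling_fraction)


SCORE_FLOPS = 16.32e15   # Linpack score quoted in the article
COMPUTE_WATTS = 5e6      # hypothetical compute load, for illustration only

for fraction in (0.5, 0.2):
    total_mw = total_power_watts(COMPUTE_WATTS, fraction) / 1e6
    gflops_w = flops_per_watt(SCORE_FLOPS, COMPUTE_WATTS, fraction) / 1e9
    print(f"cooling {fraction:.0%}: {total_mw:.2f} MW total, "
          f"{gflops_w:.2f} gigaflops per watt")
```

Under these assumptions, cutting the cooling share from half to a fifth of the total lowers the power bill from 10 MW to 6.25 MW for the same computing work, which is the kind of gain the cooling techniques described next are aiming for.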

From 'free air' cooling, which essentially means finding ways to bring outside air into the system, to hardware designs that maximize surface area, scientists are pushing innovation not only for speed but for efficiency. One particularly fascinating concept that several teams are experimenting with is submerging the system in a thermally conductive (but electrically insulating) liquid that absorbs heat and is then pumped through the structure housing the computer banks. This method of heating water and rooms while simultaneously cooling the equipment has broad applications beyond supercomputer sites, and the projects featured on the TOP500 list are taking these ideas very seriously.

Addressing ecological concerns and improving efficiency isn't just beneficial for the planet; it's essential for the machines to function. While this may not be the most glamorous aspect of the research, it's crucial for the future we're promised – futuristic trends and all!

3: The Artificial Brain

Looking ahead to 2025-2030, supercomputers are expected to map the human brain [source: Shuey]. A 1996 estimate from a Syracuse University scientist suggested our brain's memory capacity ranges from one to 10 terabytes, most likely around three terabytes [source: MOAH]. The comparison isn't perfect, since our brains don't function like computers, but the promise is that computers could replicate our brain's processes within the next 20 years!

Just as supercomputers are helping map the human genome, predict medical issues, and find solutions to genetic diseases, accurate models of the brain will revolutionize diagnosis, treatment, and our understanding of human thought and emotion. With advanced imaging tech, doctors could identify problem areas, simulate treatments, and solve questions that have long stumped humanity. Implantable chips could help regulate brain chemicals like serotonin to address mood disorders, and severe brain injuries may be reversed with these advancements.

In addition to medical breakthroughs, there's the exciting realm of artificial intelligence (AI). Even with current computing power, we already have some impressive AI: think algorithm-driven personalized recommendations for movies, books, and music, or virtual assistants like Siri. But true AI, with human-like mental complexity, would take things further. Picture a WebMD that interacts like a doctor, providing expert-level answers. This concept could expand far beyond healthcare, creating virtual experts who can assist with anything you need in a relaxed, conversational style.

2: Weather Systems and Complex Models

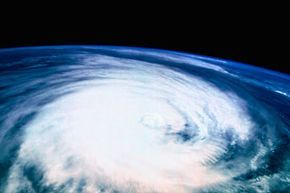

As supercomputers continue to evolve, their ability to generate predictive weather models will skyrocket. Rather than waiting for weather events like storms to unfold and then analyzing the resulting data, we will be able to forecast such events long before they occur.

By 2030, it is hoped that zettaflop supercomputers will have the capability to simulate the Earth's weather systems with near-perfect accuracy, offering predictions at least two weeks in advance [source: Thorpe]. These simulations will cover the entire planet and its ecosystems, delivering both local and global forecasts at the touch of a button. While this may seem like a niche application, the scale of this data is vast and has the potential to revolutionize how we interact with weather forecasting.

Earth's climate is one of the most complex systems we can hope to understand, so intricate that it's often used to illustrate chaos theory: "Does the flap of a butterfly's wings in Brazil set off a tornado in Texas?" asked Philip Merilees in 1972 (though the idea of meteorological complexity traces back to 1890 with Poincaré) [source: Wolchover]. When viewed in this light, it's hard to imagine any system more intricate on a global scale than the weather patterns we rely on every day.
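The butterfly question is about sensitivity to initial conditions, and a small experiment makes it concrete. The Python sketch below uses the Lorenz equations, a classic three-variable toy model of atmospheric convection, with their standard textbook parameters. It is an illustration chosen for this example and not a method described in the article; the starting point, step size, and function names are all assumptions. Two simulations begin a hundred-millionth of a unit apart and are stepped forward together.

```python
import math


def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations for state (x, y, z)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one time step with fourth-order Runge-Kutta."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz(state)
    k2 = lorenz(shifted(state, k1, dt / 2))
    k3 = lorenz(shifted(state, k2, dt / 2))
    k4 = lorenz(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_history(a, b, dt=0.01, steps=4000):
    """Distance between two trajectories before each step and at the end."""
    history = []
    for _ in range(steps):
        history.append(math.dist(a, b))
        a = rk4_step(a, dt)
        b = rk4_step(b, dt)
    history.append(math.dist(a, b))
    return history


# Two starting points that differ by one part in a hundred million.
history = separation_history((1.0, 1.0, 1.0), (1.0 + 1e-8, 1.0, 1.0))
print(f"initial gap: {history[0]:.1e}")
print(f"largest gap: {max(history):.1f}")
```

The gap starts far too small to measure in practice, yet it grows until the two runs bear no resemblance to each other. Real forecasts face the same problem at planetary scale, which is why longer-range prediction demands both better measurements and vastly more computing power.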

The impact of weather forecasting extends far beyond personal concerns like vacations or weddings. It plays a crucial role in food production, large-scale scientific endeavors like space missions or polar expeditions, and even in preemptive disaster relief, saving lives and enabling us to respond proactively to natural events.

Weather systems are just the starting point. Once we can replicate weather patterns with precision, we can take the leap into modeling other vast and intricate systems. Tomorrow’s supercomputers, built from silicon and powered by electricity, could bring entire planets and worlds to life.

1: Simulated Worlds

Many of us are familiar with online multiplayer gaming, and recall when virtual worlds like 'Second Life' were extremely popular. Virtual realities have been a fascination for at least a century. With the rise of supercomputing, these virtual spaces are poised to serve purposes far beyond just entertainment.

While we are certain to see astonishing progress similar to 'Second Life' and 'The Matrix', the more thrilling and practical application of this technology lies in data overlays in our everyday lives. Guided tours, live GPS navigation, and online reviews are just the beginning. Imagine accelerating weather simulations, incorporating unpredictable factors like human behavior, and testing ideas in civil engineering, city design, and food security.

Supercomputers will not have to make assumptions, although they'd excel at it. They can analyze information from every conceivable source, from trending social media posts to traffic data and energy consumption, creating real-time models that not only manage current conditions but plan for the future. Problems like power outages, fuel shortages, and congestion during major events like the Olympics will soon be a thing of the past.

As WiFi Internet becomes ubiquitous, set to replace 4G connectivity across the globe, the immersive simulated world of the future will soon mirror our reality—only improved. It will be more insightful, tailored to our needs, and most importantly, it will empower both individuals and societies to utilize information to its fullest potential. And all of this dynamic and transformative power will be made possible by the cutting-edge supercomputers we're only beginning to develop.