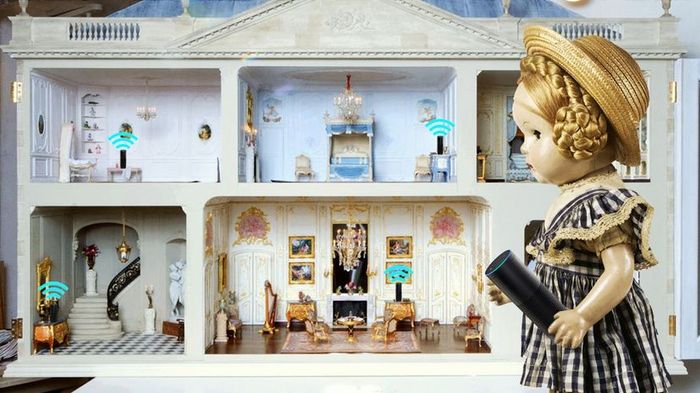

Alexa, Siri, and other AI-powered personal assistants are steadily integrating into our daily routines. But what happens when these devices go off-script? Barcroft Media/Amazon/De Agostini/G. Cigolini/Getty Images

We're living in the age of the AI assistant. Personalities like Siri, Cortana, Alexa, and others are becoming integral to our lives, typically helping us with information and tasks. However, sometimes they misbehave and do things we didn't expect, like purchasing a dollhouse.

This is exactly what happened with Amazon's Alexa, a personal assistant found on the Amazon Echo and similar devices, according to reports from San Diego, California. During a recent broadcast on CW6 News, the anchors discussed a story about a 6-year-old girl from Texas who used her parents' Echo to order a dollhouse and 4 pounds (1.8 kilograms) of cookies. That's when things took a turn.

While the news anchors covered the story, they unintentionally activated several Echo devices in viewers' homes.

The devices in question dutifully processed an order for a dollhouse. The dollhouse market briefly surged. It remains unclear how many, if any, of those orders were completed as actual purchases.

This amusing incident highlights the difficulties companies face when designing voice-activated digital assistants. Amazon's approach is to enable online orders through Alexa by default, which makes sense from Amazon's standpoint. While people can change their Alexa device settings to require verification before purchasing, the onus for this adjustment lies with the user, not Amazon.

I can confirm that Google Home occasionally responds to television sounds as well. I own a Google Home, and it has interrupted me a few times while I was watching TV. Thankfully, it hasn't placed any orders. Given that I often watch 'It's Always Sunny in Philadelphia,' I’m particularly relieved. The worst I've encountered is Google Home objecting to what it thought I said.

A more serious concern from this story is the realization that these devices are constantly listening to their environment. They must listen in order to respond when you issue a command. Questions remain about how much of our conversations are stored in these devices’ memory and how secure they are from potential hacking. It's easy to imagine an unsecured AI assistant becoming a microphone that records everything happening in your home. Wired published a fascinating article on this topic in December, if you’re interested in further reading.

In the future, we might see more companies adopt default authentication measures to prevent accidental purchases or other unintentional actions. For instance, it would be awkward if your AI assistant booked a taxi every time it heard someone on TV do so. Alternatively, voice recognition technology may improve to allow these devices to distinguish between their owners’ voices and those of others.

For now, it's wise to do some research on any voice-activated device before incorporating it into your life. For some, the privacy risks may feel too overwhelming. For others, a few unexpected dollhouse orders might seem like a small price for the convenience of digital assistance.