A computer's operating system enables it to manage multiple processes simultaneously. gilaxia/Getty Images

When you power up your computer, it might feel like you're the one in command. The mouse is at your disposal, ready to bring up your music library or web browser with a simple click. While you might feel like you're the director behind the screen, there's a lot more happening beneath the surface, with the operating system silently managing the complex tasks.

Microsoft Windows runs the majority of computers used for both professional and personal tasks. Mac computers come with macOS pre-installed. Linux and UNIX are favored for digital content servers, but many of their distributions are becoming increasingly popular for day-to-day computing. No matter which one you pick, without an operating system, you won’t get very far.

Other devices run their own unique operating systems. As of the 2020s, Google's Android and Apple's iOS dominate the smartphone OS market, although several manufacturers have created their own, generally based on Android. Apple ships iPads with iPadOS, Apple Watches with watchOS, and Apple TV runs tvOS. Additionally, numerous other devices, such as Internet of Things gadgets, smart TVs, and car infotainment systems, all operate on their own tailored OS. And that's just scratching the surface, as self-driving cars require even more sophisticated systems.

An operating system's primary role is to organize and manage both hardware and software to ensure that the device operates in a manner that is both adaptable and consistent. In this article, we'll explain what qualifies as an operating system, delve into how the OS of your desktop functions, and explore ways to take control of the various operating systems around you.

What Is an Operating System?

A Windows 11 logo appears on a smartphone screen with a Microsoft website in the backdrop. Windows remains one of the most widespread operating systems.

Pavlo Gonchar/SOPA Images/LightRocket via Getty Images

Not every computer needs an operating system. For instance, the computer inside your microwave oven doesn't require one. It has a specific task to perform, with a simple interface (a keypad and a few preset buttons) and basic, unchanging hardware to manage. In this case, a complex operating system would be an unnecessary burden, significantly increasing both development and manufacturing costs while complicating something that doesn't need to be complicated. Instead, the microwave's computer is an embedded system: it runs a single, permanent program at all times.

For more advanced devices, an operating system provides the capability to:

- Fulfill a range of functions

- Engage with users in more sophisticated ways

- Adapt to evolving needs

All desktop computers require an operating system. The most prevalent include the Windows series developed by Microsoft, the Macintosh systems developed by Apple, and the UNIX family, created by a long list of individuals, corporations, and collaborators. In addition, there are hundreds of other operating systems designed for specialized applications, including mainframes, robotics, manufacturing, real-time control systems, and more.

Any device with an operating system typically allows users to modify its functionality. This is no mere coincidence; one reason operating systems are built from modifiable software rather than fixed hardware circuits is so they can be changed or updated without discarding the entire device.

For a desktop user, this means you can install a security update, apply a system patch, add a new application, or even upgrade to an entirely new operating system without the need to toss out your computer and buy a new one just to make changes. As long as you understand how the operating system functions and how to interact with it, you can often modify its behavior in various ways.

No matter which device an operating system powers, what exactly can it do?

Operating System Functions

At its core, an operating system does two main things:

- It handles the management of hardware and software resources within the system. In computers, tablets, and smartphones, these resources include the processors, memory, disk space, and more.

- It provides a stable, uniform interface for applications to interact with the hardware, without needing to know all the intricate details of the hardware itself.

The operating system governs every task your computer performs and allocates system resources to enhance overall performance.

The first task, managing hardware and software resources, is crucial because various programs and input methods compete for the attention of the central processing unit (CPU) and demand memory, storage, and input/output (I/O) bandwidth. In this role, the operating system acts as a mediator: it ensures each application receives the resources it needs, keeps applications from interfering with one another, and makes the most of the system's limited capacity for the benefit of all users and applications.

The second task, providing a consistent application interface, becomes particularly important when multiple computers run the same operating system or when the computer's hardware is subject to change. A consistent application programming interface (API) lets developers write an application on one computer and trust that it will work on other machines of the same type, even if the memory or storage capacity differs between them.

Even on a one-of-a-kind computer, an operating system helps ensure that applications continue to function as the hardware is upgraded and updated. This is because the operating system, not the application, is responsible for managing the hardware and allocating its resources. A key challenge for OS developers is keeping operating systems flexible enough to support hardware from the thousands of vendors that manufacture computer components. Modern systems can handle thousands of printers, disk drives, and other peripherals in virtually any combination.

Types of Operating Systems

This privacy notice is found on an iPhone 12 running the iOS 14.5.1 operating system. iOS is the operating system that powers Apple's iPhones. Christoph Dernbach/picture alliance via Getty Images

Operating systems are categorized into several types depending on the kinds of computers they control and the types of applications they support. These categories include:

- Real-time operating system (RTOS) - Real-time operating systems are used to control machinery, scientific instruments, and industrial systems. Typically, an RTOS has minimal user-interface capabilities and lacks end-user utilities because the system is delivered as a "sealed box." The key function of an RTOS is to manage computer resources so that a given operation takes a predictable, consistent amount of time every time it occurs. In complex machines, having a part move faster just because resources happen to be available could be as disastrous as having it not move at all because the system is overloaded.

- Single-user, single task - As the name suggests, this type of operating system is meant for one user performing one task at a time. MS-DOS is a classic example.

- Single-user, multitasking - This is the type of operating system commonly used on desktop and laptop computers today. Examples include Microsoft Windows and Apple’s macOS, which allow a single user to run multiple applications at once. For instance, a Windows user could write a document, download a file, and print an email, all simultaneously.

- Multiuser - A multiuser operating system lets many users share a computer’s resources at the same time. The system ensures that the needs of all users are met and that each program has the necessary resources without one user’s activities affecting the others. Unix, VMS, and mainframe operating systems like MVS are examples of multiuser operating systems.

- Distributed - These operating systems manage several computers simultaneously. Rather than relying on one powerful computer, distributed operating systems divide large tasks across multiple smaller computers. You can find such systems in massive server farms, but hobbyists and educators also build their own distributed systems with inexpensive machines and even repurposed gaming consoles.

It’s important to distinguish between multiuser operating systems and single-user systems that enable networking. For example, in an office where a system administrator dictates which software can or cannot be installed on your computer, you are using a single-user system connected to a network. You might print documents on a shared printer or access a file server where your department's files are stored.

With various types of operating systems in mind, let’s now explore the fundamental functions that an operating system provides.

Computer Operating Systems

When you power on a computer, the first set of instructions executed is typically stored in the computer's firmware, often called the boot ROM. On a typical PC, this is either the basic input/output system (BIOS) or, on newer machines, the unified extensible firmware interface (UEFI). The firmware checks the system's hardware to confirm everything is working correctly and, in the case of UEFI with Secure Boot enabled, verifies that the boot software is properly signed and trusted. Once these checks pass, the firmware proceeds with the boot process.

The bootstrap loader, also called the boot loader, is a small program with one job: loading the operating system into memory to initiate its operation. Essentially, the bootstrap loader configures small driver programs that connect and control various hardware subsystems. It organizes memory to hold the operating system, user data, and applications, while establishing the necessary data structures—signals, flags, and semaphores—that facilitate communication within and between the computer's subsystems and applications. Afterward, it hands control over to the operating system.

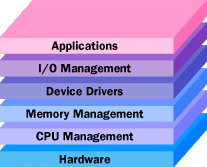

The operating system’s tasks can generally be divided into several categories:

- Processor management

- Memory management

- Device management

- Storage management

- Application interface

- User interface

- System security management

These core tasks are central to nearly every operating system. Now, let’s dive into the specific tools the operating system employs to execute each of these functions.

Processor Management

At the core of processor management lie two key challenges:

- Ensuring that every process and application gets sufficient processor time to function efficiently

- Maximizing processor cycles for meaningful tasks

The fundamental unit that the operating system manages while scheduling tasks for the processor is the process. Applications include at least one process, and each process contains at least one thread. Threads execute segments of the process's code, and operating systems oversee even these smaller units, providing the necessary resources to ensure proper functioning.
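To make the process/thread relationship concrete, here is a minimal Python sketch. It starts several threads inside one running process and records the process ID each thread sees alongside the thread ID the operating system assigned to it. The helper name `sample_thread_ids` is hypothetical, and `threading.get_native_id` requires Python 3.8 or later.

```python
import os
import threading


def sample_thread_ids(n_threads=3):
    """Run n_threads threads inside this one process; return (pid, native thread id) pairs."""
    results = []
    lock = threading.Lock()
    # The barrier keeps every thread alive at the same moment, so the
    # thread IDs we record cannot be reused and are guaranteed distinct.
    barrier = threading.Barrier(n_threads)

    def worker():
        barrier.wait()
        with lock:  # protect the shared list from concurrent appends
            results.append((os.getpid(), threading.get_native_id()))

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    for pid, tid in sample_thread_ids():
        print(f"process {pid}, thread {tid}")
```

Every line printed shares one process ID, because the threads all belong to the same process, while each thread reports its own ID.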

It’s easy to equate a process with an application, but this oversimplifies the relationship between processes, the operating system, and the hardware. What you see as an application (whether it's a word processor, a spreadsheet, or a game) is a process, but that application might trigger several additional processes, such as those handling communication with other devices or computers. Many processes also run invisibly in the background. For example, an operating system may have numerous processes operating silently to manage network connectivity, memory, disk, virus scans, and more.

A process, therefore, is a software component that performs a task and can be controlled—either by the user, by other applications, or by the operating system itself.

It is processes, not applications, that the operating system controls and schedules for execution by the CPU. In a system that runs a single task at a time, the scheduling process is fairly simple. The operating system lets the application run and only pauses execution briefly to handle interrupts and user input.

Interrupts are special signals sent to the CPU by hardware or software. An interrupt is like a participant in a meeting suddenly raising a hand to get the CPU's attention. Sometimes the operating system prioritizes a process by masking certain interrupts, meaning it ignores them temporarily so it can finish a particular task as quickly as possible. However, some interrupts, such as those caused by serious hardware errors or memory problems, are so critical that they can't be ignored. These non-maskable interrupts (NMIs) must be addressed immediately, no matter what other tasks are in progress.
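The difference between masked and non-maskable interrupts can be modeled in a few lines. This is a toy with hypothetical names: a real interrupt controller is hardware, and masking is done through processor instructions or controller registers. In the sketch, while interrupts are masked, ordinary interrupts wait in a queue and are serviced afterward, but a non-maskable one is handled at once.

```python
from collections import deque


class InterruptController:
    """Toy model: maskable interrupts can be deferred; non-maskable ones cannot."""

    def __init__(self):
        self.masked = False
        self.pending = deque()  # maskable interrupts held while masked
        self.handled = []       # order in which interrupts were serviced

    def raise_interrupt(self, name, maskable=True):
        if maskable and self.masked:
            self.pending.append(name)  # defer until the critical task finishes
        else:
            self.handled.append(name)  # NMIs (or unmasked interrupts) are serviced at once

    def mask(self):
        self.masked = True

    def unmask(self):
        self.masked = False
        while self.pending:  # deliver deferred interrupts in arrival order
            self.handled.append(self.pending.popleft())


if __name__ == "__main__":
    ic = InterruptController()
    ic.mask()                                   # OS enters a critical section
    ic.raise_interrupt("keyboard")
    ic.raise_interrupt("memory-error", maskable=False)
    ic.raise_interrupt("timer")
    ic.unmask()                                 # critical section done
    print(ic.handled)  # ['memory-error', 'keyboard', 'timer']
```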

In a single-tasking system, interrupts can complicate the process execution, but in a multitasking system, the operating system's task becomes even more complex. It must manage the execution of applications in such a way that you perceive several tasks happening at once. This is a challenge because each CPU can handle only one task at a time. Even though modern multicore processors and multiprocessor systems can handle more work, each core still manages only one task at any given time.

To create the illusion of simultaneous tasks, the operating system must frequently switch between processes thousands of times per second. Here's how the system makes this work:

- Each process occupies a certain amount of RAM and makes use of registers, stacks, and queues (types of storage) within both the CPU and the operating system's memory.

- When two processes are running concurrently, the operating system allocates a specific number of CPU cycles to one process.

- Once those cycles are up, the operating system saves the state of the registers, stacks, and queues used by the process, noting where the process left off.

- The operating system then loads the state of the second process, including its registers, stacks, and queues, and gives it a set number of CPU cycles.

- When those cycles are finished, the operating system saves the second process's state and reloads the first process to continue its execution.
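The save-and-restore cycle in the steps above can be sketched as a toy round-robin scheduler. This is an illustration under simplifying assumptions, not how any real kernel is written: each hypothetical `Process` object's `program_counter` and `work_left` fields stand in for the registers, stacks, and queues a real OS would save, and the fixed `quantum` stands in for the allotted CPU cycles.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Process:
    pid: int
    work_left: int            # CPU cycles this process still needs
    program_counter: int = 0  # stands in for the saved register state


def round_robin(processes, quantum):
    """Simulate time slicing on one CPU; return the (pid, cycles run) slices in order."""
    ready = deque(processes)
    timeline = []
    while ready:
        proc = ready.popleft()              # "restore" this process's saved state
        ran = min(quantum, proc.work_left)  # run for at most one time slice
        proc.program_counter += ran         # progress is kept with the process
        proc.work_left -= ran
        timeline.append((proc.pid, ran))
        if proc.work_left > 0:
            ready.append(proc)              # "save" state and rejoin the back of the queue
    return timeline
```

With two processes needing 5 and 3 cycles and a 2-cycle quantum, `round_robin([Process(1, 5), Process(2, 3)], quantum=2)` alternates between them and returns `[(1, 2), (2, 2), (1, 2), (2, 1), (1, 1)]`.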

Process Control Block

To track the state of each process during these switches, the operating system stores the relevant information in a structure known as a process control block. This block typically holds:

- An ID number that uniquely identifies the process

- References to the program and data locations where the process was last active

- Contents of the registers

- Status of various flags and switches

- References to the upper and lower memory bounds required by the process

- A list of files currently opened by the process

- The process's priority level

- The status of all I/O devices that the process relies on
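The fields listed above map naturally onto a record type. Here is a hypothetical Python layout, a sketch rather than any real kernel's structure (Linux's equivalent, `task_struct`, is a much larger C struct). The field names are illustrative assumptions, and `in_bounds` shows how the stored memory limits let the OS check whether an address belongs to the process.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessControlBlock:
    """Hypothetical PCB layout mirroring the fields listed above."""
    pid: int                                        # unique process ID
    program_counter: int = 0                        # where the process was last active
    registers: dict = field(default_factory=dict)   # saved register contents
    flags: dict = field(default_factory=dict)       # status flags and switches
    memory_lower: int = 0                           # lowest address the process may use
    memory_upper: int = 0                           # highest address the process may use
    open_files: list = field(default_factory=list)  # files currently open
    priority: int = 0                               # scheduling priority
    io_status: dict = field(default_factory=dict)   # state of the I/O devices it relies on

    def in_bounds(self, address):
        """True if the address falls inside this process's memory region."""
        return self.memory_lower <= address <= self.memory_upper
```

Using `field(default_factory=...)` gives every PCB its own lists and dictionaries, so one process's open files never leak into another's record.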

Each process is associated with a specific status. Many processes do not consume any CPU time until they receive some input. For example, a process may be waiting for a user keystroke. While waiting, it doesn't require any CPU time and is considered to be in a "suspended" state. Once the keystroke is received, the operating system updates its status. The process's status may change from pending to active, or from suspended to running, and the operating system uses the information in the process control block to manage the task-switching operations accordingly.
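The status changes described above can be modeled as a small state machine. This sketch uses three hypothetical state names: SUSPENDED for a process waiting on input, READY for one whose input has arrived and that is waiting its turn (the text's "pending"), and RUNNING for one currently executing (the text's "active"). Real operating systems track more states, and the exact set varies from system to system.

```python
from enum import Enum


class State(Enum):
    SUSPENDED = "suspended"  # waiting for input such as a keystroke; uses no CPU time
    READY = "ready"          # input has arrived; waiting for a turn on the CPU
    RUNNING = "running"      # currently executing on a CPU


# Transitions this toy model allows.
ALLOWED = {
    State.SUSPENDED: {State.READY},                 # the awaited input arrives
    State.READY: {State.RUNNING},                   # the scheduler dispatches it
    State.RUNNING: {State.READY, State.SUSPENDED},  # time slice ends, or it waits again
}


def transition(current, new):
    """Return the new state, or raise ValueError for a transition the model forbids."""
    if new not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current.name} -> {new.name}")
    return new
```

A keystroke-driven process would go SUSPENDED to READY when the key arrives, then READY to RUNNING when the scheduler picks it; jumping straight from SUSPENDED to RUNNING is rejected because the process must first wait its turn.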

This process swapping occurs automatically, without requiring user interaction. Each process is allocated enough CPU time to complete its task within a reasonable time. Problems arise when the user tries to run too many processes simultaneously. The operating system itself requires CPU cycles to manage the saving and swapping of registers, queues, and stacks for all running processes.

Each process requires a designated memory allocation, but the operating system must maintain a balance. As more applications are opened, each application is allocated less memory to function. If too many processes are initiated and the operating system hasn't been optimized, the system may use an excessive amount of CPU cycles for swapping processes instead of actually running them. This issue, known as thrashing, typically requires user intervention to stop unnecessary processes and restore order. It's akin to trying to juggle too many tasks at once. Once you reach your limit, it becomes overwhelming. That’s what thrashing feels like for a computer.
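A common way to demonstrate thrashing is to simulate memory paging, swapped in here as an illustration. The sketch below assumes RAM holds 16 page frames, uses least-recently-used (LRU) replacement, and has each hypothetical process touch 4 pages of its own in turn. While the combined working set fits in RAM, only the first touch of each page misses; once it no longer fits, nearly every memory reference has to go to disk, so the system spends its time moving data instead of running programs.

```python
from collections import OrderedDict


def fault_rate(reference_string, frames):
    """Fraction of memory references that miss RAM under LRU replacement."""
    resident = OrderedDict()  # pages in RAM, least recently used first
    faults = 0
    for page in reference_string:
        if page in resident:
            resident.move_to_end(page)        # hit: mark as most recently used
        else:
            faults += 1                       # miss: page must come from disk
            if len(resident) >= frames:
                resident.popitem(last=False)  # evict the least recently used page
            resident[page] = True
    return faults / len(reference_string)


def cycling_workload(n_processes, pages_each=4, rounds=50):
    """Hypothetical workload: processes take turns touching their own pages."""
    refs = []
    for _ in range(rounds):
        for p in range(n_processes):
            refs.extend((p, i) for i in range(pages_each))
    return refs


if __name__ == "__main__":
    for n in range(2, 7):
        print(n, "processes:", fault_rate(cycling_workload(n), frames=16))
```

With these assumptions the fault rate stays at 0.02 for two to four processes, then jumps to 1.0 at five, when 20 pages compete for 16 frames. That cliff, rather than a gradual slowdown, is what makes thrashing so noticeable.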

Developers design systems to avoid thrashing, but you can help by adding more RAM to your system and closing unused applications. This will allow your operating system to better manage resources and ensure smoother performance.

So far, all our scheduling discussions have focused on a single CPU. In systems with multiple CPUs, however, the operating system must distribute the workload among the available processors, balancing the demands of processes against the cycles each processor can provide. Asymmetric operating systems reserve one processor for their own tasks and divide application processes among the remaining CPUs. Symmetric operating systems spread the workload across all processors, balancing demand and availability even when the operating system is the only program running. The processors in such a system share the available memory. The same concept extends to multiple processor cores on a single chip.
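The balancing act of a symmetric system can be approximated with a classic greedy heuristic: hand each job to whichever CPU currently has the least work, placing larger jobs first. This is a simplified sketch, not the scheduler of any particular OS. The job names and costs are hypothetical, and real schedulers rebalance continuously as processes start, block, and finish.

```python
import heapq


def assign_to_cpus(job_costs, n_cpus):
    """Greedy balancing: give each job to whichever CPU currently has the least work."""
    heap = [(0, cpu) for cpu in range(n_cpus)]  # (total load, cpu id)
    heapq.heapify(heap)
    placement = {cpu: [] for cpu in range(n_cpus)}
    # Placing the biggest jobs first tends to give a more even split.
    for job, cost in sorted(job_costs.items(), key=lambda kv: -kv[1]):
        load, cpu = heapq.heappop(heap)  # least-loaded CPU so far
        placement[cpu].append(job)
        heapq.heappush(heap, (load + cost, cpu))
    return placement
```

For example, `assign_to_cpus({"browser": 5, "editor": 2, "music": 3, "backup": 4}, 2)` returns `{0: ["browser", "editor"], 1: ["backup", "music"]}`, giving each CPU a load of 7.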

Depending on the computer and operating system you use, you may already be utilizing symmetric processing right now.

Even when the operating system is the only software that needs to run, the processor is not the only resource it must coordinate. Memory management is the next critical factor in keeping all processes running smoothly.

Memory Storage and Management

IT expert Mario Haustein is seen working with a Linux-based operating system at the computer center of the Technical University in Chemnitz, Germany, on March 8, 2017. Photo by Jan Woitas/picture alliance via Getty Images.

When the operating system is tasked with managing the computer's memory, it must address two major responsibilities:

- Each process needs sufficient memory to run, ensuring that it does not overlap with or get interfered with by another process.

- The various memory types within the system must be allocated effectively so each process can function optimally.

The first responsibility requires the operating system to establish memory boundaries for software types and individual applications.

For illustration, let’s consider a hypothetical small system with 1 megabyte (1,000 kilobytes) of RAM. During the startup sequence, the operating system allocates memory for itself. Imagine that it requires 300 kilobytes to run. Afterward, the system accesses the lower portion of the RAM pool and assigns 200 kilobytes to the necessary driver software to manage the hardware components. As a result, once the operating system is fully loaded, 500 kilobytes of memory remain for running application processes.

When applications are loaded into memory, the operating system allocates memory to them. As a new application opens, the system reclaims some of the memory from other running applications to ensure the new one has enough resources. Once that’s settled, the bigger concern is what happens when the 500-kilobyte space for applications is completely occupied.
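The arithmetic of the example (1,000 KB minus 300 KB for the OS and 200 KB for drivers leaves 500 KB) and the idea of per-application boundaries can be sketched together. This toy allocator is an assumption, not any real OS's algorithm: it gives each application a contiguous, non-overlapping region using first-fit placement. Instead of reclaiming memory from running applications, it simply returns `None` when no gap is large enough, which is the full 500 KB situation just described.

```python
TOTAL_KB = 1000    # hypothetical 1 MB machine from the example
OS_KB = 300        # memory the OS reserves for itself
DRIVERS_KB = 200   # memory reserved for driver software
APP_SPACE_KB = TOTAL_KB - OS_KB - DRIVERS_KB  # 500 KB left for applications


class AppMemory:
    """First-fit allocator for the application region; each app owns [base, base + size)."""

    def __init__(self, size_kb=APP_SPACE_KB):
        self.size = size_kb
        self.regions = {}  # app name -> (base, size)

    def allocate(self, app, size_kb):
        base = 0
        # Walk existing regions in address order, looking for a big-enough gap.
        for start, length in sorted(self.regions.values()):
            if start - base >= size_kb:
                break
            base = start + length
        if base + size_kb > self.size:
            return None  # no room left in the application space
        self.regions[app] = (base, size_kb)
        return base

    def free(self, app):
        del self.regions[app]
```

Allocating 200 KB for an editor and 250 KB for a browser leaves only 50 KB, so a 100 KB request fails until the editor exits and frees its region at offset 0.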

In most systems, it's possible to expand memory beyond its initial limits; upgrading a computer's RAM from 8 to 16 gigabytes, for example, is a common change. Even so, not all of the data an application keeps in memory is in active use. At any given moment, the processor is working with only a small part of memory, so much of the data in RAM sits idle. The operating system takes advantage of this by moving data that isn't currently needed out to disk storage and bringing it back into RAM when a process asks for it, while still keeping each process within its own designated space. This method is known as virtual memory management.

Disk storage is just one type of memory that the operating system has to handle, and it is the slowest. When comparing memory types by speed, the hierarchy looks like this:

- High-speed cache: This refers to small, quick-access memory that the CPU uses through the fastest connections. Cache controllers anticipate which data the CPU will need next and preload it from the main memory into the high-speed cache to optimize system performance.

- Main memory: This is the RAM, usually measured in gigabytes, that you see when purchasing a computer.

- Secondary memory: Typically a hard disk drive (HDD) or solid-state drive (SSD). The operating system can use part of this storage as an extension of RAM, known as virtual memory.

The operating system must effectively manage the demands of various processes while balancing the available memory types. It moves data around in chunks called pages to different memory locations as the process schedule requires.
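Paging works because the OS keeps a page table mapping each virtual page to a physical frame in RAM. The following is a minimal sketch, assuming 4 KiB pages and a plain dictionary for the table; many real processors use multi-level tables that the memory management unit walks in hardware. A missing entry raises a page fault, which is the OS's cue to bring that page in from secondary memory.

```python
PAGE_SIZE = 4096  # 4 KiB pages, assumed for this sketch


class PageFault(Exception):
    """Raised when a page is not in RAM and must be loaded from secondary memory."""


def translate(virtual_addr, page_table):
    """Map a virtual address to a physical one using a {page number: frame number} table."""
    page, offset = divmod(virtual_addr, PAGE_SIZE)  # split into page number and offset
    frame = page_table.get(page)
    if frame is None:
        raise PageFault(f"page {page} is not resident")
    return frame * PAGE_SIZE + offset  # same offset, inside the physical frame
```

With the table `{0: 5, 1: 2}`, virtual address 4100 (page 1, offset 4) translates to 2 × 4096 + 4 = 8196, while any address on page 3 raises `PageFault`.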

Device Management

The connection between the operating system and most hardware, except for what's directly on the computer's motherboard, passes through specialized programs known as drivers. Drivers primarily serve as translators between the electrical signals of the hardware subsystems and the high-level programming interfaces that the operating system and applications use. For example, a driver takes data that the operating system has defined as a file and converts it into a stream of bits directed to specific locations on a storage device, or into the laser pulses a printer uses.

Given the wide variety of hardware, driver programs vary in function. Most of them activate when the device is called upon and perform similarly to other processes. To ensure that hardware is ready for immediate use, the operating system often prioritizes drivers, assigning them high-priority tasks that allow resources to be released quickly and prepared for the next use.

One of the key reasons drivers exist separately from the operating system is that they allow new functionalities to be added to hardware subsystems without needing to modify, recompile, or redistribute the operating system itself. Many drivers are developed or purchased by the hardware manufacturers rather than the operating system publishers, giving the manufacturers the ability to update and improve the system’s input/output capabilities.
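The separation between the operating system and its drivers can be sketched as a common interface with device-specific implementations. Everything here is hypothetical: the `Driver` base class, the two toy devices, and `os_write` only illustrate the idea that the OS makes the same call no matter which driver sits behind it, so a new device needs only a new driver.

```python
from abc import ABC, abstractmethod


class Driver(ABC):
    """What the OS expects of every driver: accept bytes, handle them device-specifically."""

    @abstractmethod
    def write(self, data: bytes) -> int:
        """Send data to the device; return the number of bytes accepted."""


class RamDiskDriver(Driver):
    """Hypothetical driver that 'stores' bytes in a bytearray standing in for a disk."""

    def __init__(self):
        self.blocks = bytearray()

    def write(self, data):
        self.blocks.extend(data)
        return len(data)


class LinePrinterDriver(Driver):
    """Hypothetical driver that turns bytes into printed lines."""

    def __init__(self):
        self.printed = []

    def write(self, data):
        self.printed.extend(data.decode("ascii").splitlines())
        return len(data)


def os_write(driver: Driver, data: bytes) -> int:
    """The OS side: the identical call works whatever hardware is behind the driver."""
    return driver.write(data)
```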

Operating system developers also write device drivers themselves. While the companies behind Windows and macOS are more likely to keep their drivers up to date, Linux and other open-source OSes often depend on volunteers from the community. These developers donate their time and expertise to create drivers for various systems and peripherals, helping to ensure that a wide range of hardware works.

The management of input and output mainly involves handling queues and buffers, which are specialized storage systems that receive a stream of bits from a device — such as a keyboard or a serial port — temporarily hold them, and then release them to the processor at a rate it can handle. This is especially critical when multiple processes are running simultaneously, consuming processor time. The operating system instructs the buffer to continue gathering input from the device but to pause sending data to the processor while the associated process is suspended. Once the process becomes active again, the operating system prompts the buffer to send the accumulated data. This system allows a keyboard or modem to manage high-speed communication with external users or computers, even when the processor can't handle the input continuously.
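The buffering behavior described above can be sketched as a small queue that always accepts device input but releases it only while the reading process is active. The class and method names are hypothetical, and dropping the oldest data when the buffer overflows is an assumed policy; real systems choose among several.

```python
from collections import deque


class InputBuffer:
    """Collects device input continuously; releases it only while the reader is active."""

    def __init__(self, capacity=64):
        self.queue = deque(maxlen=capacity)  # oldest items drop if the buffer overflows
        self.reader_active = False

    def device_input(self, item):
        self.queue.append(item)  # the device can always deposit data

    def set_reader_active(self, active):
        self.reader_active = active  # the OS flips this on suspend / resume

    def drain(self):
        """Hand buffered data to the process, but only if it is currently running."""
        if not self.reader_active:
            return []
        data = list(self.queue)
        self.queue.clear()
        return data
```

Keystrokes typed while the process is suspended accumulate in the queue; when the OS marks the process active again, one `drain()` call delivers them all in order.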

Managing all of the computer system's resources is a large part of an operating system's job, and in real-time operating systems it may be almost the entire function. For other operating systems, however, providing a straightforward and consistent way for applications and users to tap into the power of the hardware is a core part of their purpose.

Application Program Interfaces

Much like drivers offer a pathway for applications to utilize hardware subsystems without needing to understand the specifics of their operation, application program interfaces (APIs) allow programmers to access the functions of the computer and operating system without getting bogged down in the minutiae of how the CPU works. To illustrate the importance of this, let’s consider a scenario where a programmer is tasked with creating a hard disk file for storing data.

A programmer developing an application to record data from a scientific instrument might want to give the user the option to name the created file. The operating system might offer an API function called MakeFile for file creation. The programmer would insert a line of code in the program like the following:

MakeFile [1, %Name, 2]

In this example, the command directs the operating system to create a file that supports random access to its data (indicated by the 1; alternatively, 0 might be used for a serial file), allows the user to define the file's name (%Name), and sets its size to vary based on the amount of data stored within it (indicated by the 2; alternatives might include zero for a fixed-size file or 1 for a file that expands as data is added but does not shrink when data is removed). Now, let’s explore how the operating system turns this instruction into action.

The operating system sends a request to the disk drive to determine the location of the first available empty storage spot.

Using this information, the operating system creates an entry within the file system, specifying the starting and ending locations of the file, its name, type, archived status, user permissions for viewing or modifying the file, as well as the date and time when the file was created.

Because the programmer wrote the application to use the API for disk storage, they don't need to track the specific instruction codes, data types, and response codes for every type of hard disk or tape drive. The operating system, working with the drivers for the various hardware components, handles the ever-changing details of the hardware. The programmer only needs to write code against the API and rely on the operating system to manage the rest. However, APIs can also give attackers openings to exploit an application for their own ends and potentially gain unauthorized access to the system. This doesn't mean APIs are inherently bad, but developers must take care to avoid vulnerabilities and fix them promptly when they are found.
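For a real-world counterpart to the hypothetical MakeFile, Python's `os.open` passes through to the operating system's own file-creation call (`open()` on POSIX systems). The sketch below creates a file, writes to it, and reads back metadata the file system recorded for the new entry. `create_and_describe` is a hypothetical helper; the reported permissions depend on the process's umask, and POSIX `fstat` reports a last-modification time rather than a portable creation time.

```python
import os
import stat
import tempfile


def create_and_describe(directory, name, payload):
    """Create a new file through the OS's file API and return what the file system recorded."""
    path = os.path.join(directory, name)
    # O_CREAT | O_EXCL asks the OS to create the file and fail if it already exists.
    fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY, 0o644)
    try:
        os.write(fd, payload)
        info = os.fstat(fd)  # metadata the file system keeps for this entry
    finally:
        os.close(fd)
    return {
        "size": info.st_size,
        "is_regular_file": stat.S_ISREG(info.st_mode),
        "permissions": oct(stat.S_IMODE(info.st_mode)),
        "modified": info.st_mtime,
    }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        print(create_and_describe(d, "readings.dat", b"21.5,21.7,21.6\n"))
```

The program never deals with disk sectors or drive commands; the operating system and its drivers find free space and build the file system entry.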

APIs have become one of the most hotly contested territories in the computer industry over the past few years. Companies recognize that when developers build on their APIs, the companies that own those APIs can ultimately gain control of, and profit from, a specific segment of the market. Companies also know that offering applications, such as readers or viewers, for free can drive consumers to adopt their software, and they often expect other developers to pay royalties to integrate that software's functionality. Many companies, however, make their APIs publicly available for free.

User Interface

Just as an API provides a standardized way for applications to access system resources, a user interface (UI) structures the interaction between the user and the computer. Over the last decade, most advancements in UI have focused on the graphical user interface (GUI), with macOS by Apple and Windows by Microsoft leading both the development and market share.

Most (but not all!) Linux distributions feature a GUI. For those that do, the organization responsible for the release typically selects the desktop environment for the system. However, Linux users have the option to change the environment to suit their preferences. Some of the popular desktop environments for Linux include Cinnamon, GNOME, KDE, and Xfce.

UNIX is often linked with a command line interface (CLI), or shell, which provides more flexibility and power than a GUI. A shell interface is text-based and requires the user to type commands, which can be intimidating to those accustomed to graphical, point-and-click interfaces. The Korn Shell and the C Shell are text-based interfaces that introduce valuable utilities, but their main function is to simplify user interaction with the operating system's commands. Nevertheless, UNIX users can also employ a GUI if they prefer. One advantage for developers is the ability to open multiple shell windows simultaneously to work on various tasks at once.

Windows, macOS, and Linux all provide shell or terminal applications for users who wish to interact with a command line interface.

It’s essential to remember that the user interface in these systems is a set of programs that acts as a layer above the core operating system. The fundamental functions of an operating system — including the management of the computer system — reside in the kernel of the operating system. The relationship between the operating-system kernel and the user interface, utilities, and other software components is what distinguishes different operating systems and will continue to shape their evolution.

Operating System Development

For desktop systems, network access has become such a standard feature that it's nearly impossible to discuss an operating system without mentioning its connectivity to other computers and servers. Operating system developers now commonly use the internet as the primary means to deliver critical updates and bug fixes. While it's still possible to receive these updates via DVD or flash drive, this method has become increasingly rare.

One of the key questions about the future of operating systems revolves around whether a particular model of software distribution can create an operating system that serves both corporate and consumer needs effectively.

Linux, the operating system built and distributed according to the principles of open source, has made a profound impact on the landscape of operating systems. Most software, including operating systems, drivers, and utilities, is developed by commercial companies that distribute only executable versions of their programs; this closed-source software typically can't be inspected or modified. Open source, on the other hand, requires sharing the original source code so it can be studied, modified, and improved, with those changes then freely distributed. In desktop computing, this model has led to the creation and widespread availability of many valuable applications, such as the image editing program GIMP, the popular office suite LibreOffice, and the widely used Apache web server.

Many consumer devices, like cell phones, intentionally hide access to the operating system from the user, largely to prevent accidental tampering or deletion. However, they often provide access through a hidden "developer's mode" or "programmer's mode", which allows modifications if you manage to find it. These systems are often designed to limit the extent of changes that can be made, restricting users to a narrow range of adjustments.