Gordon Moore, co-founder of Intel, is the Moore behind Moore's Law.

Photo by AP/Paul Sakuma

In 1965, Electronics magazine published an article by Dr. Gordon E. Moore, then the research and development director at Fairchild Semiconductor. Moore's article was titled 'Cramming more components onto integrated circuits.' He observed that semiconductor companies, such as Fairchild, were able to double the number of components on a square inch of silicon every 12 months.

This process represents a form of exponential growth. A chip made in 1964 with a square-inch (6.5 square-centimeter) size would have half as many components, such as transistors, as a chip produced in 1965. Moore predicted that this trend would persist until chip makers eventually reached fundamental barriers preventing further advancement.
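The doubling described above can be written as a simple formula. As a sketch, let $N_0$ be the number of components on a chip in some starting year, $t$ the time elapsed, and $T$ the doubling period (12 months in Moore's 1965 observation). These symbols are introduced here for illustration and are not taken from Moore's article.

$$N(t) = N_0 \cdot 2^{t/T}$$

With $T = 1$ year, $N(1) = 2N_0$, so a 1965 chip carries twice the components of a 1964 chip, and ten years of doubling multiplies the count by $2^{10} = 1024$.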

Moore's observation was based on two key factors: technological progress and the economics of mass production. In order for his prediction to hold true, we need to continuously innovate and find new ways to fit smaller components onto chips. However, it's equally crucial that the manufacturing process remains financially viable, or there won't be a way to sustain future advancements.

Today, we refer to Moore's observation as Moore's Law. Despite its name, it is not a literal law. There isn't a universal principle that dictates how powerful an integrated circuit will be at any given point. Nevertheless, Moore's Law has evolved into a sort of self-fulfilling prophecy, as chip manufacturers have been striving to match Dr. Moore's predictions from 1965. Whether driven by pride or competition, companies like Intel have invested billions in research and development to stay ahead.

So, is the nearly half-century-old observation still applicable today?

Quantum Leaps

This is an early model of the first transistor, a precursor to today's microprocessors, which now contain millions or even billions of transistors.

Image credit: AP Photo/Paul Sakuma

Every year, it seems, technology experts and journalists predict that Moore's Law is reaching its end. The components in modern microprocessors are so tiny that they are measured at the nanoscale, so small that individual elements are invisible even under a powerful light microscope. At this size, physics starts to behave differently, and quantum mechanics takes over from classical physics. It’s a strange world.

One of these strange phenomena is quantum tunneling. Imagine an electron not as a particle with a fixed position but as a wave. The electron’s probable location varies across the wave, resembling a bell curve. The thin tails of the curve represent places where the electron might be, but the middle is where it is most likely to be found.

When this wave approaches a barrier, like a thin insulating gap between two conductors, part of the wave may extend past the barrier and reach the other conductor. This means there is a small chance that the electron will appear on the other side of the gap. The thinner the barrier, the more likely the electron is to tunnel right through it.
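To get a feel for how strongly tunneling depends on the size of the gap, here is a minimal sketch using the standard textbook approximation for a rectangular barrier, where the chance of tunneling falls off as exp(-2κd). The function name, the 1 eV barrier height and the sample widths are illustrative assumptions, not figures from any real transistor.

```python
import math

# Physical constants in SI units.
HBAR = 1.054571817e-34            # reduced Planck constant, J*s
ELECTRON_MASS = 9.1093837015e-31  # kg
EV = 1.602176634e-19              # joules per electron-volt


def tunneling_probability(barrier_width_nm, barrier_height_ev=1.0):
    """Approximate chance that an electron tunnels through a rectangular barrier.

    barrier_height_ev is how far the barrier rises above the electron's
    energy. The 1 eV default is an assumption chosen for illustration.
    """
    # Decay constant of the electron's wave inside the barrier, in 1/m.
    kappa = math.sqrt(2 * ELECTRON_MASS * barrier_height_ev * EV) / HBAR
    width_m = barrier_width_nm * 1e-9
    return math.exp(-2 * kappa * width_m)


# Hypothetical barrier widths, from thicker to thinner.
for width in (5.0, 2.0, 1.0):
    print(f"{width:.0f} nm barrier: {tunneling_probability(width):.2e}")
```

Under these assumptions, the probability rises by many orders of magnitude as the barrier shrinks from 5 nanometers to 1 nanometer, which is why leakage becomes harder to ignore as transistors get smaller.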

In the context of a microprocessor, this is problematic. You can think of a microprocessor as a complex network of roads for electrons. Transistors act as gates that regulate the flow of traffic. A closed gate should block electrons from passing through, but as the gates shrink to meet Moore's Law, issues like electron tunneling arise. This electron leakage can cause errors in calculations, leading the microprocessor to produce incorrect results.

Over time, engineers have developed new methods for designing transistors at the nanoscale that reduce the effects of quantum tunneling. These include using alternative materials within transistor gates and building three-dimensional gates. Such innovations have helped companies maintain progress in line with Moore's Law. However, another reason Moore's Law persists is that we continuously adjust how we define it.

Redefining a Law

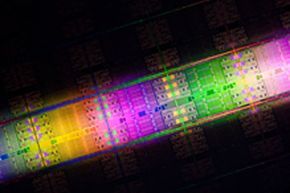

The Intel Xeon E7 processor family die features up to 10 cores and 2.6 billion transistors.

Courtesy Intel

When Moore's Law was first introduced, it referred to a specific concept: the number of distinct components on an integrated circuit doubles every 12 months. Today, we slightly adjust that timeline; industry experts often say it's every 18 to 24 months. And now, the focus isn't just on the number of components on a chip.
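The difference between a 12-month and a 24-month doubling period may sound small, but it compounds quickly. The following sketch compares the three timelines mentioned above over a single decade. The function name and the ten-year window are assumptions chosen for illustration.

```python
def growth_factor(years, doubling_months):
    """Return how many times larger a quantity becomes after `years`
    if it doubles once every `doubling_months` months."""
    doublings = years * 12 / doubling_months
    return 2 ** doublings


# Compare the original 12-month pace with the 18- and 24-month versions.
for months in (12, 18, 24):
    print(f"Doubling every {months} months: "
          f"about {growth_factor(10, months):,.0f}x in 10 years")
```

Stretching the doubling period from 12 to 24 months cuts a decade's growth from roughly a thousandfold to thirty-twofold, so the choice of timeline changes the prediction far more than the wording suggests.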

A common way to express Moore's observation is to say that, over a set period (typically between 18 and 24 months), the processing power of microprocessors doubles. This doesn’t always mean the number of transistors on a chip in 2012 is exactly double the number in 2010. Instead, it often means we've found new, more efficient ways to design chips, boosting processing speed without requiring the transistor count itself to double.

By shifting the focus of Moore's Law to processing power rather than physical components, we've made the observation more adaptable. Companies can now combine advances in manufacturing technology with improved microprocessor architecture to continue keeping up with the law.

Is this redefinition of Moore's Law a form of cheating? And does it matter? Back in 1965, Moore predicted that a chip produced in 1975 would contain 65,000 transistors if his observation proved correct. Today, Intel manufactures processors with 2.6 billion transistors [source: Intel]. Modern computers process data much faster than their predecessors, and a home PC today holds as much power as some of the earliest supercomputers.
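One rough way to test the two figures above against each other is to ask what doubling period would connect them. The sketch below does that arithmetic. It assumes the 2.6-billion-transistor processor dates from 2011, which the article does not state, and it uses Moore's predicted 65,000 for 1975 rather than a measured chip, so treat the result as a back-of-the-envelope estimate.

```python
import math


def implied_doubling_years(start_count, end_count, years_elapsed):
    """Return the doubling period, in years, that would grow start_count
    into end_count over years_elapsed years of steady exponential growth."""
    doublings = math.log2(end_count / start_count)
    return years_elapsed / doublings


predicted_1975 = 65_000      # Moore's 1965 prediction for a 1975 chip
modern_count = 2.6e9         # transistor count cited from Intel
years = 2011 - 1975          # 2011 is an assumed date for the modern chip

period = implied_doubling_years(predicted_1975, modern_count, years)
print(f"Implied doubling period: {period:.1f} years ({period * 12:.0f} months)")
```

Under these assumptions the implied doubling period comes out somewhat longer than two years, noticeably slower than the original 12-month pace.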

Another way to approach this question is to wonder whether it even matters if computers are twice as powerful today as they were two years ago. If we are truly in a post-PC era, as Steve Jobs once suggested, then faster microprocessors may not be as crucial as they once were. Today, the emphasis may be on energy efficiency and portability. If this is the case, Moore's Law might come to an end, not because we've reached a fundamental limit, but because pushing further could become economically unfeasible.

Some parts of the computer market will continue to demand the best in processing power. Gamers and those working with high-definition media often need, or at least want, as much processing power as possible. But what about the rest of us?

Even if our personal computers eventually transform into basic terminals relying on cloud access, there will still need to be a powerful computer with a strong processor somewhere. Perhaps we’ll see a new interpretation of Moore's Law, where processors take longer to double in power. Given its flexible history, it seems probable that Moore's Law will persist in some form for a while longer.