It turns out robots are pretty good at playing catch. Take Robot Justin, a humanoid with two arms developed by the German aerospace organization, Deutsches Zentrum für Luft- und Raumfahrt. It can autonomously perform tasks like catching balls or serving coffee.

© Michael Dalder/Reuters/Corbis

Being human is much simpler than building a human.

Imagine something as simple as tossing a ball with a friend in your front yard. When you break down the biological tasks needed to do this, it turns out to be anything but simple. You need sensors, transmitters, and effectors. You need to gauge how hard to throw based on the distance between you and your friend. You must also factor in sun glare, wind speed, and distractions nearby. Additionally, you must know how tightly to grip the ball and when to catch it. You need to consider possible scenarios: What if the ball goes over my head? What if it rolls into traffic? What if it breaks my neighbor's window?

These scenarios highlight some of the thorniest challenges in robotics, and they set the stage for our countdown. We've compiled a list of the top 10 hardest things to teach robots, ordered from "easiest" to "most difficult." These are the hurdles we must overcome to make the worlds envisioned by Bradbury, Dick, Asimov, Clarke, and other storytellers a reality: worlds where machines behave like people.

10: Blaze a Trail

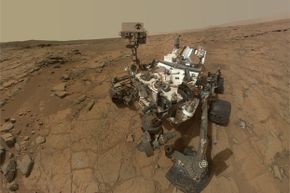

The ultimate test of navigation for a robot: Mars! So far, Curiosity has shown impressive skills in navigating the Martian surface.

Image courtesy NASA/JPL-Caltech/MSSS

Moving from one place to another seems like a simple task. We do it every day without thinking. For a robot, though, navigation can be quite complex, especially in unfamiliar or constantly changing environments. A robot must first perceive its surroundings and then process the incoming data to make sense of them.

To tackle the first challenge, roboticists equip their machines with sensors, scanners, cameras, and other tools for analyzing their environment. Laser scanners are increasingly popular, but they work poorly underwater, because water scatters the light and sharply reduces the sensor's range. Underwater robots can use sonar instead, though it is less accurate than laser scanning. Sets of integrated stereoscopic cameras can also help robots perceive depth in their surroundings.

Gathering environmental data is only part of the challenge. The real difficulty lies in processing that data and using it to make decisions. Many researchers have their robots navigate with a pre-made map or build one as they go. Building the map while simultaneously tracking the robot's position within it is known as SLAM, short for Simultaneous Localization and Mapping. Mapping describes how a robot turns sensor data into a spatial representation of its surroundings; localization describes how it works out where it is within that map. Because each process depends on the other, the two must run together, and researchers have tackled the problem with more powerful computers and sophisticated algorithms that estimate the robot's position probabilistically.
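To make "estimating position with probabilities" concrete, here is a minimal sketch of a 1-D histogram filter (a simple form of Markov localization) in Python. It covers only the localization half of SLAM and assumes the map is already known; the corridor of doors and walls, the sensor accuracy (`p_hit`, `p_miss`), and the motion-noise values are all invented for illustration, and `sense` and `move` are hypothetical helper names rather than any library's API.

```python
def normalize(belief):
    """Rescale probabilities so they sum to 1."""
    total = sum(belief)
    return [b / total for b in belief]


def sense(belief, world, measurement, p_hit=0.6, p_miss=0.2):
    """Bayes update: favor cells whose map feature matches the sensor reading."""
    weighted = [
        b * (p_hit if feature == measurement else p_miss)
        for b, feature in zip(belief, world)
    ]
    return normalize(weighted)


def move(belief, step, p_exact=0.8, p_under=0.1, p_over=0.1):
    """Shift the belief `step` cells along a circular map, blurring it for wheel slip."""
    n = len(belief)
    shifted = [0.0] * n
    for i, b in enumerate(belief):
        shifted[(i + step) % n] += b * p_exact
        shifted[(i + step - 1) % n] += b * p_under  # moved one cell too few
        shifted[(i + step + 1) % n] += b * p_over   # moved one cell too many
    return shifted


# Hypothetical circular corridor the robot already has a map of.
world = ["door", "wall", "door", "wall", "wall"]
belief = [1 / len(world)] * len(world)  # start completely unsure of position

for reading in ["door", "wall", "wall"]:
    belief = sense(belief, world, reading)
    belief = move(belief, 1)

best_guess = max(range(len(world)), key=lambda i: belief[i])
print(best_guess, [round(b, 3) for b in belief])
```

Each `sense` step sharpens the belief around cells that match what the robot saw, and each `move` step shifts it and blurs it slightly to account for imprecise motion. After three readings, most of the probability sits on cell 0, the only position consistent with everything observed. Full SLAM systems run a similar predict-and-correct loop while also updating the map itself.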

9: Exhibit Dexterity

Twendy-One, a robot designed to assist elderly and disabled individuals in their homes, showcased its skill by delicately manipulating a drinking straw between its fingers at Waseda University in Tokyo on January 8, 2009.

© Issei Kato/Reuters/Corbis

For years, robots have picked up parcels and components in factories and warehouses. In those settings, though, they generally stay away from people and mostly handle uniformly shaped objects in orderly spaces. Life is far messier for any robot that ventures beyond the factory walls. To work in homes or healthcare settings, a robot needs a sophisticated sense of touch so it can detect nearby people and pick out a single item from a cluttered pile.

These abilities are extremely difficult for robots to master. In the past, scientists avoided touch altogether, programming machines to treat any contact with an object as a failure. In recent years, however, there has been notable progress in compliant designs and artificial skin. Compliance describes a robot's flexibility: flexible machines are more compliant, while rigid ones are less so.

In 2013, researchers at Georgia Tech developed a robotic arm with spring-based joints, allowing it to bend and interact with its environment similarly to the way a human arm would. They then coated it with 'skin' that could sense pressure or touch. Some robotic skins feature interlocking hexagonal circuit boards equipped with infrared sensors capable of detecting objects that come closer than one centimeter. Others utilize electronic 'fingerprints,' raised and textured surfaces that enhance grip and facilitate signal processing.
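As a rough illustration of what compliance means in numbers, the sketch below models a joint driven through a torsional spring using Hooke's law, with compliance as the inverse of stiffness. The `SpringJoint` class and its stiffness values are hypothetical; they are not taken from the Georgia Tech arm, whose design details aren't given here.

```python
from dataclasses import dataclass


@dataclass
class SpringJoint:
    """A joint driven through a torsional spring (a simple Hooke's-law model)."""

    stiffness: float  # spring constant k, in newton-metres per radian

    @property
    def compliance(self) -> float:
        # Compliance is the inverse of stiffness: the softer the spring, the more it gives.
        return 1.0 / self.stiffness

    def deflection(self, external_torque: float) -> float:
        """How far (in radians) the joint yields when something pushes on it."""
        return external_torque * self.compliance

    def contact_torque(self, target_angle: float, blocked_angle: float) -> float:
        """Torque the spring presses with when an obstacle holds the link short of its target."""
        return self.stiffness * (target_angle - blocked_angle)


rigid = SpringJoint(stiffness=500.0)  # hypothetical stiff, factory-style joint
soft = SpringJoint(stiffness=20.0)    # hypothetical compliant joint

# The same 2 N*m bump (say, brushing against a person's arm):
print(rigid.deflection(2.0), soft.deflection(2.0))
# Reaching toward 0.5 rad but stopped by an obstacle at 0.4 rad:
print(rigid.contact_torque(0.5, 0.4), soft.contact_torque(0.5, 0.4))
```

The softer joint yields 25 times as much for the same push, and when it's blocked 0.1 rad short of its target it presses back with about 2 N·m instead of about 50 N·m. That gentler response is a big part of what makes compliant arms safer around people.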

By combining these advanced robotic arms with enhanced vision systems, you can create a robot capable of performing delicate actions, such as offering a gentle touch or reaching into a cupboard to pick one object from a larger assortment.

8: Hold a Conversation

On July 26, 2013, mechatronics engineer Ben Schaefer engaged with the humanoid robot bartender Carl at the Robots Bar and Lounge in Germany. Created by Schaefer, Carl is also capable of conversing with patrons during their visits.

© Fabrizio Bensch/Reuters/Corbis

Alan M. Turing, a pioneering figure in computer science, made a daring forecast in 1950: machines would one day communicate so smoothly that we wouldn't be able to tell them apart from humans. So far, machines (even Siri) have fallen short of Turing's prediction. Part of the challenge is that recognizing speech is not the same as understanding it. Converting sounds into words is a very different problem from natural language processing, which tries to replicate the mental process our brains use to comprehend words and sentences in conversation.

At first, scientists believed the solution was simple: just program the rules of grammar into a machine's memory. However, encoding a comprehensive grammatical guide for any language has proven to be unfeasible. Even teaching a machine the meanings of individual words turned out to be an enormous challenge. Consider the confusion between "new" and "knew," or "bank" (a financial institution) versus "bank" (the edge of a river). It turns out that humans make sense of these language quirks through cognitive abilities developed over millennia, and scientists have yet to break these abilities down into clear-cut, identifiable rules.

Many robots today utilize statistical methods for processing language. Researchers provide them with vast datasets of text, referred to as a corpus, and allow the machines to break this data into smaller pieces to identify which words tend to appear together and in what sequence. This helps the robot 'learn' language by analyzing patterns. For instance, the word "bat" followed by "fly" or "wing" would signal the flying mammal, while "bat" followed by "ball" or "glove" would suggest the sport.
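Here is a toy version of that statistical idea in Python, assuming a tiny hand-labeled corpus: count which words appear alongside "bat" in each sense, then label a new sentence by whichever sense its words overlap with most. The sentences, the stop-word list, and the helper names are invented for illustration. Real systems use far larger corpora and proper probabilistic models, and this sketch ignores word order entirely.

```python
from collections import Counter

# Hypothetical hand-labeled corpus: each sentence uses "bat" in a known sense.
LABELED = [
    ("the bat can fly at night using its wing", "animal"),
    ("a bat will fly out of the cave at dusk", "animal"),
    ("he swung the bat and hit the ball", "sports"),
    ("she bought a bat and a glove for the game", "sports"),
]

# Very common words carry little meaning, so they are ignored.
STOPWORDS = {"a", "and", "at", "for", "its", "of", "the"}


def context_words(sentence):
    """Lowercased words from the sentence, minus "bat" itself and stop words."""
    return [w for w in sentence.lower().split() if w != "bat" and w not in STOPWORDS]


def train(labeled):
    """For each sense, count how often every context word co-occurs with "bat"."""
    counts = {}
    for sentence, sense in labeled:
        counts.setdefault(sense, Counter()).update(context_words(sentence))
    return counts


def guess_sense(sentence, counts):
    """Pick the sense whose training contexts share the most words with this sentence."""
    words = context_words(sentence)
    scores = {sense: sum(c[w] for w in words) for sense, c in counts.items()}
    return max(scores, key=scores.get)


counts = train(LABELED)
print(guess_sense("Watch the bat fly", counts))  # animal
print(guess_sense("a bat and ball", counts))     # sports
```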

7: Acquire New Skills

A robot shows off its writing skills on November 16, 2012, during a contest for student-built intelligent robots at China's Anhui University of Science and Technology.

© Chen Bin/Xinhua Press/Corbis

Imagine a person who's never played golf trying to learn how to swing a club. They could read a book and practice, or they could observe an experienced golfer performing the correct motions, which would likely be a faster and more efficient way to learn the skill.

Roboticists face a similar dilemma when developing autonomous machines that can learn new skills. One approach, much like learning golf from a book, is to break an activity down into steps and program each one into the robot. However, this assumes that every part of the task can be fully described, dissected, and coded, which is often harder than it sounds. Some aspects of a golf swing, such as the interplay between wrist and elbow, are hard to describe in detail. Subtle movements like these are much easier to demonstrate than to explain.

In recent years, researchers have made strides in teaching robots to replicate human actions. This approach, known as imitation learning or learning from demonstration (LfD), equips machines with arrays of wide-angle and zoom cameras so they can "observe" a human demonstrating a task. Learning algorithms then process the footage to build a mathematical mapping from what the robot sees to the actions it should take. Robots must also learn to disregard irrelevant behaviors, such as the demonstrator scratching an itch, and to cope with anatomical differences between humans and robots, since a robot's joints rarely line up one-to-one with a person's.
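The sketch below illustrates two of those problems in simplified, symbolic form. It assumes the camera data has already been turned into named steps (a big assumption), keeps only steps that appear in most demonstrations so a one-off nose scratch drops out, and then translates human motions into robot joint commands through a lookup table. The consistency heuristic and the `HUMAN_TO_ROBOT` table are hypothetical stand-ins, not a description of any particular LfD system, which would typically work with continuous trajectories instead.

```python
from collections import Counter


def consistent_steps(demos, min_fraction=0.5):
    """Keep steps seen in more than `min_fraction` of demonstrations, in first-seen order."""
    appearances = Counter()
    for demo in demos:
        appearances.update(set(demo))  # count each step at most once per demo
    threshold = min_fraction * len(demos)
    kept = []
    for demo in demos:
        for step in demo:
            if appearances[step] > threshold and step not in kept:
                kept.append(step)
    return kept


# Hypothetical mapping from human motions to this particular robot's joints,
# needed because the robot's arm is not built like a human arm.
HUMAN_TO_ROBOT = {
    "grip club": "close gripper",
    "bend elbow": "rotate joint 3",
    "rotate wrist": "rotate joint 6",
    "swing arms": "sweep joints 1 and 2",
}

# Three hypothetical demonstrations, already segmented into named steps.
demos = [
    ["grip club", "bend elbow", "scratch nose", "rotate wrist", "swing arms"],
    ["grip club", "bend elbow", "rotate wrist", "swing arms"],
    ["grip club", "adjust cap", "bend elbow", "rotate wrist", "swing arms"],
]

steps = consistent_steps(demos)
plan = [HUMAN_TO_ROBOT[s] for s in steps]
print(steps)
print(plan)
```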

6: Practice Deception

Squirrels have mastered the art of deception, prompting researchers to study these clever creatures for inspiration on how to teach robots deceptive behaviors.

John Foxx/Stockbyte/Thinkstock

The intricate skill of deception has evolved to help animals outsmart rivals and evade predators. With enough practice, it becomes a powerful survival tool.

For robots, learning how to deceive humans or other robots presents a challenge (and you may not mind that). Deception requires imagination—the ability to create mental images or ideas of things that aren't immediately perceptible through the senses—something machines typically lack. While robots excel at processing raw input from cameras, sensors, and scanners, they struggle to form abstract concepts beyond that sensory data.

Future robots might become more adept at deception. Researchers at Georgia Tech have successfully taught robots some of the trickery used by squirrels. First, they studied these clever rodents, who protect their food stashes by leading other animals to false caches. They then programmed these behaviors into their robots. The robots were able to use algorithms to determine when deception could be useful and, if necessary, mislead another robot by sending it to a decoy location.
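Here is a minimal Python sketch of that kind of decision, assuming deception is warranted only when a competitor is watching and both robots want the same resource. The site names and the two-condition rule are hypothetical simplifications; the Georgia Tech robots' actual decision algorithm isn't described here.

```python
import random


def should_deceive(competitor_watching: bool, competing_for_food: bool) -> bool:
    """Deception only pays off when someone is watching AND wants the same food."""
    return competitor_watching and competing_for_food


def next_site(real_caches, decoys, competitor_watching, competing_for_food, rng):
    """Choose where to patrol next: a real cache, or a decoy to mislead a follower."""
    if decoys and should_deceive(competitor_watching, competing_for_food):
        return rng.choice(decoys)    # lead the follower to an empty spot
    return rng.choice(real_caches)   # otherwise keep guarding the real stash


rng = random.Random(7)
real, fake = ["oak stump", "pine root"], ["rock pile"]
print(next_site(real, fake, competitor_watching=False, competing_for_food=True, rng=rng))
print(next_site(real, fake, competitor_watching=True, competing_for_food=True, rng=rng))
```

Splitting the "when" (`should_deceive`) from the "how" (`next_site`) mirrors the two abilities described above: recognizing that deception would help, and then actually sending the other robot to a decoy.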

5: Anticipate Human Actions

For robots like the humanoid ROBOY to spend meaningful time with humans, they must get better at predicting human behavior, which is no easy task given how unpredictable people can be.

© Erik Tham/Corbis

On the animated show "The Jetsons," Rosie the robot maid was more than just a domestic helper; she could hold conversations, cook, clean, and cater to the needs of the Jetson family. In one episode, Mr. Spacely, George's boss, visits for dinner. After the meal, Mr. Spacely reaches for a cigar, prompting Rosie to quickly offer him a lighter. This simple response showcases Rosie's ability to anticipate human behavior, a complex skill based on predicting what comes next from a sequence of actions.

Like deception, anticipating human behavior requires a robot to envision a future scenario. The robot must be able to predict that if a human does X, it is likely that Y will follow. This has been a difficult task for robots, but progress is being made. At Cornell University, a team is working on an autonomous robot capable of reacting to how a person interacts with objects in a room. The robot uses 3-D cameras to capture the environment, then isolates key objects from the background. By analyzing past data, it predicts what might happen next based on the human's movements and interactions, adjusting its actions accordingly.

Although the Cornell robots still make mistakes from time to time, they continue to improve as camera technology advances.
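One simple way to express "if a human does X, Y is likely to follow" is a first-order Markov model: count which action followed each action in past observations and predict the most frequent successor. The sketch below assumes actions have already been recognized and named, and the kitchen sequences are invented. The Cornell system works from 3-D camera data with a much richer model; this only shows the underlying intuition.

```python
from collections import Counter, defaultdict


def train(sequences):
    """For each observed action, count which action came next (a first-order Markov model)."""
    follows = defaultdict(Counter)
    for seq in sequences:
        for current, following in zip(seq, seq[1:]):
            follows[current][following] += 1
    return follows


def predict_next(follows, action):
    """Return the most likely next action and its estimated probability."""
    options = follows.get(action)
    if not options:
        return None, 0.0  # never seen this action, so no prediction
    best, count = options.most_common(1)[0]
    return best, count / sum(options.values())


# Hypothetical past observations of someone in a kitchen.
history = [
    ["open fridge", "take milk", "pour milk", "drink"],
    ["open fridge", "take milk", "pour milk", "put milk away"],
    ["pick up cup", "pour milk", "drink", "put cup down"],
]

model = train(history)
print(predict_next(model, "pour milk"))  # ('drink', 0.6666666666666666)
```

A robot could use the returned probability to decide whether to act early (say, fetching a glass) or wait for more evidence, which is where better data from improved cameras pays off.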

4: Coordinate Activities With Another Robot

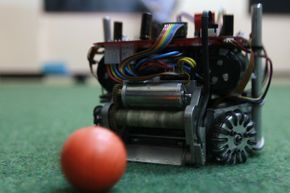

A close-up view of a member from Columbia's robot soccer team. One of the RoboCup Soccer leagues involves several fully autonomous robots collaborating to play soccer, while another league showcases humanoid robots in action!

© John Vizcaino/Reuters/Corbis

Building a single large machine, such as an android, takes a substantial investment of time, effort, and money. Another approach is to deploy numerous smaller, simpler robots that work together to accomplish more intricate tasks.

This approach presents its own set of challenges. A robot working as part of a team must accurately position itself in relation to its teammates and communicate effectively with both other machines and human operators. To overcome these obstacles, scientists have turned to nature, observing insects that demonstrate complex swarming behaviors to find food and perform tasks that benefit the entire colony. For instance, by studying ants, researchers have learned that these creatures use pheromones to communicate with each other.

Robots can use the same kind of "pheromone logic" that ants do, except that they communicate with light instead of chemicals. Here's how it works: A group of small robots is scattered within a confined space. At first, they wander at random until one of them comes across a light trail left by another. That bot follows the trail and adds its own light to it, strengthening the path. As the trail grows brighter, more robots notice it and follow. Some researchers have also had success with sound signals, such as chirps, which can keep bots close together or direct them to areas of interest.
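The positive feedback loop in that description can be sketched with an abstract recruitment model in Python. It deliberately ignores where the robots are in the arena: each tick, robots already on the trail add light, old light fades, and each wandering robot joins with a probability that rises with trail brightness. The decay rate, deposit amount, and `HALF_NOTICE` constant are hypothetical.

```python
import random

DECAY = 0.95       # hypothetical: share of trail light left after each tick
DEPOSIT = 1.0      # hypothetical: light each following robot adds per tick
HALF_NOTICE = 5.0  # hypothetical: brightness at which a wanderer has a 50% chance to notice


def simulate(n_robots, ticks, rng, pioneers=1):
    """Return (robots following, trail brightness) after each tick."""
    following = pioneers
    brightness = 0.0
    history = []
    for _ in range(ticks):
        # Old light fades a little; every robot on the trail adds fresh light.
        brightness = brightness * DECAY + following * DEPOSIT
        # A brighter trail is easier to spot, so recruitment speeds up.
        p_notice = brightness / (brightness + HALF_NOTICE)
        wanderers = n_robots - following
        following += sum(1 for _ in range(wanderers) if rng.random() < p_notice)
        history.append((following, brightness))
    return history


for tick, (following, brightness) in enumerate(simulate(20, 10, random.Random(1)), 1):
    print(f"tick {tick:2d}: {following:2d} robots on trail, brightness {brightness:5.1f}")
```

With one pioneer the trail starts faint and recruitment is slow, but each new follower brightens it and speeds things up. With zero pioneers there is no light to notice, so no robot ever joins.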

3: Make Copies of Itself

A hydra demonstrates its ability to self-replicate, a trait that many roboticists would like to incorporate into their machines.

luismmolina/iStock/Thinkstock

God told Adam and Eve to "be fruitful, and multiply, and replenish the earth." A robot given the same directive, however, would likely be confused or frustrated. Why? Because self-replication is a challenging concept. Building a robot is one thing; building one that can replicate itself or regenerate missing or damaged parts is an entirely different feat.

Interestingly, robots might not look to humans for examples of reproduction. After all, humans don't exactly split in two. However, some animals do. For instance, hydra, relatives of jellyfish, reproduce asexually through a process called budding. In this method, a small sac forms on the parent's body, swells up, and eventually detaches to become a new, genetically identical organism.

Researchers are developing robots capable of performing basic cloning functions. These robots are typically built from modular units, often cubes, that contain identical components and the programming needed for self-replication. Each cube has magnets on its faces, allowing it to attach to and detach from its neighbors. The cubes are also split diagonally, and each half can rotate independently. A complete robot consists of several cubes arranged in a specific pattern. Given enough spare cubes, a single robot can disassemble part of itself, use those pieces to start a new robot, and then pick up cubes from the supply until two fully functional robots are standing side by side.
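Here is a bookkeeping sketch of that replication sequence, assuming a robot is simply a stack of `ROBOT_HEIGHT` cubes (a hypothetical value of four) and that spare cubes sit in a supply pile. The parent hands its top half over as the child's base, then both robots pull cubes from the supply until each is complete. Magnets, rotating halves, and motion planning are all ignored.

```python
ROBOT_HEIGHT = 4  # hypothetical: a complete robot is a stack of four cubes


def replicate(parent, supply):
    """Split a full robot to seed a child, then rebuild both from a supply of spare cubes.

    `parent` and `supply` are lists of cube IDs (for a robot, bottom cube first).
    Returns (parent, child, remaining_supply).
    """
    if len(parent) != ROBOT_HEIGHT:
        raise ValueError("parent must be a complete robot")
    # Giving away half and regrowing two full robots costs exactly one robot's worth.
    if len(supply) < ROBOT_HEIGHT:
        raise ValueError("not enough spare cubes to finish two robots")
    supply = list(supply)
    half = ROBOT_HEIGHT // 2
    # 1. The parent detaches its top cubes and sets them down as the child's base.
    parent, child = list(parent[:half]), list(parent[half:])
    # 2. Each robot pulls cubes from the supply until it is complete again.
    for robot in (parent, child):
        while len(robot) < ROBOT_HEIGHT:
            robot.append(supply.pop())
    return parent, child, supply


parent, child, leftover = replicate(["c1", "c2", "c3", "c4"], ["s1", "s2", "s3", "s4", "s5"])
print(parent, child, leftover)  # ['c1', 'c2', 's5', 's4'] ['c3', 'c4', 's3', 's2'] ['s1']
```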

2: Act Based on Ethical Principles

If we design autonomous, lethal robots that can operate without human control, how would we program them to understand ethics?

© Fang Zhe/Xinhua Press/Corbis

Throughout our day, we make numerous decisions, constantly weighing our actions against moral considerations: what's right or wrong, what's fair or unfair. If robots are to mimic human behavior, they'll need to grasp the concept of ethics.

Like language, programming ethical behavior is a monumental task, largely due to the absence of a universally accepted set of ethical principles. Every culture has its own guidelines for conduct and different systems of law. Within cultures, regional variations further influence how actions are judged and measured. Writing a globally applicable ethics guide for robots to learn from would be nearly impossible.

Researchers have recently made progress toward ethical robots by narrowing the scope of the problem. A robot placed in a specific environment, such as a kitchen or a patient's room in a care facility, has far fewer ethical guidelines to follow and a much better chance of making ethically sound decisions. To achieve this, engineers feed scenarios involving ethical choices into a machine-learning algorithm. Each scenario is rated on a sliding scale against three criteria: how much good an action would do, how much harm it would prevent, and how fair it is. From these examples, the algorithm derives an ethical principle the robot can apply to its decision-making. With this type of artificial intelligence, future household robots might be able to figure out who in the family should handle the dishes and who controls the TV remote for the evening.
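To show how scored scenarios can turn into a reusable principle, the sketch below learns one weight per criterion with a simple perceptron, a technique swapped in for illustration; the learning method the researchers actually used isn't specified here. Each hypothetical training case pairs the option judged ethical with an alternative, with every criterion rated on an invented -2 to +2 scale.

```python
CRITERIA = ("good_done", "harm_prevented", "fairness")


def score(option, weights):
    """Weighted sum of an option's ratings on each criterion."""
    return sum(w * x for w, x in zip(weights, option))


def learn_principle(cases, epochs=20, lr=1.0):
    """Perceptron: find weights under which every preferred option outscores its rival."""
    weights = [0.0] * len(CRITERIA)
    for _ in range(epochs):
        mistakes = 0
        for preferred, rival in cases:
            diff = [p - r for p, r in zip(preferred, rival)]
            if score(diff, weights) <= 0:
                # Misjudged case: nudge the weights toward the preferred option.
                weights = [w + lr * d for w, d in zip(weights, diff)]
                mistakes += 1
        if mistakes == 0:
            break
    return weights


# Hypothetical training cases: (option judged ethical, alternative), each rated
# (good_done, harm_prevented, fairness) on a -2..+2 sliding scale.
cases = [
    ((1, 2, 0), (1, 0, 0)),   # unplug a frayed lamp cord before vacuuming vs. just vacuum
    ((0, 0, 2), (0, 0, -1)),  # give the remote to whoever hasn't had it vs. the same child again
    ((2, 0, 0), (0, 0, 0)),   # bring a requested glass of water vs. do nothing
    ((0, 2, 0), (2, 0, 0)),   # stop a toddler reaching for the stove vs. finish serving dessert
]

weights = learn_principle(cases)
dishes = {
    "Ana, third night in a row": (1, 0, -2),
    "Ben, whose turn it is": (1, 0, 1),
}
print(dict(zip(CRITERIA, weights)))  # {'good_done': 2.0, 'harm_prevented': 4.0, 'fairness': 3.0}
print(max(dishes, key=lambda name: score(dishes[name], weights)))  # Ben, whose turn it is
```

On these four cases the learned weights rank preventing harm highest, then fairness, then doing good, and the robot applies that principle to a dishes dispute it never saw during training.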

1: Feel Emotions

In addition to his emotional abilities, Nao knows exactly how to unwind.

© Gerd Roth/dpa/Corbis

"The best and most beautiful things in the world cannot be seen or even touched. They must be felt with the heart." If Helen Keller's insight holds true, robots would sadly be missing out on life's greatest treasures. While robots are skilled at perceiving the world around them, they can't transform this sensory information into genuine emotions. They can't experience joy when they see a loved one's smile, nor can they feel fear when confronted with the scowl of a stranger.

This is perhaps the biggest divide between humans and machines. How could you teach a robot to fall in love? How would you program it to feel frustration, disgust, amazement, or sympathy? And is it even worth attempting?

Some researchers believe that future robots will incorporate cognitive emotion systems that help them work more efficiently, learn faster, and engage more effectively with humans. Remarkably, prototypes that display a limited range of human emotions already exist. Nao, a robot developed by a European research team, exhibits affective behaviors similar to those of a 1-year-old child. It expresses emotions such as happiness, anger, fear, and pride by combining specific postures with gestures. These emotional displays, inspired by studies of chimpanzees and human infants, are programmed into Nao, but the robot itself decides which emotion to display based on its interactions with the people and objects around it. In the near future, robots like Nao could be employed in hospitals, homes, schools, and other settings, where they might offer assistance and a sympathetic presence.
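The sketch below illustrates that division of labor: the displays are fixed, pre-programmed posture and gesture pairs, while the choice among them depends on recent interactions. The event names, the decay and threshold values, and the appraisal table are hypothetical, and this is not how Nao's actual emotion software is built.

```python
# Hypothetical appraisal table: which emotion each kind of interaction tends to evoke.
APPRAISAL = {
    "smiled_at": "happiness",
    "hugged": "happiness",
    "praised_after_task": "pride",
    "toy_taken_away": "anger",
    "loud_noise": "fear",
    "stranger_looms_close": "fear",
}

# Hypothetical pre-programmed displays, each a posture combined with a gesture.
DISPLAYS = {
    "happiness": ("upright, head raised", "arms lifted and waving"),
    "pride": ("chest out, head tilted back", "arms raised high"),
    "anger": ("leaning forward", "arms pushed down and back"),
    "fear": ("crouched, head lowered", "arms drawn in to shield face"),
    "neutral": ("standing", "arms at sides"),
}


class EmotionSelector:
    """Accumulates recent interactions and picks which stored display to show."""

    def __init__(self, decay=0.7, threshold=0.5):
        self.decay = decay          # how quickly earlier feelings fade per event
        self.threshold = threshold  # minimum level before anything is displayed
        self.levels = {e: 0.0 for e in DISPLAYS if e != "neutral"}

    def observe(self, event):
        # Older feelings fade a little before the new interaction is counted.
        for emotion in self.levels:
            self.levels[emotion] *= self.decay
        evoked = APPRAISAL.get(event)
        if evoked:
            self.levels[evoked] += 1.0

    def display(self):
        emotion, level = max(self.levels.items(), key=lambda kv: kv[1])
        if level < self.threshold:
            emotion = "neutral"
        return emotion, DISPLAYS[emotion]


robot = EmotionSelector()
for event in ["smiled_at", "loud_noise", "loud_noise"]:
    robot.observe(event)
    print(event, "->", robot.display()[0])
```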