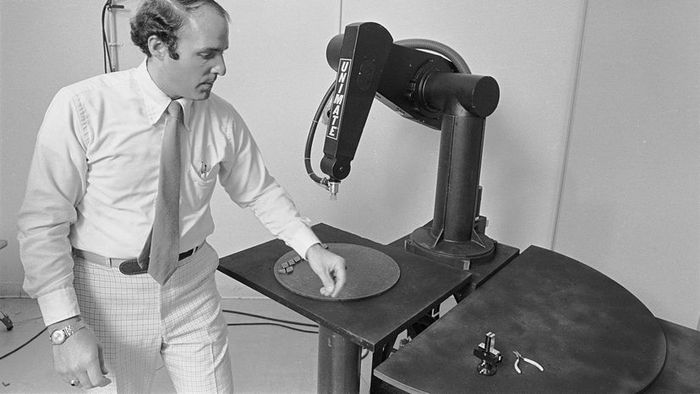

A robotic arm used to retrieve parts at a Michigan automotive plant caused the fatal accident. The arm shown here is not the industrial robot involved; it is the Programmable Universal Machine for Assembly, or PUMA. (Image credit: Bettmann/Getty Images)

On January 25, 1979, Robert Williams, a 25-year-old worker at the Ford Motor Company casting plant in Flat Rock, Michigan, was tasked with manually counting parts stored on a towering five-story shelf. The robotic system, designed to retrieve the parts, was malfunctioning, and it was Williams' responsibility to climb up and verify the accurate count.

While Williams performed his task, the robotic arm, which was also retrieving parts, continued its work. The robot approached Williams unnoticed and struck him in the head, killing him instantly. The machine carried on with its task, and Williams' body lay undiscovered for 30 minutes until alarmed co-workers found him, according to a report by Knight-Ridder.

On that cold winter day, Williams became the first human in history to die at the hands of a robot.

The death, however, was purely accidental. There were no safety measures in place to protect Williams. No alarms warned him about the approaching arm, and there was no technology to stop the robot’s actions when a human was nearby. In 1979, the artificial intelligence used wasn't advanced enough to prevent such a tragedy. A jury concluded that the robot's design had insufficient precautions to prevent this kind of fatality. Williams' family was awarded $10 million in a wrongful death lawsuit against Unit Handling Systems, a division of Litton Industries, the robot's manufacturer.

The next fatality involving a robot occurred a little over two years later, in Japan, under similar circumstances: a robotic arm again failed to detect a worker, 37-year-old Kenji Urada, and inadvertently caused his death.

In the years since, roboticists, computer scientists, and artificial intelligence experts have continued to grapple with how to ensure robots can safely interact with humans without causing harm.

Decades later, deaths caused by robots or artificial intelligence appear in the news more frequently. Uber and Tesla have both made headlines with incidents involving their self-driving cars, in which passengers were killed or pedestrians were struck. While numerous safety measures are now in place, the problem persists.

None of these fatalities are the result of a robot's conscious intent; rather, they are all unfortunate accidents. Yet, there is a growing concern, fueled by movies and science fiction tales like the "Terminator" and "Matrix" franchises, that artificial intelligences might one day develop a will of their own, potentially leading to harm against humans.

Shimon Whiteson, associate professor in the computer science department at the University of Oxford and co-founder of Morpheus Labs, refers to this fear as the "anthropomorphic fallacy." He describes the fallacy as "the belief that any system with human-like intelligence must also have human-like desires, such as a will to survive, be free, or possess dignity. There is no reason to believe this would be true, as these systems will only have the desires we program into them."

Whiteson argues that a far greater existential risk lies in the misalignment of values, where a machine's actions diverge from the programmer's true intent. He asks, "How can we ensure that the actions of an intelligent system align with our real values? The gap between intention and execution becomes increasingly significant as the system becomes more intelligent and autonomous."

Whiteson also warns of a more deliberate danger: scientists designing robots capable of killing humans without any human intervention, specifically for military purposes. This is why AI and robotics researchers worldwide published an open letter calling for a global ban on such technologies. In response, the United Nations is convening again in 2018 to discuss regulations for so-called "killer robots." These robots wouldn't need to develop independent desires to kill; they could simply be programmed to do so.

The Guardian reports that 381 semi-autonomous military robots are either already deployed or under development in a dozen countries, including China, France, Israel, the U.K., and the U.S. Additionally, fully automated systems are already in use, though they are not fully autonomous.