Many are deterred by the complicated symbols and rigid rules of mathematics, often abandoning a problem the moment they encounter a mix of numbers and variables. While math can be challenging and dense, the results it reveals can sometimes be striking, mind-blowing, or entirely unexpected. Discoveries such as these:

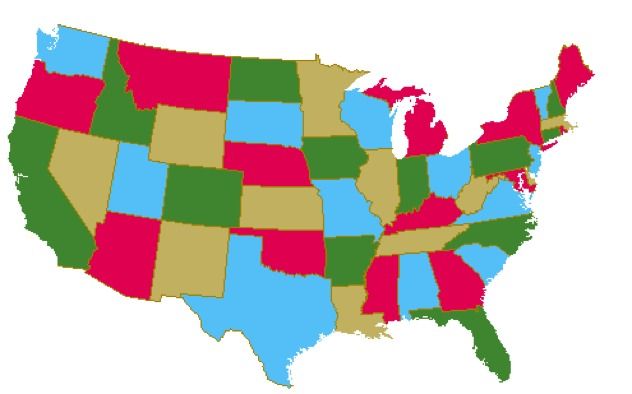

10. The 4-Color Theorem

In 1852, Francis Guthrie made a groundbreaking discovery while attempting to color a map of England's counties. This was long before the advent of the internet and the typical distractions. Guthrie found something intriguing—he only required four colors to ensure no adjacent counties shared the same color. He then posed the question of whether this rule applied to any map, sparking a mathematical curiosity that remained unsolved for many years.

In 1976, over a century after the problem was first posed, Kenneth Appel and Wolfgang Haken finally solved it. Their proof was intricate and involved the use of a computer, but it concluded that any political map (for example, a map of the United States) requires no more than four colors to ensure that no adjacent regions share the same color.
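To get a feel for what the theorem promises, here is a minimal sketch that colors a small made-up map by trial and error. The regions `A` to `E`, their borders, and the color names are all hypothetical, and this simple backtracking search is an illustration only; it has nothing to do with how Appel and Haken's computer-assisted proof works.

```python
# Hypothetical toy map: each region lists the regions it shares a border with.
BORDERS = {
    "A": ["B", "C", "D"],
    "B": ["A", "C", "E"],
    "C": ["A", "B", "D", "E"],
    "D": ["A", "C", "E"],
    "E": ["B", "C", "D"],
}
COLORS = ["red", "green", "blue", "yellow"]


def color_map(borders, colors):
    """Backtracking search: return {region: color}, or None if no coloring exists."""
    regions = list(borders)
    assignment = {}

    def solve(i):
        if i == len(regions):
            return True  # every region has a color
        region = regions[i]
        for color in colors:
            # A color is allowed only if no already-colored neighbor uses it.
            if all(assignment.get(n) != color for n in borders[region]):
                assignment[region] = color
                if solve(i + 1):
                    return True
                del assignment[region]  # undo and try the next color
        return False

    return assignment if solve(0) else None


coloring = color_map(BORDERS, COLORS)
print(coloring)
```

Passing fewer colors (for example `COLORS[:2]`) makes the search fail on this map, because regions `A`, `B`, and `C` all border one another.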

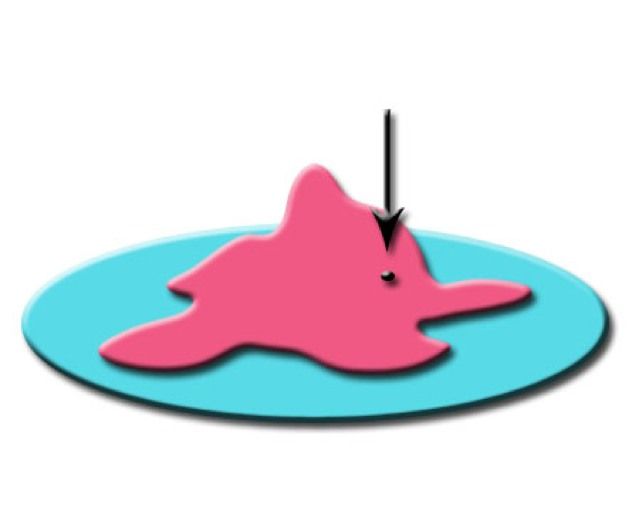

9. Brouwer’s Fixed Point Theorem

This theorem belongs to a branch of mathematics called topology and was proved by Luitzen Brouwer. Although its formal statement is highly abstract, its real-world consequences are intriguing. Imagine having a picture, such as the Mona Lisa, and making a copy of it. You can alter the copy in almost any way (shrink it, rotate it, crumple it) as long as you don’t tear it. Brouwer’s Fixed Point Theorem states that when you place this altered copy on top of the original, so that it lies entirely within the original’s outline, at least one point on the copy sits directly above the same point on the original. This could be part of Mona’s eye, ear, or perhaps her smile, but it is guaranteed to be there.

The theorem also holds in three dimensions: picture a glass of water. If you stir the water with a spoon, then however much you agitate it, Brouwer’s theorem ensures that once the water settles, at least one point in it ends up in the exact position it occupied before you started stirring (assuming the water moves continuously, with no splashing).
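The pictures and the glass of water are hard to simulate, but the one-dimensional version of the same idea is easy to try: any continuous function that maps the interval from 0 to 1 back into itself must leave some point where it found it. The sketch below hunts for that point by repeatedly halving the interval. The function name `find_fixed_point` and the choice of `math.cos` as the example are assumptions made for illustration.

```python
import math


def find_fixed_point(f, lo=0.0, hi=1.0, tol=1e-12):
    """Find x with f(x) == x for a continuous f that maps [lo, hi] into itself.

    Bisection on g(x) = f(x) - x: g(lo) >= 0 and g(hi) <= 0 always hold,
    so a point where g is zero stays trapped between lo and hi.
    """
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if f(mid) - mid >= 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


# cos maps [0, 1] into roughly [0.54, 1], which lies inside [0, 1].
x = find_fixed_point(math.cos)
print(x, math.cos(x))
```

The two printed numbers agree to many decimal places, which is the fixed point the theorem guarantees.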

8. Russell’s Paradox

At the beginning of the 20th century, many mathematicians became fascinated by a new area of math known as Set Theory (which we will touch on later in this list). Simply put, a set is a collection of objects. The prevailing belief was that virtually anything could be categorized as a set: the set of all fruits or the set of all U.S. Presidents were both considered valid examples. Furthermore, sets could contain other sets (for example, the set of all sets mentioned earlier). However, in 1901, the eminent mathematician Bertrand Russell made a startling discovery that challenged this thinking—specifically, that not everything could be considered a set.

Russell then proposed a thought experiment involving a set that includes all sets that do not contain themselves. For example, the set of all fruits doesn’t contain itself (debate continues on whether tomatoes are fruits), so it can be part of Russell’s set, along with many others. But what happens with Russell’s own set? If it doesn’t contain itself, it qualifies for membership and so must be included; but once it is included, it contains itself, and so must be excluded. The result is an endless loop of inclusion and exclusion. This paradox led to a complete rethinking of Set Theory, one of the most crucial branches of mathematics today.
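The loop can be reproduced in a few lines of code. In this rough sketch a "set" is modeled as a yes-or-no membership test, which is a simplification chosen for illustration rather than how set theory is actually formalized; the names `fruits`, `russell`, and `is_member_of_russell` are hypothetical.

```python
def fruits(x):
    """The set of all fruits, as a membership test."""
    return x in {"apple", "pear", "tomato"}


def russell(s):
    """The 'set' of all sets that do not contain themselves."""
    return not s(s)


def is_member_of_russell(s):
    """Return True or False, or None if the question never settles."""
    try:
        return russell(s)
    except RecursionError:
        # Asking the question keeps triggering the same question again.
        return None


print(is_member_of_russell(fruits))   # the set of fruits is not itself a fruit
print(is_member_of_russell(russell))  # Russell's own set
```

For the set of fruits the answer comes back immediately. For Russell's own set, Python keeps asking the same question until it gives up, which mirrors the endless inclusion and exclusion described above.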

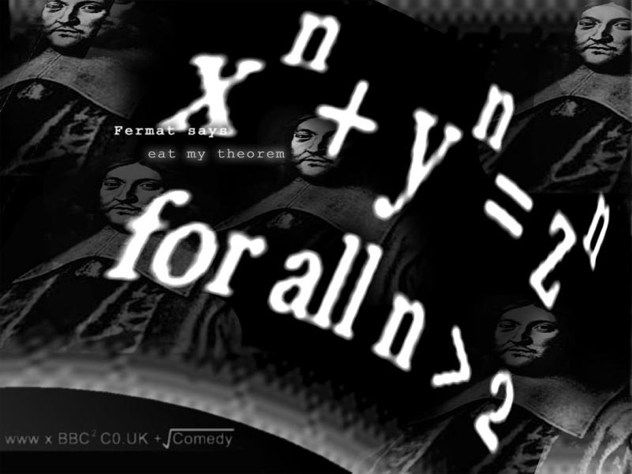

7. Fermat’s Last Theorem

Do you remember Pythagoras’ theorem from school? It deals with right-angled triangles and states that the sum of the squares of the two shorter sides is equal to the square of the longest side (x squared + y squared = z squared). Pierre de Fermat’s most famous theorem asserts that the same equation has no solutions once you replace the squares with any whole-number power greater than 2 (for example, x cubed + y cubed = z cubed can never hold), provided x, y, and z are positive integers.

As Fermat himself wrote: “I have discovered a truly marvelous proof of this, which this margin is too narrow to contain.” Too bad, because although Fermat posed the problem in 1637, it remained unsolved for many years. In fact, it wasn’t until 1995—358 years later—that a mathematician named Andrew Wiles proved it.
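A computer cannot prove the theorem by checking cases, since there are infinitely many, but a quick search shows the pattern it describes. The following sketch looks for positive integers satisfying the equation for a given power; the function name `solutions` and the search limits are arbitrary choices for illustration.

```python
def solutions(n, limit):
    """All (x, y, z) with 1 <= x <= y, z <= limit and x**n + y**n == z**n."""
    # Map each n-th power back to its base so lookups are instant.
    powers = {z**n: z for z in range(1, limit + 1)}
    found = []
    for x in range(1, limit + 1):
        for y in range(x, limit + 1):
            z = powers.get(x**n + y**n)
            if z is not None:
                found.append((x, y, z))
    return found


print(solutions(2, 20))   # squares: Pythagorean triples such as (3, 4, 5)
print(solutions(3, 100))  # cubes: nothing turns up
print(solutions(4, 100))  # fourth powers: nothing turns up
```

Squares produce plenty of hits, while the higher powers produce none within the search range, just as Fermat claimed and Wiles proved for all cases.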

6. The Doomsday Argument

It’s reasonable to assume that most readers of this article are human beings. As humans, this entry might hit home a bit more: mathematics can be used to predict when our species might die out. At least, that's what probability suggests.

The Doomsday Argument (which has been around for about 30 years and has been rediscovered multiple times) essentially predicts that humanity’s days may be numbered. One version of this argument, proposed by astrophysicist J. Richard Gott, is surprisingly straightforward: if we treat the human species' entire existence as a timeline from birth to death, we can figure out where we stand on that timeline.

Since the current moment is just a random point in the history of humanity, we can say with 95% confidence that we are somewhere within the middle 95% of that timeline. If we estimate that humanity is exactly 2.5% into its existence, we end up with the longest possible lifespan. Conversely, if we estimate we’re 97.5% through, it results in the shortest possible lifespan. This approach gives us a range of possible outcomes for the human race’s expected longevity. According to Gott, there's a 95% chance that humanity will cease to exist anywhere between 5100 years and 7.8 million years from now. So, humanity, better start ticking off those bucket list items.
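The arithmetic behind that range is short enough to check. If we are a fraction f of the way through the timeline and humanity has existed for some number of years so far, the time remaining is the time so far multiplied by (1 - f) / f. The sketch below assumes humanity has existed for roughly 200,000 years; that starting figure is not given above and is used here only because it reproduces the quoted range. The function name `gott_interval` is likewise hypothetical.

```python
def gott_interval(past_years, confidence=0.95):
    """Range of remaining years, given years elapsed and a confidence level."""
    tail = (1 - confidence) / 2  # 0.025 at 95% confidence
    # If we are already 97.5% of the way through, little time remains.
    shortest = past_years * tail / (1 - tail)
    # If we are only 2.5% of the way through, a great deal remains.
    longest = past_years * (1 - tail) / tail
    return shortest, longest


# Assumed input: about 200,000 years of human existence so far.
shortest, longest = gott_interval(200_000)
print(round(shortest), round(longest))
```

At 95% confidence the two multipliers work out to 1/39 and 39, so the estimate is extremely sensitive to the assumed starting figure.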

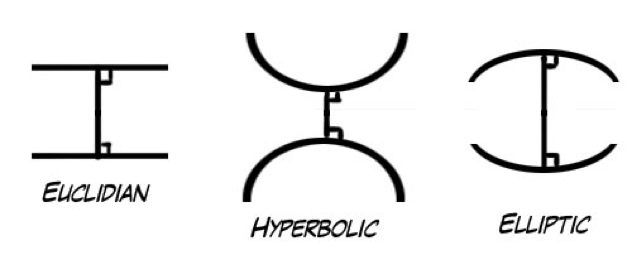

5. Non-Euclidean Geometry

You might remember geometry from school, a branch of mathematics where sketching shapes and lines was part of the fun. The most familiar kind is Euclidean geometry, which is based on five basic, self-evident principles, or axioms. It deals with the geometry of points and lines that we can easily draw on a blackboard. For a long time, Euclidean geometry was seen as the only legitimate form of geometry.

However, the so-called self-evident truths that Euclid established more than 2000 years ago weren’t so self-evident to everyone. One particular axiom, known as the parallel postulate, didn’t sit well with many mathematicians. For centuries, various thinkers tried to derive it from the other four axioms, without success. In the early 19th century, a bold new idea emerged: instead of trying to prove the fifth axiom, mathematicians simply replaced it with a different one. This led to the creation of a new form of geometry called hyperbolic (or Bolyai-Lobachevskian) geometry, which completely shifted the scientific worldview and paved the way for many forms of non-Euclidean geometry. One major branch that emerged from this is Riemannian geometry, which plays a crucial role in Einstein’s Theory of Relativity (interestingly, our universe doesn’t follow Euclidean geometry!).

4. Euler’s Formula

Euler’s Formula is one of the most influential equations in mathematics, and its brilliance comes from one of history’s greatest mathematicians, Leonhard Euler. Over the course of his life, Euler published over 800 papers, many of them written while he was blind.

At first glance, the equation looks simple: e^(i*pi) + 1 = 0. (Strictly speaking, this is known as Euler’s identity, the special case x = pi of Euler’s formula e^(i*x) = cos x + i*sin x.) For those unfamiliar, e and pi are constants that show up in numerous unexpected contexts, while i represents the imaginary unit, the number equal to the square root of -1. The beauty of the equation lies in its ability to merge five of the most important constants in mathematics (e, i, pi, 0, and 1) into a single, elegant statement. Physicist Richard Feynman referred to Euler’s formula as 'the most remarkable formula in mathematics,' and its significance lies in how it ties together multiple branches of math.
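You can check the equation numerically with Python's standard `cmath` module. The sketch below also tests the more general relation e^(i*x) = cos x + i*sin x, of which the equation above is the case x = pi. Because computers store pi and e only approximately, the result is a number extremely close to zero rather than exactly zero; the sample angle 0.75 is an arbitrary choice.

```python
import cmath
import math

# e^(i*pi) + 1 should be zero, up to floating-point rounding.
residue = cmath.exp(1j * math.pi) + 1
print(abs(residue))

# The general relation, tried at an arbitrary angle.
x = 0.75
left = cmath.exp(1j * x)
right = complex(math.cos(x), math.sin(x))
print(cmath.isclose(left, right))
```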

3. Gödel’s Incompleteness Theorems

In 1931, Austrian mathematician Kurt Gödel shook the foundations of mathematics with two groundbreaking theorems. Together, these theorems proved a rather unsettling reality: math is inherently incomplete and always will be.

Without delving into the complex details, Gödel proved that within any consistent formal system powerful enough to describe the arithmetic of the natural numbers, there are true statements that cannot be proven within that system itself. In essence, he demonstrated that no such axiomatic system can be both consistent and complete, overturning the hope that all of mathematics could be derived from a single set of axioms. There will never be a comprehensive, closed system that encapsulates all of mathematics, only systems that continue to expand as we attempt to make them complete.

2. Different Levels of Infinity

Infinity is already a challenging concept to grasp. Humans were not built to fully comprehend the idea of the infinite, which is why mathematicians have always approached Infinity with great care. It wasn’t until the second half of the 19th century that Georg Cantor introduced Set Theory (remember Russell’s paradox?), a field of mathematics that enabled him to explore the true nature of Infinity. What he uncovered was nothing short of astounding.

It turns out that every time we think we've reached Infinity, there's always a larger kind of infinity beyond it. The smallest level of infinity is the set of whole numbers (1, 2, 3…), which is called a countable infinity. Using brilliant reasoning, Cantor showed that there exists a second level of infinity: the infinity of all Real Numbers (such as 1, 1.001, 4.1516… essentially any number you can think of). This type of infinity is uncountable, meaning that even if you had infinite time, you couldn’t list all the Real Numbers without skipping some. But that’s not all—there are infinitely many more levels of uncountable infinity. How many? An infinite number, naturally.
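The reasoning usually credited to Cantor here is known as the diagonal argument, and a toy version fits in a few lines. Given any list of digit sequences, build a new sequence that differs from the first entry in its first digit, from the second entry in its second digit, and so on; the new sequence cannot appear anywhere in the list. The sketch below uses a short, finite, made-up list of 0s and 1s, whereas the real argument applies the same trick to an infinite list of infinitely long decimal expansions.

```python
def diagonal_escape(rows):
    """Build a 0/1 sequence that differs from rows[i] at position i, for every i."""
    return [1 - rows[i][i] for i in range(len(rows))]


# A hypothetical attempt at a "complete" list of sequences.
listing = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 1, 0, 1],
    [1, 0, 1, 0],
]

missing = diagonal_escape(listing)
print(missing)             # [1, 0, 1, 1]
print(missing in listing)  # False
```

However the list is arranged or extended, the same construction always produces a sequence the list has skipped.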

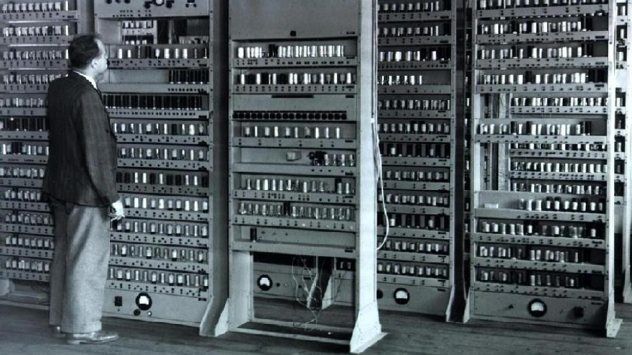

1. Turing’s Universal Machine

We live in a world dominated by computers. As you read this list, you’re engaging with a computer! It's clear that computers are among the most significant inventions of the 20th century, but what might surprise you is that the very foundation of computers lies in the world of theoretical mathematics.

Mathematician (and World War II codebreaker) Alan Turing introduced a conceptual device known as the Turing Machine. This machine functions like a basic computer: it reads and writes symbols on an infinite tape (for example, 0, 1, and a blank space), one cell at a time, following a fixed set of instructions. These instructions could involve changing a 0 to a 1 and moving left, or filling a blank and moving right. Through these simple operations, a Turing Machine can carry out any computation that can be spelled out as a step-by-step procedure.
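A machine like this is simple enough to imitate in a short program. The sketch below is a minimal simulator, not Turing's own formulation: the rule format, the state names `start` and `halt`, and the example machine `FLIP` (which swaps every 0 and 1 on the tape) are all assumptions made for illustration.

```python
from collections import defaultdict

BLANK = " "


def run(rules, tape, state="start", max_steps=10_000):
    """Run a Turing machine and return the final tape contents.

    rules maps (state, symbol) -> (symbol_to_write, "L" or "R", next_state).
    The tape is unbounded: cells that were never written read as BLANK.
    """
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    head = 0
    for _ in range(max_steps):  # safety cap, since some machines never halt
        if state == "halt":
            break
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += 1 if move == "R" else -1
    return "".join(cells[i] for i in sorted(cells)).strip()


# Example machine: flip each bit while moving right, stop at the first blank.
FLIP = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", BLANK): (BLANK, "R", "halt"),
}

print(run(FLIP, "0110"))  # 1001
```

Different rule tables give different machines, and the `run` function that executes any of them is a small-scale hint of the universal machine idea.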

Turing expanded on this idea to create the concept of a Universal Turing Machine, a machine capable of simulating any Turing Machine with any given input. This concept is essentially the foundation of a stored-program computer. With just math and logic, Turing laid the groundwork for computer science in 1936, roughly a decade before the first stored-program computers were built.