It’s undeniable: artificial intelligence is transforming our world. But are these transformations beneficial? In recent years, deepfakes have surged in popularity. Advanced algorithms enable users to alter photos and videos, seamlessly swapping one person’s face with another.

Deepfake technology has found a wide array of applications, many of which are positive. For instance, Star Wars enthusiasts have edited scenes from the 2018 film Solo, making lead actor Alden Ehrenreich look more like a young Harrison Ford. At Christmas 2020, the UK’s Channel 4 aired a digitally altered version of the Queen’s speech in which she made witty remarks about the year’s events and even danced on her desk for a festive touch.

But deepfake AI also has a darker side. Disgruntled individuals can now create fake revenge porn with minimal effort. Politicians have used deepfakes to spread fake news and misinformation. Meanwhile, cybercriminals have exploited these algorithms to defraud business executives of vast sums. Here are ten of the most bizarre and alarming examples of this manipulative technology in action.

10. A Jealous Mother Creates Explicit Images of Her Daughter’s Cheerleading Competitors

Teenagers often find their parents difficult, but Raffaela Spone took parental involvement to another level. This Pennsylvania mom allegedly fabricated compromising photos of her daughter’s cheerleading rivals in a spiteful bid to get them kicked off the team. Using images taken from their social media accounts, she produced fake content depicting members of the Victory Vipers naked, drinking, and smoking.

Spone’s scheme unraveled when the families of several targeted girls told police they had been receiving threatening texts from an unidentified number. Authorities traced the number to a website selling phone numbers, and from there to an IP address that identified Spone as the sender. Investigators believe her daughter was unaware of her mother’s alleged misconduct.

Spone now faces charges for harassment and sending abusive messages to team members, their families, and the gym’s owners.

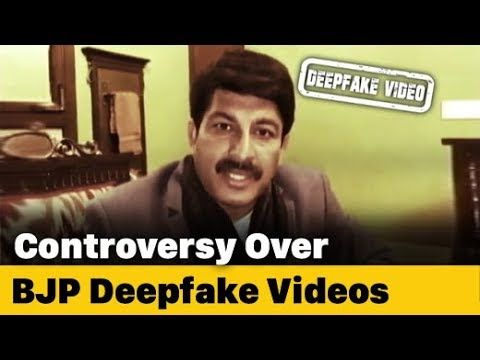

9. Deepfakes in the Delhi Elections

Politicians are no strangers to deceit, and in an age of fake news and post-truth politics, one politician turned to deepfakes to gain an edge in an election campaign.

As Delhi residents geared up for the 2020 elections, two videos went viral on WhatsApp. In both, a prominent politician appeared to criticize the Delhi administration: BJP leader Manoj Tiwari was shown denouncing his rival, Arvind Kejriwal, in English in one clip and in the widely spoken Haryanvi dialect in the other. However, only one of the videos was authentic.

Tiwari collaborated with a communications firm to produce politically charged deepfakes. He delivered a brief speech in English, which was then altered by tech specialists to make it appear as though he was speaking Haryanvi. The manipulated video reached approximately 15 million viewers, leaving many feeling misled.

Following the incident, experts cautioned that society might be heading toward a future where online content becomes entirely untrustworthy. The rampant spread of fake news in India has already been dubbed a “public health crisis.”

8. Tom Cruise’s TikTok Deepfake Saga

In 2021, a deepfake video surfaced on TikTok, depicting Tom Cruise tripping in his luxurious home. Additional clips emerged, showing the Hollywood star playing golf, enjoying a lollipop, and performing a magic trick.

None of the videos were genuine. Thankfully, their creator was not trying to fool anyone: the account behind the clips, DeepTomCruise, was open about the fact that they were fabricated. Even so, the footage was so convincing that some viewers doubted it was fake. A few even theorized that the real Tom Cruise was pretending to be a deepfake as part of an elaborate social media prank.

At the time of writing, the DeepTomCruise account has far more followers than Cruise’s official account, which has never posted a video. The real Tom Cruise has around 35,000 followers on TikTok, while the deepfake account has amassed nearly a million.

7. Cybercriminals Swindle $240,000 from a CEO

In films, heists are often depicted as thrilling adventures filled with risky maneuvers and dramatic stunts. However, with the advent of deepfake technology, cybercriminals can now steal vast sums of money with just a phone call.

In March 2019, scammers phoned the CEO of a UK-based energy firm, impersonating his German superior. They used AI to replicate the boss’s subtle German accent. Believing the call was genuine, the CEO followed instructions to transfer $240,000 to a Hungarian supplier for an urgent transaction. Only when the callers requested a second payment did he grow suspicious and realize he had been defrauded.

Experts suggested that this incident might mark the first known use of artificial intelligence in a criminal heist, although earlier cases may simply have gone unreported or unnoticed. Tech specialists had long warned about the risks of AI-driven attacks. Such scams represent a growing threat to businesses, prompting cybersecurity companies to develop tools to combat deepfake-related fraud.

6. Avatarify: The Deepfake Zoom Filter

Video conferencing platforms like Zoom and Skype surged in popularity during the pandemic, but an endless stream of virtual meetings can become monotonous. To inject some fun, developers created a deepfake filter for video calls. Avatarify lets users take on another person’s appearance by replacing their own face on screen with someone else’s. Its creator, Ali Aliev, once amused his colleagues by impersonating Elon Musk during a work Zoom call.

While Avatarify is innovative, it isn’t entirely convincing, especially since it doesn’t alter the user’s voice. However, as technology advances, this could change. Several companies are making strides in creating realistic audio deepfakes. In the near future, it might be possible to convincingly pose as a celebrity during a Zoom call, raising serious concerns for those being impersonated.

5. The Drunk Nancy Pelosi Video

In May 2019, a manipulated video of Nancy Pelosi spread rapidly online. The footage, noticeably slowed down, made the House Speaker appear to slur her words as if intoxicated. It showed her at a Center for American Progress event, accusing then-President Donald Trump of a “cover-up.”

Rudy Giuliani, President Trump’s lawyer and a former mayor of New York City, was among those who shared the video on social media, and the clip garnered millions of views. While its creator remains unknown, it emerged amid a wave of attempts to portray Pelosi as mentally unfit or as struggling with alcohol. Just days earlier, the president had shared another video that purported to show Pelosi fumbling her words during a speech.

4. Mumbai Student Alters Teen Girl’s Face into Explicit Video

In 2019, Mumbai police arrested a 20-year-old college student for blackmailing a teenage girl with fabricated pornographic content. The student had superimposed her face onto an explicit video, then contacted her through an anonymous Instagram account and threatened to release it online. The girl was coerced into sending the video to another user. Reports indicate he targeted two other young women in a similar manner.

The victim reported the incident to the Lokmanya Tilak Marg Police Station, leading to the perpetrator’s identification and arrest.

Yet another reason why social media is toxic.

3. Non-consensual Explicit Content

Non-consensual explicit material is the most prevalent misuse of deepfake technology. The trend first surfaced on Reddit in late 2017, when users began taking adult videos and swapping the performers’ faces with those of celebrities, predominantly women. Many were stunned to discover that these videos were produced not by high-end studios but with freely available machine learning tools. Early victims of this practice included Gal Gadot, Scarlett Johansson, Taylor Swift, and Maisie Williams.

Since then, deepfake pornography has grown more sophisticated, with developers incorporating 3D digital avatars. Creators can now build realistic models, manipulate them into any position, program them to perform sexual acts, and then map the face of a real person, such as Margot Robbie or Emilia Clarke, onto them.

In 2019, developers released the app DeepNude, which used neural networks to generate nude images from photos of clothed women. Users could upload an image, and the AI would strip away the clothing. However, the app was short-lived. Just days after its launch, its anonymous creator, “Alberto,” shut it down.

As expected, the rise of deepfake pornography has sparked numerous ethical concerns. Danielle Citron, who testified before Congress about the dangers of AI-generated explicit content, described the technology as a “violation of sexual privacy.”

Carrie Goldberg, a lawyer specializing in revenge porn cases, shares this criticism. She told reporters, “Anyone claiming the internet isn’t real life or that virtual actions are harmless is making excuses for unethical behavior. While creating a bot to simulate assault in VR isn’t the same as a physical attack, both are equally wrong and immoral.”

2. Cybercriminals’ Failed Attempt to Defraud a Tech Firm

In June 2020, cybercriminals attempted to deceive a tech company employee using audio deepfakes. The employee received a suspicious voicemail from someone mimicking the CEO’s voice, requesting “urgent help to close a critical business deal.”

Luckily, the employee sensed something was amiss and didn’t fall for the scam. Investigators later confirmed the voicemail was an AI-generated deepfake. The voice lacked natural speech patterns and background noise, revealing it as fake. The perpetrators remain unidentified.

On the flip side, deepfake audio can also be used purely for entertainment.

1. British Activists Targeted by a Fake Journalist

Two Palestinian rights activists were stunned to discover they had been labeled terrorist sympathizers. The accusation came from a supposed British journalist named Oliver Taylor. Online profiles described Taylor as a politically engaged university student with a Jewish background who wrote about Israel and anti-Semitism. His work had reportedly been published in The Jerusalem Post and The Times of Israel.

In an article for The Algemeiner, a Jewish newspaper, Taylor accused married couple Mazen Masri and Ryvka Barnard of being “known terrorist sympathizers.” Masri, a lecturer at City Law School in London, had previously sued Israeli tech firm NSO for its involvement in a Mexican phone-hacking scandal. The couple was baffled by the sudden accusations and questioned why a student would target them.

The twist? Oliver Taylor doesn’t exist. The university he claimed to attend has no record of him, and forensic analysis showed his profile picture was a deepfake. The writer’s true identity remains unknown, and the fake images are untraceable. The Algemeiner stated that Taylor contacted them via email and never requested payment. Both The Algemeiner and The Times of Israel removed his articles, but some remain online, including those published in The Jerusalem Post and Arutz Sheva.