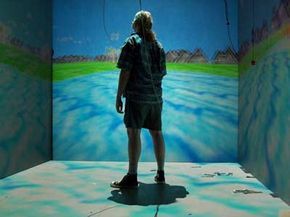

A virtual reality CAVE display projects images onto the floor, walls, and ceiling to create a fully immersive experience.

Image courtesy of Dave Pape

What comes to mind when you hear the term virtual reality (VR)? Do you picture someone in a bulky helmet connected to a computer with a thick cable? Do poorly rendered pterodactyls appear in your imagination? Does the idea bring to mind Neo and Morpheus navigating the Matrix? Or do you cringe at the term, hoping it fades away?

If you fall into that last category, you're probably a computer scientist or engineer. Many professionals in these fields now shy away from the term 'virtual reality,' even while working on technologies we commonly associate with it. These days, 'virtual environment' (VE) is more frequently used to describe what the public recognizes as virtual reality. For the purposes of this article, we will use both terms interchangeably.

Despite the differences in terminology, the underlying idea remains unchanged: using computer technology to generate a simulated, three-dimensional environment that a user can interact with and explore as if physically present in that space. Scientists, engineers, and theorists have developed numerous devices and applications to make this a reality. While opinions vary on what exactly qualifies as a genuine VR experience, it should generally incorporate the following elements:

- Three-dimensional images that seem life-sized from the user's viewpoint

- The ability to track the user's movements, particularly head and eye motion, and adjust the display to match the change in perspective accordingly
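As a rough illustration of the second element, the sketch below turns a tracked head orientation into the direction the virtual camera should face. The function name `view_direction`, the yaw/pitch inputs, and the axis convention (y up, negative z straight ahead) are assumptions made for this example and do not come from any particular VR system.

```python
import math

def view_direction(yaw_deg, pitch_deg):
    """Return a unit vector for where the user is looking.

    Assumed convention (hypothetical): y is up, -z is straight ahead,
    positive yaw turns the head to the right, positive pitch tilts it up.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    x = math.sin(yaw) * math.cos(pitch)
    y = math.sin(pitch)
    z = -math.cos(yaw) * math.cos(pitch)
    return (x, y, z)

# Each time the tracker reports a new orientation, the renderer would
# recompute this vector and redraw the scene from the new viewpoint.
looking_right = view_direction(90.0, 0.0)
```

A real system would also track head position and usually works with quaternions or matrices rather than two angles, but the principle is the same: new tracker reading in, new viewpoint out.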

This article will explore the key features of virtual reality (VR), some of the technologies powering VR systems, various applications, potential concerns, and a brief overview of the field's history. Next, we will delve into expert definitions of virtual environments, beginning with the concept of immersion.

Virtual Reality Immersion

A VR system that enables the user to move freely in any direction

Image courtesy of VIRTUSPHERE

In a VR environment, a user experiences immersion, or the sensation of being fully inside and part of that world. The user can also interact with the environment in meaningful ways. The combination of immersion and interactivity is called telepresence. Computer scientist Jonathan Steuer defined telepresence as “the extent to which one feels present in the mediated environment, rather than in the immediate physical environment.” In other words, a successful VR experience makes you unaware of your physical surroundings and fully focused on your existence within the virtual world.

Jonathan Steuer identified two key aspects of immersion: depth of information and breadth of information. Depth of information refers to the quantity and quality of data conveyed to the user through sensory signals while interacting in a virtual space. For the user, this could involve factors like display resolution, environmental graphics complexity, and audio system quality. Steuer describes breadth of information as the “number of sensory dimensions presented at once.” An experience with high breadth of information engages all of your senses. While most virtual environments focus on visual and audio stimuli, many researchers and engineers are now exploring ways to add a sense of touch. Systems that provide tactile feedback and interaction are known as haptic systems.

For immersion to work, a user must be able to navigate through a life-sized virtual world, with perspectives shifting smoothly as they move. If the virtual environment consists of a lone pedestal in the center of a room, the user should be able to view it from any angle, and the viewpoint should adjust naturally based on where the user is looking. Dr. Frederick Brooks, a pioneer in VR technology and theory, asserts that displays need to deliver a frame rate of at least 20 to 30 frames per second to provide a realistic experience.
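To put that frame rate in concrete terms, the short sketch below converts frames per second into the time available to draw each frame. The helper name `frame_budget_ms` is made up for this example; the arithmetic is simply 1000 milliseconds divided by the frame rate.

```python
def frame_budget_ms(frames_per_second):
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / frames_per_second

for fps in (20, 30):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

At 20 frames per second the system has 50.0 ms to produce each image, and at 30 frames per second it has about 33.3 ms.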

Virtual reality has been referred to by various other names aside from virtual environments. Other terms for VR include cyberspace (coined by science fiction writer William Gibson), artificial reality, augmented reality, and telepresence.

The Virtual Reality Environment

The sensory output of a virtual environment must adjust dynamically as the user explores. If the system includes 3-D sound, it should shift its orientation naturally as the user moves. For a true sense of immersion, sensory feedback must be consistent. For example, in a perfectly still environment, a user wouldn’t expect to feel intense winds. Likewise, if the environment places you in the middle of a hurricane, you shouldn’t feel a light breeze or perceive the scent of roses.
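One way to picture how sound should shift as the user moves is to compute where a sound source sits relative to the direction the head is facing, then balance the left and right channels accordingly. The sketch below is a deliberately crude stand-in for real 3-D audio: the function names, the compass-style bearings, and the constant-power panning rule are all assumptions for illustration, and sources behind the listener are simply clamped to the nearest side.

```python
import math

def relative_bearing(head_yaw_deg, source_bearing_deg):
    """Angle of the source relative to where the head points, in (-180, 180]."""
    diff = (source_bearing_deg - head_yaw_deg) % 360.0
    if diff > 180.0:
        diff -= 360.0
    return diff

def stereo_gains(head_yaw_deg, source_bearing_deg):
    """Constant-power pan: return (left_gain, right_gain)."""
    rel = relative_bearing(head_yaw_deg, source_bearing_deg)
    # Simplification: clamp to -90 (hard left) .. +90 (hard right).
    rel = max(-90.0, min(90.0, rel))
    pan = math.radians((rel + 90.0) / 2.0)  # 0 .. pi/2
    return (math.cos(pan), math.sin(pan))

# Source due east, user facing north: sound comes from the right.
facing_north = stereo_gains(0.0, 90.0)
# User turns to face the source: both ears now hear it equally.
facing_source = stereo_gains(90.0, 90.0)
```

The point of the example is the update rule rather than the audio quality: every change in head orientation changes the relative bearing, and the output must follow it.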

The delay between a user's action and its reflection in the virtual environment is known as latency. Latency typically refers to the gap between when a user moves their head or eyes and when the viewpoint changes, but it can also apply to delays in other sensory outputs. Research with flight simulators shows that humans can detect latency greater than 50 milliseconds. When latency is noticeable, it disrupts the sense of immersion and makes the user aware of the artificial nature of the environment.
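Latency accumulates across every stage between the user's movement and the updated image. The sketch below adds up a set of per-stage delays and compares the total with the 50-millisecond figure mentioned above. The stage names and their values are invented for illustration and are not measurements of any real system.

```python
DETECTABLE_LATENCY_MS = 50.0  # detection threshold cited in the text

def total_latency_ms(stage_delays_ms):
    """Sum the delay contributed by each stage of the pipeline."""
    return sum(stage_delays_ms.values())

def is_noticeable(stage_delays_ms, threshold_ms=DETECTABLE_LATENCY_MS):
    """True if the end-to-end delay exceeds the detection threshold."""
    return total_latency_ms(stage_delays_ms) > threshold_ms

# Hypothetical per-stage delays in milliseconds.
pipeline = {"tracker": 10.0, "simulation": 8.0, "render": 20.0, "display": 16.7}

print(f"total: {total_latency_ms(pipeline):.1f} ms")
print("noticeable" if is_noticeable(pipeline) else "not noticeable")
```

With these made-up numbers no single stage looks slow, yet together they exceed the threshold, which is why developers have to budget latency across the whole system.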

An immersive experience is compromised if the user becomes conscious of their real surroundings. Truly immersive experiences make the user forget the physical world, so that the computer itself seems to disappear. To achieve full immersion, developers need to create more natural input methods for users. As long as a user is aware of the device used for interaction, true immersion cannot be achieved.

Next, we will explore the other aspect of telepresence: interactivity.

Passive haptics are one approach VE developers use to enhance interactivity. Passive haptics involve real physical objects in a space being mapped to virtual counterparts in the virtual environment. Users wear an HMD or similar portable display in the physical space. When they look at a physical object, they see its virtual counterpart in their display. When they approach the object and attempt to touch it, they feel the real object. Any actions the user takes with the real object translate to the virtual one within the virtual space.
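For passive haptics to work, the system needs a fixed relationship between positions in the room and positions in the virtual world, so that a real table and its virtual counterpart line up. A minimal sketch of such a mapping follows. It assumes a rotation about the vertical axis followed by a translation; the function name and parameters are hypothetical.

```python
import math

def physical_to_virtual(point, origin_offset, rotation_deg=0.0):
    """Map a tracked (x, y, z) point in the room to virtual coordinates.

    Assumed registration: rotate about the vertical (y) axis, then
    translate by origin_offset. Units are the same in both spaces.
    """
    x, y, z = point
    a = math.radians(rotation_deg)
    xr = x * math.cos(a) + z * math.sin(a)
    zr = -x * math.sin(a) + z * math.cos(a)
    ox, oy, oz = origin_offset
    return (xr + ox, y + oy, zr + oz)

# A physical prop 1 m to the side of the room origin appears at the
# matching spot in a virtual world whose origin is shifted by (10, 0, 5).
virtual_prop = physical_to_virtual((1.0, 0.0, 0.0), (10.0, 0.0, 5.0))
```

Because the same mapping is applied to the user's tracked hands and head, reaching for the virtual object brings the hand to the real one.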

In VR systems, swimming doesn’t refer to entering a pool but to the effect of latency within the virtual space. If you turn your head in a VE and notice the viewpoint doesn’t change immediately, you are experiencing swimming. This can be distracting and may cause motion sickness, also known as simsickness or cybersickness in VR circles.

Virtual Reality Interactivity

DisneyQuest’s Cyber Space Mountain Capsule

Photo courtesy of Sue Holland

While immersion in a virtual environment is essential, true involvement requires an element of interaction. Early applications built on the technology common in today's VE systems offered a relatively passive experience. Users would sit in a motion chair, wear a head-mounted display (HMD), and watch a pre-recorded film, all while being exposed to simulated stimuli like wind through air jets. Although immersive, these experiences lacked interactivity, limiting the user’s control to simply shifting their viewpoint. The user’s journey was predetermined and unchangeable.

Modern virtual roller coasters use similar technology. At DisneyQuest in Orlando, Florida, guests can design their own roller coaster and then ride their virtual creation in a simulator. While the experience is highly immersive, there is no interaction beyond the initial design phase, so it doesn't qualify as a true virtual environment.

Interactivity in virtual environments relies on multiple factors. Steuer identifies three key factors: speed, range, and mapping. Speed refers to how quickly a user's actions are incorporated into the computer model and made perceivable. Range concerns how many possible outcomes can result from a user's specific actions. Mapping is the system’s ability to produce responses that feel natural and realistic in reaction to the user's input.

Navigating within a virtual environment is a form of interactivity. When a user can control their movement within the space, the experience becomes interactive. Most virtual environments include additional forms of interaction, since users tend to grow bored after just a few minutes of exploring. Computer scientist Mary Whitton emphasizes that poorly executed interaction can severely diminish immersion, while engaging users more deeply can enhance it. When a virtual environment is stimulating and captivating, users are more likely to suspend their disbelief and become fully immersed in the experience.

True interactivity also means the ability to alter the virtual environment. A well-designed virtual world will react to the user’s actions in ways that make sense within that specific environment, even if those changes would not make sense in the real world. If the environment shifts in erratic, unpredictable ways, it can disrupt the user's feeling of being truly present within the virtual space.

Next, we’ll explore the different types of hardware used in virtual environments.

Developers have found that users experience a stronger sense of telepresence when interactions are straightforward and enjoyable, even if the virtual environment is not highly realistic. On the other hand, highly detailed environments that offer little to no interactivity tend to cause users to lose interest much more quickly.

The Virtual Reality Headset

The Nintendo Power Glove, once a staple in virtual reality gaming, is pictured here.

Photo used under the

Today, most virtual environment systems operate using standard personal computers. These PCs are powerful enough to develop and run the software required to generate virtual worlds. Graphics are typically processed by high-performance graphics cards, which were initially designed for the video gaming industry. The same graphics card that powers games like World of Warcraft is likely the one rendering visuals for advanced virtual environments.

Virtual environment systems require a way to present images to the user. Many of these systems use head-mounted displays (HMDs), which are headsets containing two monitors—one for each eye. These displays create a stereoscopic effect, offering a sense of depth. Early HMDs used cathode ray tube (CRT) monitors, which were bulky but provided high resolution and quality, or liquid crystal display (LCD) monitors, which were more affordable but lacked the quality of CRTs. Today, LCD technology has improved significantly in terms of resolution and color richness, making it more common than CRTs.
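The stereoscopic effect comes from rendering the scene twice, once from each eye's position. As a hedged sketch, the code below derives two camera positions from a single tracked head position. The function name, the use of a "right" direction vector, and the default eye separation of 0.064 m (an assumed typical adult value) are illustrative choices, not details taken from any specific headset.

```python
def eye_positions(head_pos, right_vec, ipd_m=0.064):
    """Return (left_eye, right_eye) positions for stereo rendering.

    head_pos:  (x, y, z) of the point midway between the eyes.
    right_vec: unit vector pointing to the user's right.
    ipd_m:     distance between the eyes in metres (assumed default).
    """
    half = ipd_m / 2.0
    left = tuple(h - half * r for h, r in zip(head_pos, right_vec))
    right = tuple(h + half * r for h, r in zip(head_pos, right_vec))
    return left, right

# Head 1.7 m above the floor, user's right along the +x axis.
left_eye, right_eye = eye_positions((0.0, 1.7, 0.0), (1.0, 0.0, 0.0))
```

Each display in the HMD then shows the scene as seen from its own eye position, and the small difference between the two images is what the brain reads as depth.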

A data suit that enables user input for virtual environments.

Photo courtesy of Dave Pape

Some virtual environment systems project images onto the walls, floor, and ceiling of a room and are known as Cave Automatic Virtual Environments (CAVE). The first CAVE display, developed at the University of Illinois at Chicago, used rear projection to display visuals on the walls, floor, and ceiling of a small room. Users navigate a CAVE display while wearing special glasses that complete the illusion of moving through a virtual space. CAVE displays offer a wider field of view, which enhances immersion, and they allow multiple people to experience the environment simultaneously, though only one user’s perspective is tracked, with the others acting as passive observers. These systems are costly and require more physical space than other types.

Tracking systems are closely linked to display technology. These systems monitor the orientation of the user’s viewpoint, ensuring the computer sends the correct images to the visual display. Most systems require users to be connected by cables to a processing unit, limiting their movement. Developments in tracking technology tend to lag behind those in other virtual reality (VR) technologies, primarily because the demand for such systems is mostly driven by VR-specific applications. Without the broader needs of other industries, there's less incentive to develop innovative tracking solutions.

Input devices play a critical role in virtual reality (VR) systems. These devices range from simple controllers with a few buttons to advanced electronic gloves and voice recognition software. There is no universally accepted control system in the field, and VR researchers are constantly working to create more natural input methods to enhance the feeling of telepresence. Some of the most common types of input devices include:

- Joysticks

- Force balls/tracking balls

- Controller wands

- Datagloves

- Voice recognition

- Motion trackers/bodysuits

- Treadmills

Virtual Reality Games

The Wii controller in use

Photo courtesy

Researchers are also investigating the development of biosensors for use in virtual reality. A biosensor detects and interprets nerve and muscle activity, enabling a computer to track how a user moves in physical space and translate those movements into corresponding actions within a virtual environment. These biosensors can be attached directly to the user's skin or integrated into gloves or bodysuits. However, a challenge with biosensor suits is that they must be custom-tailored to each individual; otherwise, the sensors may not align properly with the user’s body.

Mary Whitton from UNC-Chapel Hill believes that the entertainment sector will be the primary driver behind the future development of virtual reality (VR) technology. In particular, the video game industry has significantly advanced graphics and sound technologies that can now be integrated into VR systems. One innovation that Whitton finds particularly fascinating is the Nintendo Wii's wand controller. This device is not only commercially available with some tracking capabilities, but it is also affordable and appeals to a broader audience, including people who don't typically engage with video games. Since tracking and input devices have traditionally lagged behind other VR technologies, this controller may mark the beginning of a new wave of technological advancements for VR systems.

Some developers envision the Internet evolving into a fully three-dimensional virtual space, where users can navigate virtual landscapes to access content and entertainment. Websites could transform into three-dimensional locations, allowing users to explore in a much more tangible way than ever before. To bring this vision to life, programmers have created several computer languages and web browsers, including:

- Virtual Reality Modeling Language (VRML) - the first three-dimensional modeling language designed for the Web.

- 3DML - a three-dimensional modeling language that allows users to visit a location (or website) through most browsers after installing a plug-in.

- X3D - the language that replaced VRML as the standard for creating virtual environments on the web.

- Collaborative Design Activity (COLLADA) - a format enabling file exchanges between three-dimensional programs.
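To give a feel for what one of these languages looks like, the sketch below uses Python's standard XML library to build a very small scene in X3D's XML encoding: a single coloured box. The element and attribute names (`X3D`, `Scene`, `Shape`, `Appearance`, `Material`, `Box`) are standard X3D nodes, but the helper name, the profile and version values, and the colour are choices made for this example. The output omits the XML declaration and DOCTYPE and has not been checked against a validator.

```python
import xml.etree.ElementTree as ET

def minimal_x3d_scene():
    """Build a one-box X3D scene and return it as an XML string."""
    root = ET.Element("X3D", {"profile": "Interchange", "version": "3.3"})
    scene = ET.SubElement(root, "Scene")
    shape = ET.SubElement(scene, "Shape")
    appearance = ET.SubElement(shape, "Appearance")
    # A reddish surface colour, given as red/green/blue values from 0 to 1.
    ET.SubElement(appearance, "Material", {"diffuseColor": "0.8 0.2 0.2"})
    # A cube measuring one unit along each axis.
    ET.SubElement(shape, "Box", {"size": "1 1 1"})
    return ET.tostring(root, encoding="unicode")

print(minimal_x3d_scene())
```

An X3D-capable viewer would be expected to load markup like this and let the user move around the box. The scene describes what exists, and the viewer handles rendering and navigation.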

However, many virtual environment experts would argue that without a head-mounted display (HMD), Internet-based systems don't qualify as true virtual environments. They miss essential elements of immersion, particularly in areas like tracking and providing life-size image displays.

Virtual Reality Applications

Using virtual therapy to address a patient's fear of flying.

Photo courtesy of Virtually Better, Inc.

Back in the early 1990s, the public’s introduction to virtual reality typically consisted of basic demos, where blocky characters were seen evading a crude pterodactyl on a primitive chessboard. While virtual reality continues to attract interest in entertainment, particularly in games and theatrical experiences, the truly fascinating applications of VR systems are emerging in other industries.

Architects now use virtual models to bring their building plans to life, allowing clients to ‘walk’ through structures before they’re even constructed. Clients can explore the interior and exterior, ask questions, or even propose modifications. Virtual models offer a much clearer sense of how it will feel to navigate the building compared to traditional miniature models.

Automakers have adopted virtual reality to create digital prototypes of new vehicles, allowing for thorough testing before producing any physical components. Designers can tweak the virtual model as needed without scrapping the entire creation, making the development process faster and more cost-effective.

Virtual environments play a crucial role in training programs across the military, space programs, and even in medical education. The military, in particular, has been a long-time advocate for VR technology. Training simulations range from vehicle operation to squad-level combat. Overall, VR-based training is not only safer but also more affordable in the long term compared to traditional methods. Soldiers who have undergone VR training have been found to perform just as effectively as those trained in conventional settings.

In the field of medicine, virtual environments are being leveraged to train staff on everything from complex surgical procedures to patient diagnostics. Surgeons have also embraced virtual reality to enhance both education and practice, using robotic devices to perform surgeries remotely. The first instance of robotic surgery took place in 1998 at a hospital in Paris. One of the main hurdles in using VR for robotic surgery is latency; even a slight delay can create an unnatural experience for the surgeon. Additionally, these systems must deliver highly accurate sensory feedback for optimal performance.

Virtual reality is also proving valuable in psychological therapy. Dr. Barbara Rothbaum from Emory University and Dr. Larry Hodges of the Georgia Institute of Technology were pioneers in using VR to treat individuals with phobias and other psychological conditions. They applied virtual environments as a type of exposure therapy, where patients are exposed to distressing stimuli under controlled circumstances. VR therapy has two major advantages over traditional exposure therapy: it's more convenient and patients are generally more open to it since they know they’re not dealing with real-world scenarios. Their work led to the creation of Virtually Better, a company that sells VR therapy systems to medical professionals across 14 countries.

Next, we’ll delve into some of the concerns and challenges surrounding virtual reality technology.

Virtual Reality Challenges and Concerns

Image courtesy NASA

The main challenges in virtual reality development are building better tracking systems, creating more intuitive ways for users to interact within virtual environments, and reducing the time it takes to build these virtual worlds. While some tracking system companies have been established since VR's early days, many others are short-lived and small-scale. Moreover, very few companies are dedicated to designing input devices specifically for VR. Developers often have to repurpose technology created for other industries and rely on the continued success of those companies. As for constructing virtual spaces, building a realistic environment can be time-consuming, with a team of developers potentially needing over a year to accurately replicate a real room.

Another hurdle for VE system developers is the challenge of ensuring good ergonomics. Many VR systems use hardware that either restricts a user's mobility or limits them through physical tethers. Poorly designed hardware can result in issues like balance problems, a reduced sense of immersion, or even cybersickness. Cybersickness symptoms can include disorientation and nausea, and it affects some people more than others. While some users can spend hours exploring virtual spaces without any issues, others may experience discomfort after just a few minutes.

Some psychologists are concerned about the psychological effects of immersion in virtual environments. Specifically, there’s concern that VR systems, especially those involving violence, could desensitize users, particularly if they take on the role of the aggressor. This has led to fears that VR entertainment could contribute to a generation of sociopaths. Others worry less about desensitization but more about the potential for addiction. There have been cases where gamers, deeply engaged in their virtual worlds, begin neglecting their real lives. As virtual environments become more engaging, the risk of addiction may increase.

An emerging issue involves virtual crimes. Determining what actions, like murder or sexual assault, constitute crimes in a virtual space is still a gray area. Authorities are uncertain at what point someone could be charged for actions that take place in a virtual environment. Research suggests that people can have real physical and emotional reactions to virtual stimuli, so it’s possible that victims of virtual attacks could experience genuine emotional trauma. This raises the question: can an attacker be held responsible for causing distress in the real world? We don’t have definitive answers yet.

In the following section, we will explore the history of virtual reality.

Mary Whitton explains that while advancements in technology and computing power are crucial, they don’t necessarily lead to faster production or smoother rendering of virtual environments. This is because developers often use increased computing resources to create more detailed and immersive worlds, which in turn requires more time to render.

Virtual Reality History

Photo credit: Atticus Graybill of Virtually Better, Inc.

The idea of virtual reality has existed for decades, though it only captured the public's attention in the early 1990s. Back in the mid-1950s, a cinematographer named Morton Heilig imagined a theater experience that would engage all of the audience's senses to make stories more immersive. In 1960, he developed a single-user console called the Sensorama, which featured a stereoscopic display, fans, odor emitters, stereo speakers, and a moving chair. He also invented a head-mounted television display, enabling users to watch 3-D television. Although users were passive viewers of films, many of Heilig’s ideas would later influence the development of virtual reality.

In 1961, engineers from Philco Corporation created the first head-mounted display (HMD), known as the Headsight. This device incorporated a video screen and a tracking system, which they connected to a closed-circuit camera system. Designed for high-risk scenarios, the HMD allowed users to remotely observe real-world environments and adjust the camera's angle by simply turning their heads. Bell Helicopter later used a similar HMD for helicopter pilots, linking it to infrared cameras mounted on the helicopters so that pilots could see clearly even in darkness.

In 1965, computer scientist Ivan Sutherland introduced the concept of the “Ultimate Display,” where users could immerse themselves in a virtual world as real as their physical surroundings. This groundbreaking idea served as a cornerstone for most developments in virtual reality. Sutherland’s vision included the following elements:

- A virtual world that feels lifelike to anyone observing, viewed through an HMD and enhanced by 3D sound and tactile sensations

- A computer system that sustains the world model in real-time

- The ability for users to interact with virtual objects in an intuitive, realistic manner

In 1966, Sutherland developed an HMD that was connected to a computer system, which generated the graphics displayed on the device (prior HMDs had only been connected to cameras). Since the device was too heavy to be worn comfortably, a suspension system was used to hold it up. The HMD was capable of showing stereo images, creating a sense of depth, and could track the user’s head movements, adjusting the field of view as the user looked around.

Virtual Reality Development

A BOOM Display used by NASA to simulate space

Image courtesy of NASA

Much of the research and development for virtual reality projects received funding from NASA, the Department of Defense, and the National Science Foundation. The CIA contributed $80,000 toward the research efforts of Ivan Sutherland. Initially, VR applications primarily focused on vehicle simulators, which were used in training programs. Since the flight experiences in simulators were similar, but not exactly like real flights, the military, NASA, and airlines introduced policies requiring pilots to wait at least a day between simulated and actual flights, to avoid any negative impact on their real-world performance.

For years, virtual reality technology stayed out of the public’s view, with the majority of development centered around vehicle simulations. That changed in 1984 when computer scientist Michael McGreevy began experimenting with VR technology to improve human-computer interface (HCI) design. HCI continues to play an essential role in VR research, and McGreevy’s work paved the way for the media to take notice of VR a few years later.

The term 'Virtual Reality' was coined by Jaron Lanier in 1987. In the 1990s, the media became fascinated with the concept of virtual reality, fueling widespread hype. Unfortunately, the hype led to unrealistic expectations about VR’s capabilities. As the public’s understanding caught up with the technology's limitations, interest in VR waned. The term virtual reality gradually fell out of favor, and today, VE developers avoid exaggerating the potential of VE systems or using the term 'virtual reality.'

Special thanks to Mary Whitton from the University of North Carolina at Chapel Hill for her invaluable help with this article.