Khronos projector

© 2010 Mytour.com

Computers have been in existence for over 50 years, but the way most people engage with them remains largely unchanged. The keyboards we use today have their origins in typewriters, a technology dating back nearly 150 years. In 1968, Douglas Carl Engelbart introduced a device that would later be known as the computer mouse [source: MIT]. The graphical user interface (GUI) has also been around for a significant time, with the Macintosh bringing it to the consumer market in 1984 [source: Utah State University]. Given the incredible advancements in computing power over the past five decades, it's surprising how little the basic interface has evolved.

In recent years, we've begun to see a shift away from the traditional keyboard-and-mouse setup. Touchscreen devices like smartphones and tablets, which have been around for over ten years, are bringing touch interfaces to a broader audience. We're also creating more compact computers, which require fresh approaches to user interfaces. After all, you wouldn't want a full-sized keyboard attached to your smartphone; it would completely ruin the experience.

Touchscreens have introduced new methods for interacting with computers. Early versions could only detect a single point of contact, meaning if you touched the screen with more than one finger, it wouldn't track your movements. Today, multitouch screens are common in many devices. Engineers have leveraged this technology to create gesture-based navigation, allowing users to carry out specific tasks with predefined motions. For instance, many touchscreen devices, like the Apple iPhone, let you zoom in on a photo by placing two fingers on the screen and spreading them apart, while pinching the fingers together zooms out on the image.
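The pinch gesture described above comes down to comparing how far apart two fingers are at the start and end of the motion. The following is a minimal sketch of that calculation, not the method any particular device uses; the function name `pinch_scale` and the coordinate format (pixel positions as `(x, y)` tuples) are assumptions made for illustration.

```python
import math


def pinch_scale(start_a, start_b, end_a, end_b):
    """Return the zoom factor implied by two fingers moving on a screen.

    Each argument is an (x, y) position. A result above 1.0 means the
    fingers spread apart (zoom in); below 1.0 means they pinched together
    (zoom out).
    """
    start_dist = math.dist(start_a, start_b)
    end_dist = math.dist(end_a, end_b)
    if start_dist == 0:
        raise ValueError("the two fingers must start at distinct points")
    return end_dist / start_dist


# Fingers start 100 pixels apart and end 200 pixels apart: zoom factor 2.0.
zoom_in = pinch_scale((0, 0), (100, 0), (0, 0), (200, 0))

# Fingers start 100 pixels apart and end 50 pixels apart: zoom factor 0.5.
zoom_out = pinch_scale((0, 0), (0, 100), (0, 25), (0, 75))
```

A real gesture recognizer would also have to track which touch point belongs to which finger from one moment to the next, which this sketch leaves out.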

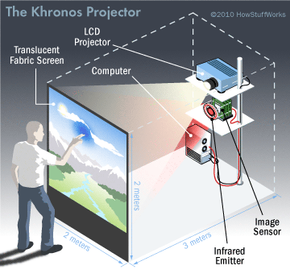

The University of Tokyo's Khronos Projector experiment merges touch-based technology with innovative methods for manipulating prerecorded video. The system includes a projector and camera mounted behind a flexible screen. The projector displays the images, while the camera detects tension changes on the screen. Users can interact with the screen by pressing on it, causing certain parts of the video to speed up or slow down while leaving the rest unchanged.

The Khronos Projector allows users to experience video in entirely new ways, adjusting both space and time. Imagine a video showing two people racing side by side. By pressing on the screen, you could make it appear that one person is leading the other. Moving your hand across the screen would switch their positions. What once followed one set of rules now follows a completely different set [source: University of Tokyo].
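One way to picture the effect is to treat the video as a stack of frames and let each pixel read from a different moment, depending on how hard the screen is pressed at that spot. The sketch below only illustrates that idea and is not the University of Tokyo's implementation; the function name `khronos_frame`, the `depth` map scaled from 0 to 1, and the `max_offset` parameter are all assumptions.

```python
def khronos_frame(video, t, depth, max_offset):
    """Build one output image in which pressed regions show a later moment.

    video      -- list of frames; each frame is a list of rows of pixel values
    t          -- index of the frame shown where the screen is not pressed
    depth      -- per-pixel press depth between 0.0 (untouched) and 1.0 (deepest)
    max_offset -- how many frames ahead the deepest press reaches (assumed)
    """
    last = len(video) - 1
    out = []
    for y, depth_row in enumerate(depth):
        row = []
        for x, press in enumerate(depth_row):
            shift = round(press * max_offset)
            # Clamp so a press near the end of the clip stays inside the video.
            src = min(max(t + shift, 0), last)
            row.append(video[src][y][x])
        out.append(row)
    return out


# A toy 5-frame, 2x2 video in which every pixel stores its own frame index.
video = [[[f, f], [f, f]] for f in range(5)]
depth = [[0.0, 1.0],
         [0.5, 0.0]]

# Untouched pixels show frame 1; the deepest press shows frame 3.
result = khronos_frame(video, 1, depth, 2)
```

In the toy example, the top-right pixel is pressed fully and jumps two frames ahead, while the untouched pixels keep showing the current frame. That is the "one runner pulls ahead of the other" effect in miniature.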

Interacting with screens is just the beginning. Engineers are working on methods that will soon allow us to engage with computers without even touching them.

Hands-off Interfaces

Microsoft's Kinect accessory for the Xbox 360 allows players to enjoy video games without the need for a traditional controller.

Kiyoshi Ota/Getty Images

While some engineers are exploring new ways to interact with computers via touch, others are investigating how we can control them using sound. Voice recognition technology has made impressive strides since 1952, when Bell Laboratories developed a system capable of recognizing digits spoken by a single person [source: Juang and Rabiner]. Nowadays, devices like smartphones can convert voice messages into text with varying degrees of accuracy. Additionally, some applications already enable users to control devices with voice commands.

However, this technology is still in its early stages. We are still learning how to teach computers to recognize and differentiate between various words and commands. Most of these applications operate within a limited range of sounds, so mispronouncing a command may result in the computer ignoring it or executing the wrong action. Solving this problem is challenging, as training computers to process a wide range of sounds and select the best possible response is no simple task.

Other innovators are working on a completely different type of hands-free interface. Oblong Industries developed the g-speak interface. If you've watched the movie "Minority Report," the g-speak interface will look quite familiar. In the film, characters interact with images on a computer screen without physically touching the device. The g-speak system achieves this by using a series of sensors and cameras to detect and interpret a user’s gestures, translating them into commands for the computer. The user wears special gloves equipped with reflective beads, which the cameras track to understand the user’s movements.

The user stands in front of a screen or wall, where projectors display images that can be manipulated by moving hands through three-dimensional space. There's no need to translate commands into computer language or use a mouse in a plane perpendicular to the display – just move your hands to interact with the data [source: Oblong Industries].

Your interactions with computer systems could eventually become passive. Using radio-frequency identification (RFID) tags, you could engage with computer systems simply by being near them. This technology can serve harmless and enjoyable purposes, such as tracking your movements through a space to play your favorite music in each room or adjust the climate control to your preferences. Alternatively, it could be used for surveillance, tracking individuals as they navigate an environment.

It could even assist you in preparing meals. Imagine bringing home a variety of ingredients, each equipped with an RFID tag. Your home's integrated computer system detects the items and realizes you plan to make lasagna. Immediately, the system displays the recipe and asks if you'd like to preheat the oven. Does this feel like a futuristic utopia, or an Orwellian nightmare where stores track every purchase and build profiles on customers?

Or, you may not require RFID at all. Microsoft’s Kinect peripheral for the Xbox 360 uses cameras to scan the area in front of the entertainment center. When a user steps in front of the camera, the system maps their frame and face, creating a profile. From then on, whenever that person enters the frame, the system recognizes them. The profile can store personal preferences and skill levels, so you won’t have to worry about jumping into a game and immediately getting your character defeated.

Although Kinect's early uses are focused on gaming, social networking, and controlling media on your TV, future integrations with other computer systems are possible. Imagine sitting down at a computer, and it automatically adjusts to your preferences. Your favorite bookmarks appear, and the most used applications are at your fingertips. Then, when you leave and a friend sits down, the computer switches to their settings, offering them an entirely different experience.

One potential direction for user interfaces is to go directly to the brain.

I Think, Therefore I Compute

This man is playing pinball by using only his thoughts to control the paddles – is it possible we will soon be able to control computers through our minds?

Sean Gallup/Getty Images

Your brain operates electrically. Neurons, the nerve cells in your brain, communicate through tiny electrical signals. These electrical impulses travel through the dendrites and axons of your nervous system. Every action, whether conscious or subconscious, relies on these nerve cells sending specific electrical signals along the correct pathways.

If we manage to map brain signals, we could design a device that detects, interprets, and translates these signals for controlling external devices. This is what we call a brain-computer interface. Ideally, there would be no barrier between you and the computer – your thoughts would directly transform into commands.

However, this process is far more complex in practice. One challenge is detecting brain activity. Many systems use electroencephalography (EEG) to get a glimpse of what's happening inside your brain. The EEG involves a set of electrodes that must be placed at specific points on your scalp. This setup limits your movement and keeps you tethered to your computer. Moreover, an EEG doesn't provide the clearest signal – to obtain better results, electrodes would need to be implanted directly in the brain. This presents ethical dilemmas and restricts the depth of research in brain-computer interfaces.

Additionally, our brains are intricate, and we are easily distracted. Isolating clear commands from the background noise generated within our minds is a difficult task. It requires extensive effort to fine-tune an interface that enables the computer to distinguish actual commands from all the brain's static.

Programming computers to understand and translate brain signals into commands is also a challenging feat. So far, engineers have succeeded in developing interfaces that respond to basic commands. For example, researchers at the University of Southampton have created a system that enables people to communicate by thought. A participant thinks of an action, like raising their left arm, which represents a predetermined word, such as "zero." The EEG transmits the signals from the brain to a computer. The computer interprets these signals and encodes them as a message, which is sent to a lamp. The lamp blinks in a specific sequence. A second participant observes this sequence while an EEG measures that participant's brainwaves. A second computer decodes the information and interprets it as "zero."

A major downside of this system is that while the second participant receives the message, they cannot understand it on their own. Only with the assistance of a second computer can the message be interpreted. Nevertheless, this experiment may pave the way for future developments that will enable us to control computers and even communicate through mere thought.
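The relay described above can be pictured as a chain of lookups: an imagined movement stands for a digit, the digit becomes a blink pattern, and the receiving computer turns the pattern back into the digit. The sketch below is a toy model of that chain under stated assumptions, not the Southampton researchers' software. The article only says that raising the left arm stands for "zero", so the "right arm" entry, the blink patterns, and the function names `send` and `receive` are hypothetical.

```python
# Assumed mapping: only "left arm" -> 0 comes from the article.
THOUGHT_TO_BIT = {"left arm": 0, "right arm": 1}

# Hypothetical blink patterns (1 = lamp on, 0 = lamp off), one per digit.
BIT_TO_BLINKS = {0: (1, 0, 0, 0), 1: (1, 0, 1, 0)}
BLINKS_TO_BIT = {pattern: bit for bit, pattern in BIT_TO_BLINKS.items()}
PATTERN_LENGTH = 4


def send(thoughts):
    """First computer: turn a list of imagined movements into lamp states."""
    signal = []
    for thought in thoughts:
        signal.extend(BIT_TO_BLINKS[THOUGHT_TO_BIT[thought]])
    return signal


def receive(signal):
    """Second computer: turn the observed lamp states back into digits."""
    bits = []
    for i in range(0, len(signal), PATTERN_LENGTH):
        bits.append(BLINKS_TO_BIT[tuple(signal[i:i + PATTERN_LENGTH])])
    return bits


message = receive(send(["left arm", "right arm", "left arm"]))
```

The sketch skips the hardest parts of the real experiment, namely reading the sender's intention from noisy EEG data and recovering the blink pattern from the receiver's brainwaves. It does show why the receiver needs a computer: the digits exist only after the decoding step.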

Whether it's us doing the work—physically or mentally—or computers figuring out what we need by simply observing us, one thing is clear: the fundamental computer interface is evolving. Could it be that in a generation or two, the keyboard and mouse will be nothing more than relics displayed in a museum?